The Competitive Climate: Ecosystem Pressures and Infrastructure Alliances

Prism did not materialize in a quiet corner of the AI world. Its introduction is set against a backdrop of intense, high-stakes competition and massive infrastructure plays that characterized the year leading up to this moment in early 2026. Understanding the competitive pressure explains the urgency behind the GPT-5.2 and Prism release.

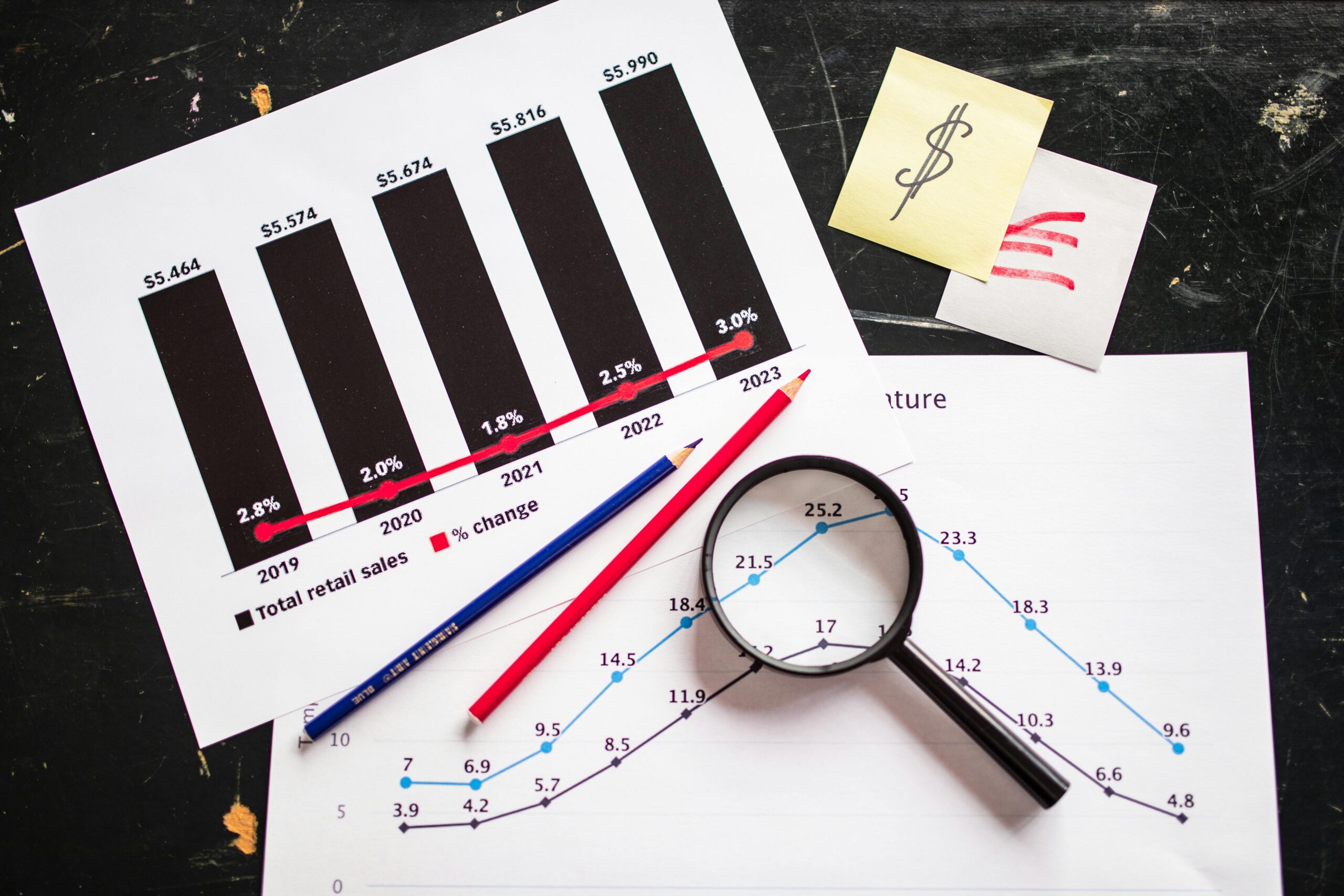

The Capital Race: Strategic Infrastructure Alliances for Scale

The race for advanced AI training and inference capacity has become a defining feature of global technology competition. Throughout the preceding year, reports detailed colossal infrastructure agreements struck with major cloud providers: multi-year commitments worth tens of billions of dollars for raw processing power, specifically massive procurements of cutting-edge Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs).

These partnerships underscore the sheer capital intensity required to maintain a leadership position in frontier AI. Running a sophisticated, context-heavy application like Prism for millions of simultaneous users requires a backbone of staggering scale. Prism’s ability to maintain full manuscript context depends directly on access to this cutting-edge hardware, so the software innovation is intrinsically tied to the AI infrastructure spending race.

Navigating the Multipolar Model Landscape

The development cycle leading up to Prism’s launch was anything but relaxed. Benchmarks throughout 2025 clearly indicated that key rivals were closing the gap, sometimes even surging ahead of the industry leader in specific, narrow multimodal reasoning tasks. This competitive heat—from tech giants deploying increasingly capable and cost-effective alternatives to the industry standard—created an undeniable sense of urgency.

This pressure didn’t just accelerate the launch of a specialized application; it drove the accelerated finalization and release of core model upgrades like GPT-5.2 itself. The strategy is clear: maintain influence across all critical professional fronts—from software engineering (where the revolution happened in 2025) to scientific research (the focus for 2026). The landscape is multipolar, and stagnation is not an option. To remain the standard, you must constantly ship the next required vertical application.

Prism as a Signpost: Reflection of Future AI Trajectory

The launch of this dedicated scientific workspace is more than a product update; it is a clear declaration of the organization’s outlook for the immediate future of AI applications. They are positioning 2026 as the next great inflection point for the technology, much as 2025 was for software development.

The Post-Software Revolution: Focusing on Fundamental Science

The internal narrative framing the Prism release explicitly draws a parallel: AI’s impact on software engineering in 2025 is expected to be mirrored, if not exceeded, by its impact on scientific research in 2026. The belief is that the productivity gains developers saw (writing code faster, debugging quicker, automating boilerplate) will be matched by an acceleration of discovery in fields from molecular biology to astrophysics. Prism is positioned as the leading edge of this scientific transformation, proof that AI is moving beyond mere iteration and into the realm of true acceleration of fundamental human progress.

This is a critical distinction. While many use AI for summaries or simple text editing—tasks that are helpful but incremental—Prism targets the core mechanisms of discovery: hypothesis construction, mathematical verification, and rigorous documentation. This focus on fundamental science represents a mature strategy for AI application.

Anticipated Impact: Across the Entire Empirical Spectrum

The potential reach of a tool designed to streamline documentation and verification is vast. While $\LaTeX$ holds dominance in certain hard sciences due to its formula handling, the underlying principles of Prism (evidence-based documentation, literature synthesis, and clear, consistent communication) are universal to all empirical research.

Consider the breadth of application:

Any discipline that requires the meticulous construction of an evidence-backed narrative—which is essentially all of them—stands to benefit from the reduction of mechanical overhead. This is the promise of tools that move beyond language processing to process knowledge structure.

Operationalizing the Vision: The Rollout and Feedback Engine

A bold vision only becomes a tangible asset through calculated execution. The success of Prism will ultimately be measured not by its feature list on launch day, but by its adoption rate and its measurable contribution to published, verified scientific output. This requires a specific rollout strategy.

Immediate Availability and User Feedback Loops

The decision to make Prism immediately available to the massive base of personal ChatGPT users suggests that the first priority is rapid, large-scale, real-world beta testing. Putting the tool into the hands of active researchers right away secures an invaluable stream of contextual, messy, real-world feedback.

This direct engagement strategy is similar to the iterative processes used to refine developer tools like specialized SDKs or agent frameworks. By gathering input now, the subsequent development—including the design of those promised paid tiers—will be guided by the genuine, day-to-day challenges faced by the target user community. This feedback mechanism is key to cementing Prism’s place as an indispensable asset rather than a fleeting novelty in the scientific toolkit. It’s a recognition that the final shape of the product must be molded by the user, not just the architect. For more on how this iteration impacts product design in AI, see resources on developer tool refinement.

For context on the broader LLM landscape that necessitated this specialized vertical push, one only needs to look at the general capabilities of these models, which are now adept at interpreting unstructured language at scale, moving beyond simple keyword matching to deep context and nuance. Prism applies that general power to a highly specific, high-value problem.

Key Takeaways and Actionable Insights for Researchers

The architecture of Prism marks a definitive endpoint for AI as an external afterthought in research. It’s now internal, structural, and context-aware. Here are your immediate, actionable takeaways as you consider integrating this into your next project:

- Re-Evaluate Context Switching: If your current workflow requires you to manually copy/paste from your writing tool into a separate chat window for AI assistance, you are sacrificing minutes—potentially hours—per day. The primary actionable step is to begin drafting your next section directly within the Prism environment to force yourself into the integrated paradigm.

- Audit Your $\LaTeX$ Dependency: If your work involves heavy mathematical typesetting, Prism’s native cloud $\LaTeX$ foundation immediately solves distribution headaches. Treat local $\LaTeX$ installations and package management as legacy baggage you can now shed.

- Leverage Multimodal Reasoning Early: The ability to convert handwritten notes or whiteboard sketches into functional $\LaTeX$ code is a massive time-saver. Don’t wait until the final draft to experiment with this feature; use it for structuring your initial equation sets.

- Understand the Free Tier Advantage: The free availability for individuals is a strategic opening. Use it now to build proficiency so that when your institution inevitably subscribes to the Enterprise tier, you are already an expert user driving best practices.
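For readers less familiar with what an "initial equation set" looks like in source form, here is a minimal sketch of a numbered, cross-referenced pair of equations. The equations and label names are placeholders chosen purely for illustration. They are not Prism output, and nothing here assumes any Prism-specific syntax; this is plain $\LaTeX$ with the standard `amsmath` package.

```latex
\documentclass{article}
\usepackage{amsmath} % provides the align environment and \eqref

\begin{document}

% A small, numbered equation set; each line gets its own label
% (label names are illustrative placeholders).
\begin{align}
  F &= m a, \label{eq:force} \\
  E_k &= \tfrac{1}{2} m v^{2}. \label{eq:kinetic}
\end{align}

% Cross-references stay correct even if equations are reordered.
Equation~\eqref{eq:force} relates force to acceleration, while
Equation~\eqref{eq:kinetic} gives the kinetic energy.

\end{document}
```

Labeling equations from the first draft keeps cross-references stable as the manuscript grows, which is the kind of internal consistency the takeaways above are concerned with.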

The convergence of the GPT-5.2 reasoning engine with a $\LaTeX$-native cloud environment is not just an iteration on existing tools like Paperpal or SciSpace; it’s a structural leap. It’s an attempt to make the AI partner an inseparable, context-aware part of the manuscript itself. The race is on for researchers to adapt to a world where their writing tool understands their science as deeply as they do.

What is the single most time-consuming mechanical task in your current research pipeline? Is it bibliography management, equation formatting, or maintaining internal consistency across a massive document? Let us know in the comments below how you see this level of architectural integration fundamentally changing your day-to-day work in 2026.
