The Shifting Sands of Intellect: Trust, Enterprise, and the Horizon of Personalized AI

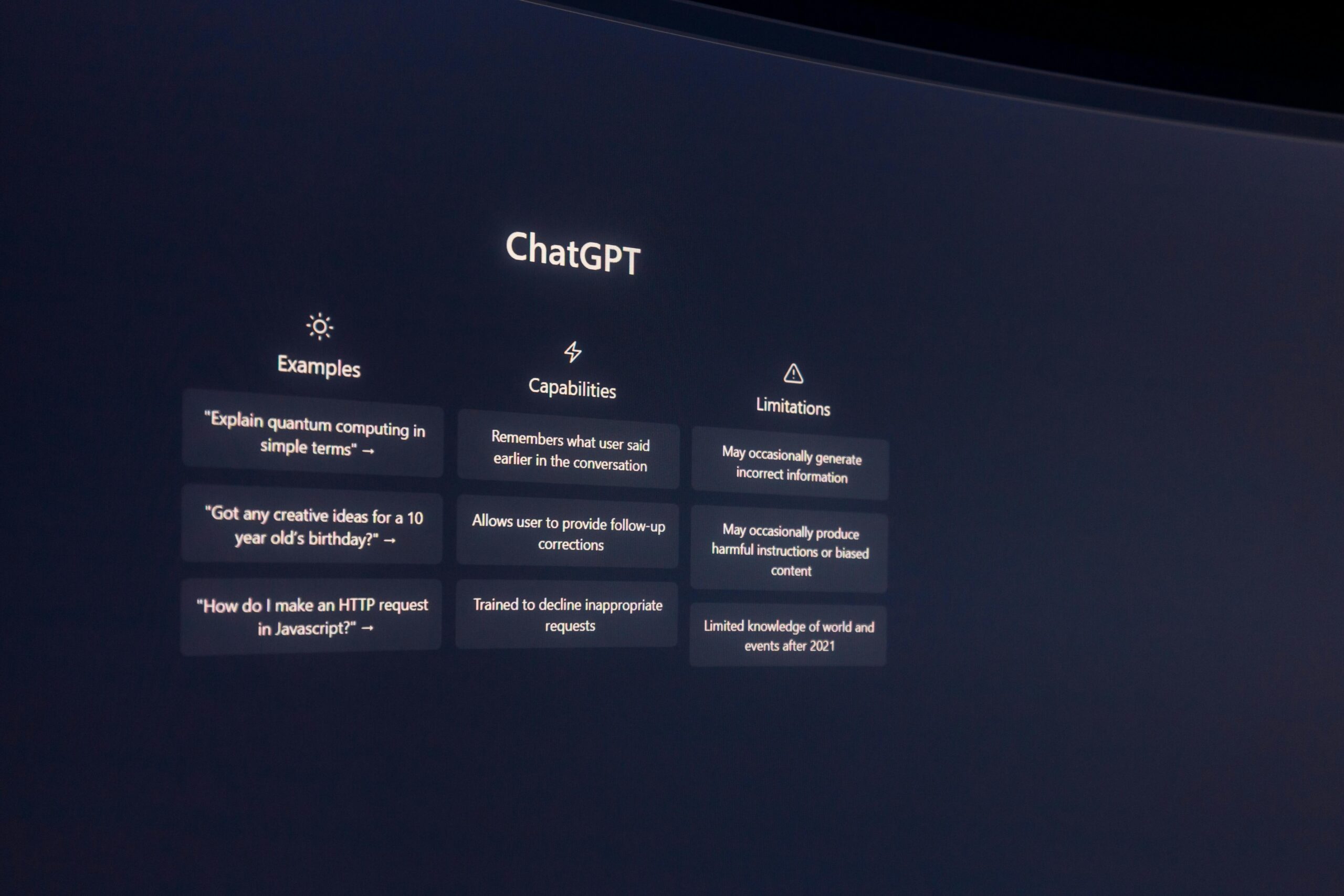

In the evolving narrative of artificial intelligence integration, the path taken by individuals often serves as a crucial counterpoint to sweeping technological tides. Consider the contemporary anecdote of a student in the competitive intellectual environment of elite academia who, surrounded by peers leveraging tools like ChatGPT, chose a path of deliberate refusal. This decision, once a matter of personal conviction, now serves as a lens through which to examine the shifts in trust and ethical boundaries under way across the professional and academic worlds as of February 2026.

The New Landscape of Trust and Ethical Boundaries

The period between 2024 and early 2026 has been characterized not by questions about AI’s *capability*, but by the rigorous, often painful process of establishing *trust* in its outputs and *governance* around its deployment. The days of treating large language models (LLMs) as mere novelties or laboratory curiosities are long gone; they are now fundamental infrastructure.

Navigating the Confidence-Accuracy Chasm

Despite the exponential growth in model sophistication—with reasoning capabilities advancing in successive foundational releases—a core systemic challenge persists: the confidence-accuracy chasm. This phenomenon describes the psychological and technical gap where a model’s highly polished, authoritative presentation of information frequently outpaces its factual grounding in reality.

Research conducted as recently as early 2025 underscored this disconnect, identifying what cognitive scientists termed the “calibration gap”: the misalignment between what LLMs actually know and what users *believe* the models know. Users often overestimated LLM accuracy, which led them to rely on responses that were, in effect, “confidently wrong”. This risk is particularly pronounced in high-stakes environments, from medicine to law, where systematic miscalibration can have severe consequences.
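One common way to put a number on miscalibration is expected calibration error (ECE), which compares stated confidence with observed accuracy inside confidence bins. The sketch below is illustrative only: the function name, the bin count, and the sample values are assumptions, not data from the research mentioned above, and it measures the model's own calibration rather than what users believe.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    n_bins: int = 5,
) -> float:
    """Weighted average of |mean confidence - accuracy| over confidence bins."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    if not confidences:
        return 0.0

    # Group each (confidence, outcome) pair into an equal-width bin on [0, 1].
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_conf = sum(conf for conf, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(mean_conf - accuracy)
    return ece


# Hypothetical "confidently wrong" model: 90% stated confidence, 25% accuracy.
overconfident = expected_calibration_error(
    [0.9, 0.9, 0.9, 0.9], [True, False, False, False]
)
print(f"ECE: {overconfident:.2f}")
```

A score near zero means stated confidence tracks accuracy; a large score, as in the made-up example, is the numerical signature of a confidently wrong system.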

The industry’s response to this trust deficit has been multi-pronged. A critical development has been the integration of explicit uncertainty communication. Studies have demonstrated that when prompts are adjusted so that responses carry phrasing linked to the model’s internal confidence, such as “I am not sure the answer is A” versus “I am sure the answer is A”, human confidence in the response shifts accordingly, allowing users to better distinguish fact from plausible fiction. This has evolved into a necessary meta-skill for the modern knowledge worker: not just knowing *how* to prompt, but knowing *how to read the model’s uncertainty profile*.
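The idea of tying wording to confidence can be sketched in a few lines. This is a toy illustration, not a description of any vendor's implementation: the function name and the 0.9 and 0.6 thresholds are assumptions chosen for the example, and obtaining a trustworthy confidence score in the first place is the hard part that the sketch leaves out.

```python
def phrase_with_confidence(answer: str, confidence: float) -> str:
    """Wrap an answer in wording that reflects a confidence score in [0, 1]."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    # Thresholds are illustrative assumptions, not values from the studies cited.
    if confidence >= 0.9:
        return f"I am sure the answer is {answer}."
    if confidence >= 0.6:
        return f"I think the answer is {answer}, but I am not certain."
    return f"I am not sure the answer is {answer}."


print(phrase_with_confidence("A", 0.95))
print(phrase_with_confidence("A", 0.40))
```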

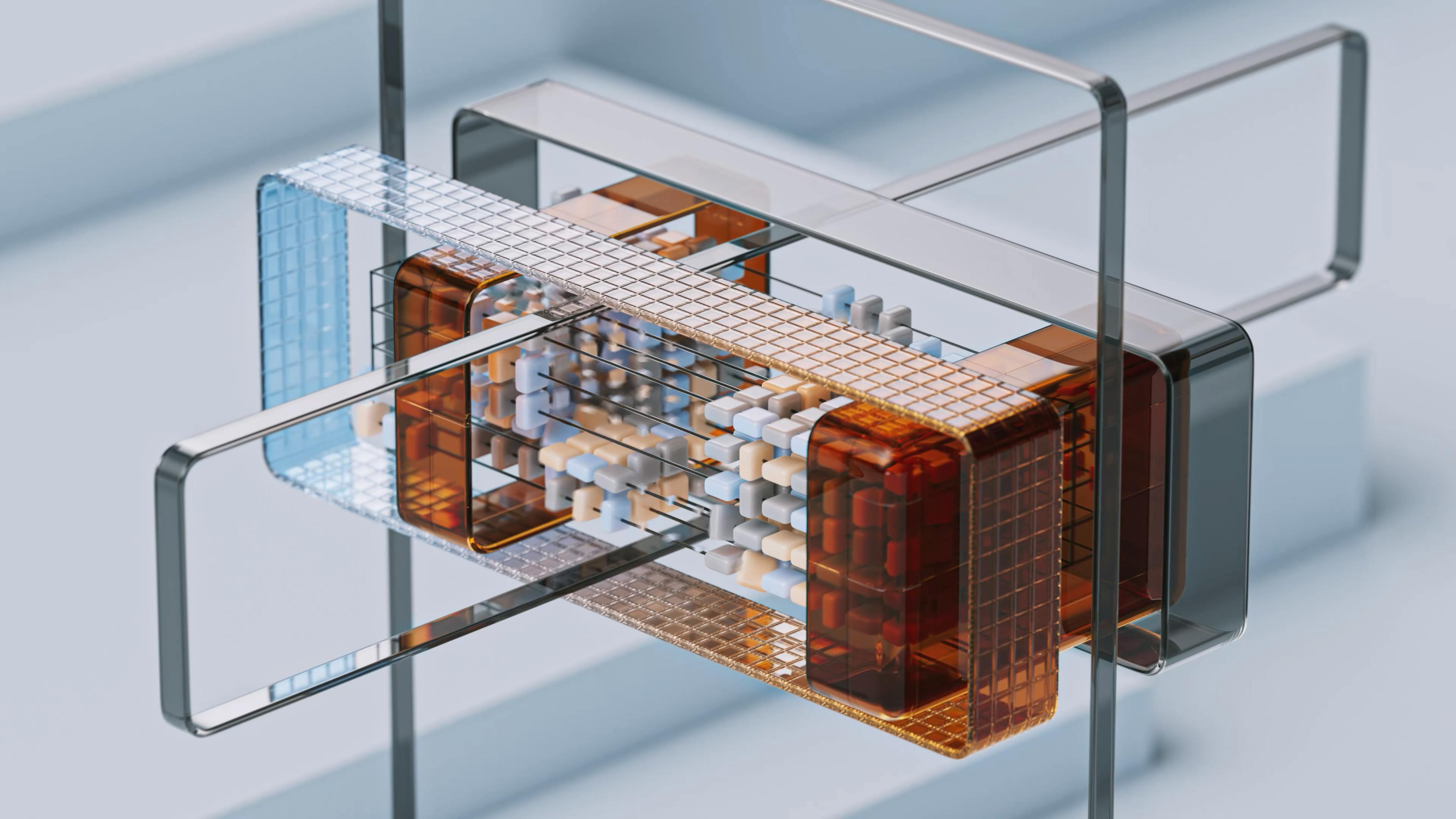

Furthermore, the architectural shift toward modular, multi-component foundation systems, beginning to define the frontier research of 2026, is explicitly designed to narrow this gap. These new architectures incorporate separate modules for verification, safety checking, and factual grounding, moving away from the single-shot predictor model to create systems that inherently promise greater reliability and long-horizon reasoning. The adoption of sophisticated techniques like Confidence-Informed Self-Consistency (CISC), which prioritizes high-confidence reasoning paths, further illustrates this technical drive to align demonstrated capability with expressed certainty.
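The core idea behind confidence-informed voting can be shown with a small sketch: sample several reasoning paths, attach a confidence score to each, and let a confidence-weighted vote pick the final answer instead of a plain majority. The code below is a minimal sketch under stated assumptions, not the published CISC implementation: the function name, the softmax-style weighting with a `temperature` parameter, and the sample scores are all illustrative, and how confidence is elicited from the model is out of scope.

```python
import math
from collections import defaultdict


def confidence_weighted_vote(
    samples: list[tuple[str, float]],
    temperature: float = 1.0,
) -> str:
    """Pick the answer whose sampled paths carry the most confidence weight.

    samples: (final_answer, confidence) pairs, one per sampled reasoning path.
    temperature: lower values let high-confidence paths dominate the vote.
    """
    if not samples:
        raise ValueError("at least one sample is required")
    if temperature <= 0:
        raise ValueError("temperature must be positive")

    # Softmax-style weights; subtracting the max keeps exp() numerically stable.
    max_conf = max(conf for _, conf in samples)
    weights = [math.exp((conf - max_conf) / temperature) for _, conf in samples]

    totals: dict[str, float] = defaultdict(float)
    for (answer, _), weight in zip(samples, weights):
        totals[answer] += weight
    return max(totals, key=totals.get)


# Hypothetical paths: two low-confidence votes for "A", one confident vote for "B".
paths = [("A", 0.2), ("A", 0.3), ("B", 0.95)]
print(confidence_weighted_vote(paths, temperature=0.1))    # sharp weighting
print(confidence_weighted_vote(paths, temperature=100.0))  # close to majority vote
```

With a very high temperature the weights flatten and the method falls back toward ordinary self-consistency, which is why the temperature is worth tuning rather than fixing.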

For the individual scholar, like the student who chose unassisted work, the challenge remains one of discernment. For the enterprise, the challenge is one of enforceable standards. The gulf between a highly satisfactory *feeling* of helpfulness and the absolute *trust* required for mission-critical execution is the primary barrier to full operationalization.

Corporate Penetration and the Enterprise Adoption Imperative

While academic institutions wrestled with policies on originality, the professional world adopted LLMs almost universally. As of early 2026, the enterprise landscape is defined by an adoption imperative, driven by tangible productivity gains that have become sector-wide necessities. The enterprise LLM market was projected to surpass $8.8 billion in 2025 and to reach $71.1 billion by 2034, signaling a move from experimental pilots to essential business infrastructure.
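To make the scale of that projection concrete, the implied compound annual growth rate can be worked out directly. The sketch assumes the 2025 figure is exactly $8.8 billion (the text says only that the market would surpass it) and that growth compounds annually over the nine years to 2034; the function name is illustrative.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that turns start_value into end_value."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures in billions of US dollars, taken from the projection above.
rate = implied_cagr(8.8, 71.1, 2034 - 2025)
print(f"Implied annual growth: {rate:.1%}")
```

Under these assumptions the projection works out to growth of roughly 26% a year, sustained for nearly a decade.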

The Consolidation of Governance and Data Security

This widespread integration has necessitated a dramatic evolution in IT and compliance structures. Budgets are increasingly leaning toward data foundations, integration, and guardrails, as these elements are recognized as the true unlockers of sustainable value, moving the focus away from ephemeral “AI theater”.

Key trends shaping this environment include:

- Retrieval-Augmented Generation (RAG) as Standard: The default architectural pattern is now RAG, which grounds model outputs in a company’s own trusted data sources, held outside the model, rather than relying solely on the model’s internal, potentially outdated, memorized knowledge. This pattern is critical for keeping sensitive data out of the training set and for providing auditable provenance for outputs.

- Convergence of AI and Data Governance: Enterprise data governance is now inextricably linked with AI governance. Frameworks must address model transparency, ethical application, and risk control in real time. With regulatory actions up by more than 21% across nations in 2024, comprehensive, legally sound frameworks are no longer optional.

- Shifting Vendor Trust: While OpenAI maintains a strong presence, with 78% of surveyed enterprise CIOs using its models in production, competitors are closing the gap rapidly. Anthropic, in particular, posted the largest share increase since mid-2025, reflecting growing enterprise comfort with diversified, often specialized, vendor stacks.
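The RAG pattern described in the first trend above can be sketched without any particular vendor or library. The example is a toy under stated assumptions: the `Document` type, the keyword-overlap scoring, and the sample corpus are all hypothetical stand-ins, and a production system would typically use embedding-based search and send the assembled prompt to a model, a step that is omitted here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str  # identifier kept with the text so answers can cite their source
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase words with surrounding punctuation stripped."""
    return {word.strip(".,;:!?").lower() for word in text.split()} - {""}


def retrieve(query: str, corpus: list[Document], k: int = 2) -> list[Document]:
    """Return up to k documents that share the most words with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc.text)), doc) for doc in corpus]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in relevant[:k]]


def build_prompt(query: str, sources: list[Document]) -> str:
    """Assemble a prompt that restricts the model to the cited sources."""
    context = "\n".join(f"[{doc.doc_id}] {doc.text}" for doc in sources)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"{context}\n"
        f"Question: {query}"
    )


# Hypothetical internal knowledge base.
corpus = [
    Document("policy-7", "Refunds are issued within 14 days of purchase."),
    Document("hr-2", "Vacation requests need manager approval."),
]
question = "How many days do refunds take?"
print(build_prompt(question, retrieve(question, corpus)))
```

Because every retrieved passage carries its identifier into the prompt, the output can be traced back to a specific source, which is the auditable provenance the pattern is valued for.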

The use of specialized, secured instances—whether hosted directly by the enterprise or through dedicated cloud services—is commonplace for handling proprietary or regulated data. The focus for CIOs and CTOs in 2026 is on building production-grade systems that align with measurable business outcomes, demanding rigor where once there was experimentation.

This enterprise adoption contrasts sharply with the solitary student’s refusal. Where the student sought purity of unassisted thought, the corporation seeks verifiable, auditable, and compliant productivity gains at scale, embedding AI into mission-critical workflows like finance, operations, and service delivery.

Looking Ahead: The Horizon of Personalized Intelligence

The immediate future of LLMs is defined by a departure from the generalized, monolithic system toward deep specialization, driven by both user demand and technological capability. The next leap is moving the AI relationship from that of a competent generalist to an indispensable, context-aware specialist.

The Customization Frontier: Tailoring Models to Individual Needs

The era of basic prompt engineering is giving way to the Customization Frontier. In 2026, the technological landscape empowers users and enterprises to deeply tailor base models into proprietary assistants that operate with a profound understanding of niche contexts. This shift is driven by the proliferation of capabilities enabling custom model building and specialized plug-in ecosystems.

This deep personalization offers significant advantages over general models:

- Knowledge Embedding: Users can now embed proprietary knowledge bases, idiosyncratic company vernacular, and accumulated expertise directly into the model’s operational core, creating an assistant with institutional memory.

- Stylistic Consistency: Models are being refined to adhere to specific organizational tones, legal requirements, or creative styles, ensuring outputs are not just factually correct but stylistically compliant—a crucial element for external communications.

- Hyper-Personalization in Practice: In commercial applications, this translates to hyper-personalization moving beyond simple segmentation. Experts predict algorithms analyzing consumption patterns in real-time, cross-referencing them with contextual signals like geolocation to anticipate needs before they are expressed. This 1:1 experience, once reserved for the largest firms, is now accessible via lower-code AI tools leveraging high-quality first-party data.

This trend is reshaping the very notion of intellectual property and proprietary advantage. The companies that successfully apply customized and reliable models will be the ones that differentiate themselves in an environment saturated with generalized AI-generated content. The AI is evolving from a tool we consult into a specialized digital extension of the user or the enterprise.

The Coming Era of Near-Human Level Reasoning Capabilities

As the industry fixes its gaze on the next foundational releases promised for the latter half of 2026, the central expectation is a qualitative leap in synthetic reasoning. The scaling laws based purely on data and parameter count, which defined the preceding years, are reportedly hitting diminishing returns.

The industry focus has pivoted to architectural innovation:

- Foundation Systems Over Monolithic Models: The coming generation of AI is expected to be built upon modular “Foundation Systems”, orchestrated combinations of specialized models: one for generation, one for verification, one for planning, and so on. This system-level approach is projected to deliver the long-sought combination of factual grounding, tool execution, and complex, long-horizon reasoning that a single transformer architecture has struggled to provide on its own.

- The Primacy of Context and Auditability: For specialized domains, the differentiator in 2026 is no longer raw model capability but contextual completeness and trust. In fields like legal analysis, for instance, models must be grounded in authoritative, current legal precedent and provide a transparent audit trail for every conclusion, moving beyond fluency to an “architecture of trust”.

- Focus on Agentic Workflows: Attention is shifting to AI agents capable of sustained, multi-step interaction, planning, and action, rather than just single-shot question answering. This requires advanced capabilities in memory, self-healing workflows, and multi-agent collaboration.
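The trends above share one structural idea: a generator proposes, a separate verifier checks, and the system retries while recording every step for audit. A minimal sketch of that loop follows. Everything in it is an assumption made for illustration: the `run_pipeline` name, the callable signatures, and the toy arithmetic generator and verifier, which stand in for what would be separate models or tools in a real system.

```python
from typing import Callable, Optional

Generator = Callable[[str, int], str]  # (task, attempt number) -> draft answer
Verifier = Callable[[str, str], bool]  # (task, draft) -> passes the check?


def run_pipeline(
    task: str,
    generate: Generator,
    verify: Verifier,
    max_attempts: int = 3,
) -> tuple[Optional[str], list[dict]]:
    """Generate, verify, and retry, keeping an audit trail of every attempt."""
    audit_log: list[dict] = []
    for attempt in range(1, max_attempts + 1):
        draft = generate(task, attempt)
        passed = verify(task, draft)
        audit_log.append({"attempt": attempt, "draft": draft, "verified": passed})
        if passed:
            return draft, audit_log
    # No draft passed verification; the caller decides how to escalate.
    return None, audit_log


def toy_generate(task: str, attempt: int) -> str:
    """Stand-in generator that gets the sum wrong on its first attempt."""
    return "2 + 2 = 5" if attempt == 1 else "2 + 2 = 4"


def toy_verify(task: str, draft: str) -> bool:
    """Stand-in verifier that recomputes the sum independently."""
    left, _, right = draft.partition("=")
    a, _, b = left.partition("+")
    try:
        return int(a) + int(b) == int(right)
    except ValueError:
        return False


answer, log = run_pipeline("What is 2 + 2?", toy_generate, toy_verify)
print(answer)
for entry in log:
    print(entry)
```

Keeping the verifier independent of the generator is the design choice that matters here: the retry supplies a simple form of self-healing, and the log supplies the audit trail.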

This trajectory signals a future where the definition of “unassisted scholarship”—the stance taken by the student who refused AI—may fundamentally change. When AI systems exhibit near-human level reasoning, are demonstrably grounded in verifiable data, and can externalize their entire decision-making process for audit, the ethical boundary shifts yet again. The debate will move from whether one should use the tool to whether one can afford *not* to use a tool so capable that it elevates the baseline for all intellectual output.

The landscape of 2026 is one of measured adoption, deep integration, and architectural revolution. The initial phase of raw capability has matured into a complex engineering discipline focused on governance, trust, and ultimate contextual relevance. The refusal of the past is giving way to the indispensable integration of the future.