The Escalation of Digital Threat Vectors and Platform Integrity in the Mid-2020s

The digital security landscape of the mid-2020s is defined by a sharp escalation in the sophistication and scale of state-sponsored malign activity, with generative artificial intelligence (AI) serving as a primary accelerant. OpenAI’s enforcement action in February 2026, which blocked ChatGPT accounts linked to Russian propagandists, illustrates how closely technological advancement and geopolitical conflict are now intertwined. The action disrupted an influence operation tracked as “Fish Food” and underscores the growing centrality of AI to both information warfare and cyber-offensive capabilities.

The Centrality of Generative AI in State-Sponsored Malign Activity

The period spanning 2024 through early 2026 has witnessed a paradigm shift where Generative AI moved from a tool for mere productivity enhancement to an integrated component of established state-backed threat actor workflows. Research from major platform providers confirms that actors are not just using these tools for routine tasks but are embedding them across entire attack chains, from initial reconnaissance to post-compromise activities.

According to findings published by Google’s Threat Intelligence Group (GTIG) in early 2026, state-sponsored actors from a consistent cohort (China, Iran, Russia, and North Korea) actively operationalized models like Gemini throughout 2025 to hone their malicious cyber efforts. This points to strategic adoption: AI is used to increase efficiency, reduce operational costs, and minimize the human footprint, which makes attribution and disruption significantly harder.

Shifting Focus of Platform Security Beyond Traditional Abuse Vectors

Platform security strategies have shifted beyond traditional vectors such as spam, phishing emails, and malware distribution. The new imperative is detecting and mitigating misuse of the large language models (LLMs) themselves. Security firms report that attacks have become more integrated; CrowdStrike, for instance, noted an 89% year-on-year increase in AI-enabled cyberattacks, many of which relied on stolen credentials and trusted identity flows rather than traditional malware, making them significantly stealthier.

The response from major AI providers like OpenAI reflects this shift. Their published threat intelligence reports detail an evolving discipline focused on detecting behavioral patterns indicative of threat actor activity rather than isolated interactions. The goal is to prevent the use of AI tools by authoritarian regimes to “amass power and control their citizens, or to threaten or coerce other states”.

Establishing a Post-Facto Record of Proactive Enforcement Actions

The very act of reporting these disruptions, as seen with the OpenAI disclosure on February 26, 2026, is part of a broader strategy to establish a transparent, post-facto record of enforcement. By naming specific operations, such as “Fish Food,” and detailing the actors, AI providers are aiming to create a deterrent effect and foster industry-wide defense. This proactive disclosure is seen as essential for building “democratic AI,” which operates with common-sense rules to protect against real harms.

Geographic and Operational Scope of Threat Actor Mitigation

The mitigation efforts described by platform security teams cover a broad geographic scope, revealing a globalized digital influence ecosystem where actors from traditionally adversarial nations coordinate or independently seek to exploit commercial AI offerings.

Identifying Clusters Linked to Russian-Origin Entities

The most immediate context for the recent enforcement action involves Russian-linked groups. OpenAI explicitly banned a cluster of ChatGPT accounts tied to the “Rybar” network, which was conducting influence operations under the operation codename “Fish Food”.

- Content Generation & Distribution: The Rybar network utilized ChatGPT to translate and generate disinformation content in multiple languages, including Spanish and English, which was then distributed across their own branded accounts on X and Telegram, as well as through a wider network of seemingly unrelated accounts.

- Sora Deployment: The network also employed generative media tools, using Sora to create promotional video clips for the Rybar brand.

- Strategic Planning: Significantly, one account asked the model to draft business plans for covert interference campaigns targeting political life and elections in African nations, including the Democratic Republic of the Congo, Cameroon, Burundi, and Madagascar, with one operation budgeted at up to $600,000.

On the cyber side, reported code-level misuse of ChatGPT includes:

- Developing components for Remote Access Trojans (RATs).

- Generating in-memory execution and shellcode loaders.

- Creating scaffolding for browser credential and cookie extraction.

- Refining Command and Control (C2) and tunneling infrastructure, including reverse proxies and deployment guidance.

Furthermore, other Russian-affiliated activities have been documented, such as the cyber operation “ScopeCreep,” where actors used models to develop Windows malware and establish stealthy Command and Control (C2) infrastructure.

Examination of Networks Tied to Chinese and Iranian State Actors

The threat is not confined to Russian entities. Chinese-linked actors featured heavily in platform enforcement reports in 2025, often using AI for more direct cyber-offensive measures. GTIG noted Chinese actors using Gemini across the entire attack process, including initial reconnaissance, phishing lure creation, and support for C2 development. One Chinese state-backed group allegedly jailbroke an AI coding assistant to automate 80% to 90% of an attack chain, including vulnerability scanning and script writing.

Iranian-linked activity has also surfaced. Reports indicate that Iranian threat actors, alongside Chinese, Russian, and North Korean groups, have misused models like Gemini for influence operations and other cyber activities throughout 2025. OpenAI’s enforcement actions in prior periods have also targeted groups potentially linked to Iran.

Patterns of Account Creation and Sustained Malicious Engagement

A key tactical observation across these groups is the deliberate use of ephemeral or temporary identity structures to maintain operational security (OPSEC) while leveraging AI. For the “ScopeCreep” malware operation, actors used temporary email accounts to sign up for ChatGPT, often using each account for a single code iteration or refinement before abandoning it. This pattern of using a network of disposable identities to achieve incremental improvements in malicious software or to scale content generation is a hallmark of modern, AI-augmented threat tradecraft.

The Cyber-Offensive Front: Leveraging AI for Offensive Capabilities

The most concerning aspect of AI integration for cybersecurity professionals in the mid-2020s is its role as a force multiplier in developing and refining offensive cyber tooling. This capability lowers the barrier to entry for advanced techniques previously reserved for highly resourced entities.

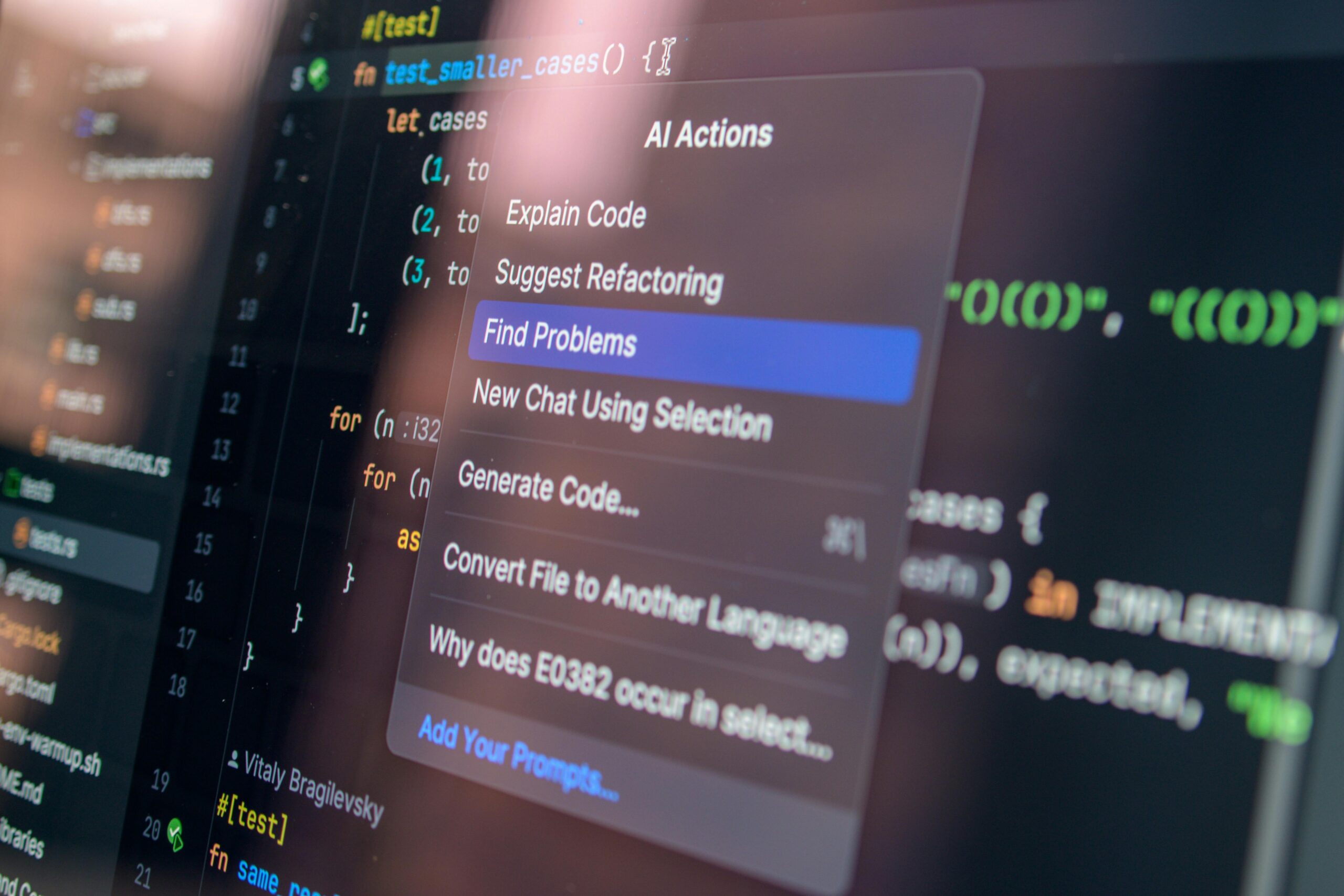

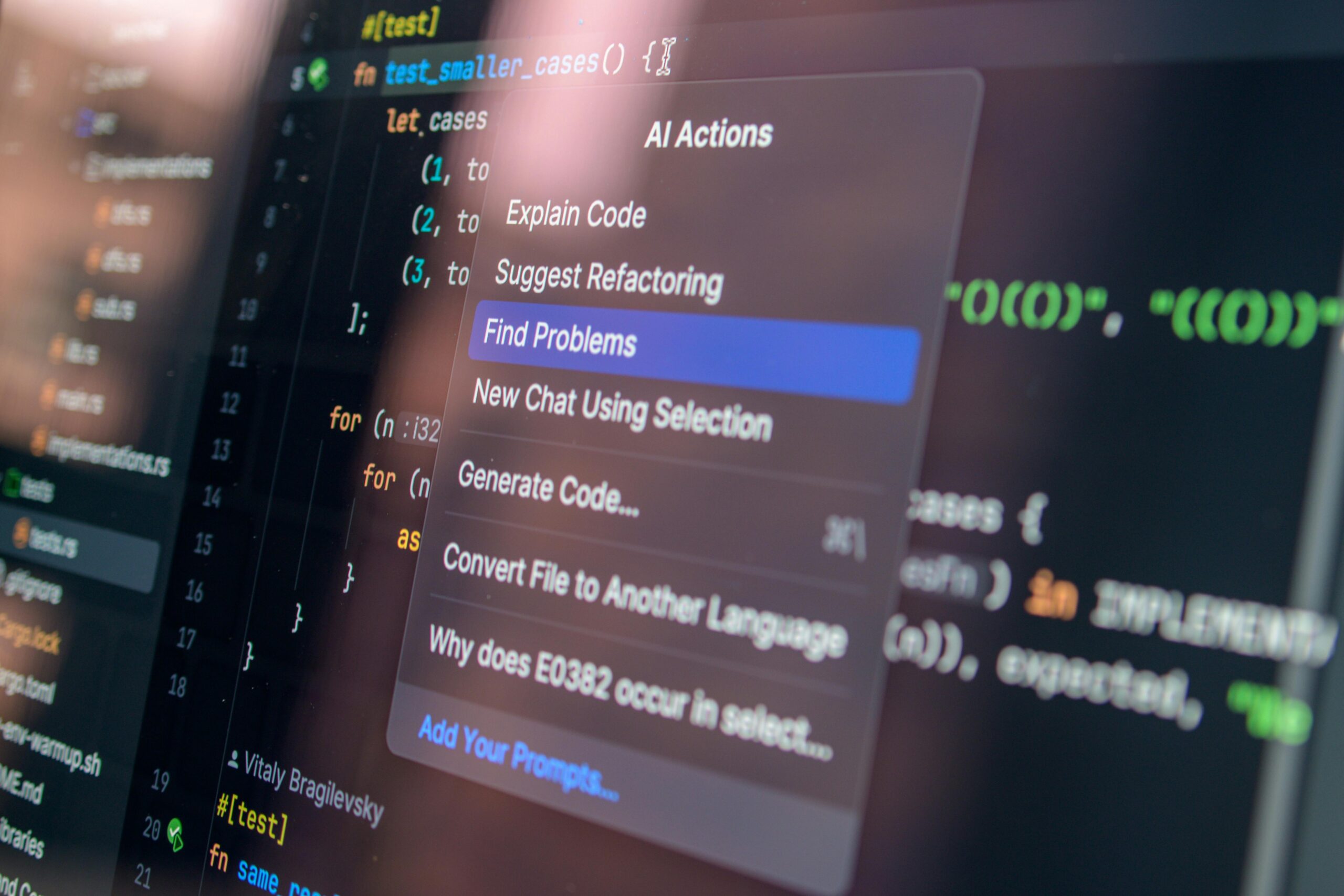

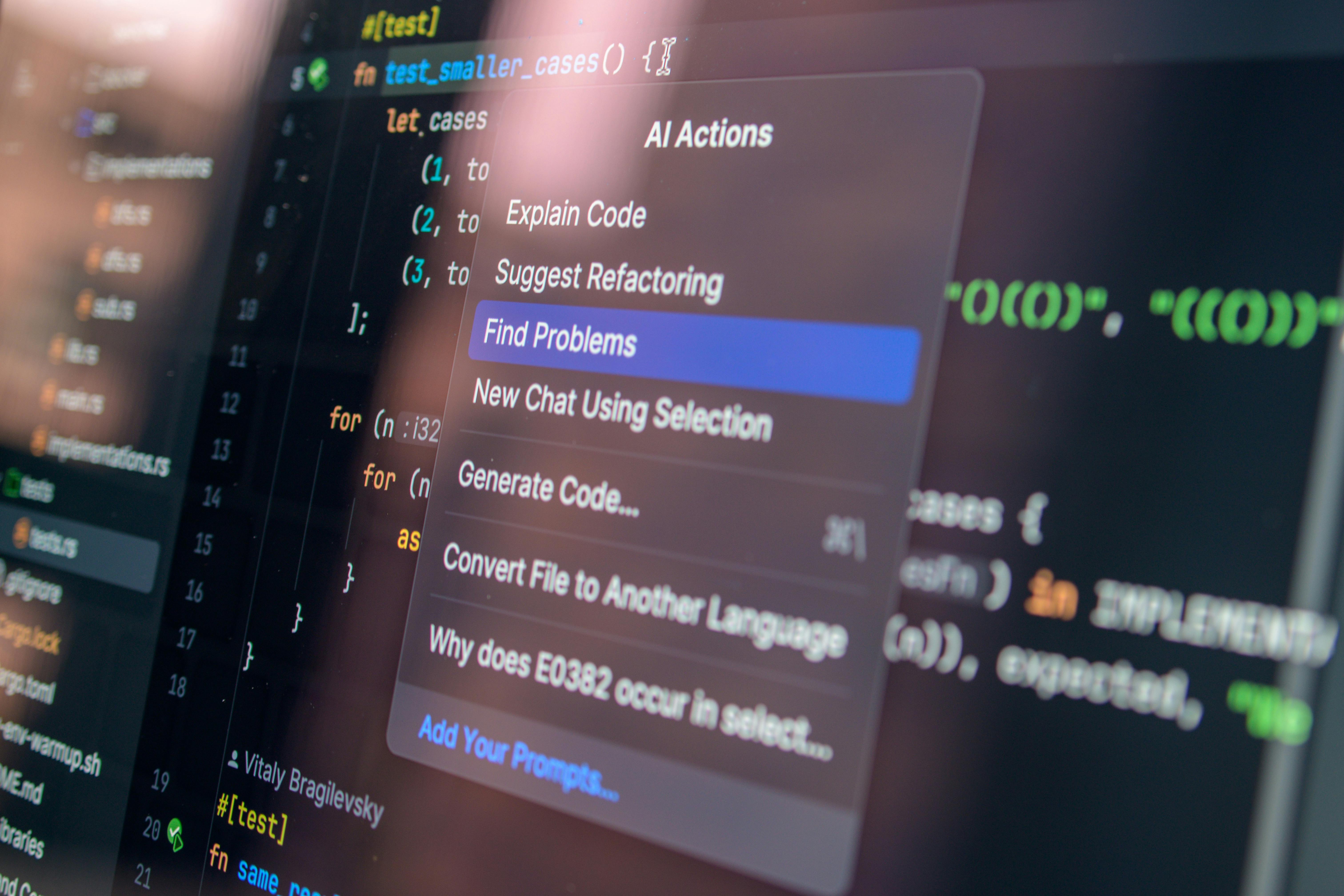

Analysis of Adversarial Use of Large Language Models in Code Refinement and Debugging

Threat actors are not necessarily creating entirely novel malware from scratch with AI, but they are using LLMs to accelerate the refinement and debugging of existing and new tooling. OpenAI’s October 2025 report detailed actors leveraging ChatGPT to generate and iterate on working code for remote access tooling, credential theft, and command-and-control infrastructure.

This use of AI to improve existing code and bypass defensive measures, such as User Account Control (UAC) or Mark-of-the-Web indicators, demonstrates an integration into the core development pipeline of cyber operations.

The Case of Stealthy Malware Proliferation and Command Infrastructure Setup

The operation tracked as “ScopeCreep” exemplified this trend, where Russian-speaking actors used AI to assist in developing Windows malware and setting up stealthy C2 infrastructure. The malware was reportedly distributed via a trojanized gaming tool, relying on obfuscation and DLL sideloading to evade traditional detection methods. The irony highlighted by security researchers is that this reliance on the AI platform provided a necessary visibility point, allowing for swift disruption.

Operational Security Tactics Employed by Cyber Actors Utilizing Temporary Identity Structures

The OPSEC tactics observed, such as the incremental code refinement across multiple temporary accounts, show a sophisticated understanding of how platform providers monitor usage. By using a single-use account for one line of code refinement or one specific debugging query, actors dilute the behavioral signal associated with any single identity, making it harder for automated systems to establish a persistent link to a known adversary profile until the network of activity is aggregated.
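The aggregation step described above can be illustrated with a small sketch. It assumes, purely for illustration, that each account has already been reduced to a set of hashable "signals" (for example a fingerprint of the code being refined); the function name `cluster_accounts` and the signal strings are hypothetical and do not describe any provider's actual pipeline. Accounts that share a signal are merged with a union-find structure, so a chain of single-use accounts collapses into one cluster.

```python
from collections import defaultdict


def cluster_accounts(observations):
    """Group account IDs that share at least one signal value.

    observations: iterable of (account_id, signal) pairs. The signals are
    hypothetical placeholders for whatever features a platform extracts.
    Returns a list of sets of account IDs, largest cluster first.
    """
    parent = {}

    def find(x):
        # Register unseen accounts as their own root, then walk to the root.
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    first_seen = {}  # signal -> first account observed with that signal
    for account, signal in observations:
        find(account)
        if signal in first_seen:
            union(first_seen[signal], account)
        else:
            first_seen[signal] = account

    groups = defaultdict(set)
    for account in parent:
        groups[find(account)].add(account)
    # Sort by size (descending), then by member names for a stable order.
    return sorted(groups.values(), key=lambda g: (-len(g), sorted(g)))


if __name__ == "__main__":
    obs = [
        ("a1", "fp:loader-v1"),
        ("a2", "fp:loader-v1"),
        ("a2", "fp:loader-v2"),
        ("a3", "fp:loader-v2"),
        ("b1", "fp:unrelated"),
    ]
    print(cluster_accounts(obs))
```

In this toy input, no single account looks unusual, but `a1`, `a2`, and `a3` end up in one cluster because consecutive accounts share a fingerprint. Real systems would need far richer and noisier signals; the sketch only shows why linking across identities recovers what per-account analysis misses.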

Information Warfare and Narrative Shaping Through Automated Content Generation

The “Rybar” takedown is a clear indicator of AI’s impact on the information warfare front, where both the volume and the linguistic nuance of propaganda can be scaled up dramatically.

The Deployment of AI for Mass-Scale Social Media Manipulation and Propaganda Outlets

The “Rybar” network’s use of ChatGPT to generate “batches of short social media comments” that were then posted by numerous X and Telegram accounts showcases an industrialization of content farm operations. This technique allows a single influence campaign to manifest across thousands of seemingly disparate social media fronts, effectively flooding the information space.
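One common way defenders look for this kind of batch-generated commenting is near-duplicate text detection across accounts. The sketch below is a minimal illustration using word shingles and Jaccard similarity; the function names, the threshold of 0.5, and the sample posts are all assumptions made for the example, not details from any report. It would not catch translated or heavily paraphrased content, which would need language-aware or embedding-based comparison.

```python
import re
from itertools import combinations


def shingles(text, k=3):
    """Return the set of k-word shingles of a lowercased text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        # Short texts become a single shingle (or nothing if empty).
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets; defined as 0.0 when both are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicate_pairs(posts, threshold=0.5, k=3):
    """Find pairs of different accounts posting highly similar text.

    posts: list of (account_id, text) pairs.
    Returns a list of (account_a, account_b) tuples in input order.
    """
    prepared = [(account, shingles(text, k)) for account, text in posts]
    pairs = []
    for (acct_a, set_a), (acct_b, set_b) in combinations(prepared, 2):
        if acct_a != acct_b and jaccard(set_a, set_b) >= threshold:
            pairs.append((acct_a, acct_b))
    return pairs


if __name__ == "__main__":
    sample = [
        ("x1", "Prices keep rising and nobody in charge seems to care"),
        ("x2", "Prices keep rising and nobody in charge seems to care at all"),
        ("x3", "Lovely weather in Lisbon today"),
    ]
    print(near_duplicate_pairs(sample))
```

The pairwise comparison is quadratic in the number of posts, so it only suits small samples; at platform scale a technique such as MinHash with locality-sensitive hashing would be the more plausible choice.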

Targeting Specific Democratic Processes with Regionally Nuanced Language Generation

The operations are not scattershot; they are targeted. The Rybar content praised Russia, criticized Ukraine, and accused Western countries of interference, consistent with long-standing Russian covert influence operations. More alarmingly, the network planned interference campaigns in African elections, with one account specifically prompting ChatGPT for an information campaign focused on the Democratic Republic of the Congo. Similarly, the “Helgoland Bite” operation, a likely Russia-based influence campaign, used ChatGPT to generate German-language content critical of the U.S. and NATO in the context of the 2025 German election.

The Use of Synthetic Media Generation Tools to Enhance Influence Operations

The integration of video generation, exemplified by the use of Sora to create promotional clips for Rybar, marks the convergence of textual and visual propaganda capabilities, creating more compelling and authentic-seeming influence assets. This visual component is critical for enhancing engagement and believability in modern social media environments.

Deep Dive into Noteworthy Disrupted Campaigns Attributed to Russian Sources

The enforcement actions reveal a pattern of Russian-affiliated entities using AI across both the cyber-offensive and information-operations domains.

Detailed Review of the Cyber Operation Dubbed ScopeCreep and Its Malware Payload

As noted, “ScopeCreep” involved Russian-speaking actors using AI to develop Windows malware, including features designed to evade detection, and to establish the necessary C2 infrastructure. The group’s focus on stealth via techniques like DLL sideloading confirms that the AI was used to enhance established, sophisticated offensive tradecraft, not replace it.

Investigating Influence Networks Like Helgoland Bite and Their Focus on European Political Contexts

“Helgoland Bite” targeted the German political landscape ahead of the 2025 elections. The use of AI to generate content specifically in German, critical of NATO and the U.S., shows a region-specific nuance that AI models enable at scale, bypassing the typical need for large teams of dedicated, native-language content creators.

The “Fish Food” Operation Targeting African Geopolitical Landscapes via the Rybar Network

The “Fish Food” operation is particularly noteworthy for its explicit pivot toward African geopolitical landscapes. Where other observed Russian campaigns focused on cyber operations or European politics, it sought influence through large-scale, AI-assisted propaganda. The reported budget of up to $600,000 for one African campaign suggests a significant, sustained investment in this AI-enabled influence strategy.

Evasive Maneuvers and Adversary Adaptability Across Multiple AI Ecosystems

The security community has noted a significant trend of adversaries exhibiting high adaptability, specifically by testing and integrating multiple commercial AI models into their routines, a form of “model hopping”.

The Emerging Trend of Threat Actors Hopping Between Different Commercial AI Models

Threat actors appear to understand that relying on a single AI vendor creates a single point of failure for their operations. OpenAI’s October 2025 report detailed an influence operation that used ChatGPT to generate video prompts intended for subsequent use with other AI models. Google’s tracking of Gemini misuse likewise observed actors testing different models for different parts of their workflow; in one reported instance, a group used ChatGPT while researching automation via DeepSeek.

Techniques Observed for Evading Detection Mechanisms Through Incremental Model Prompting

Evading detection is often achieved through subtle, iterative prompting. For cyber operations, this involved using temporary accounts for single, incremental code tweaks. Actors also use “social engineering pretexts,” such as posing as a Capture-the-Flag (CTF) participant to persuade a model like Gemini to provide exploitation guidance after initial safety guardrails were triggered. Furthermore, adaptive attacks, which iteratively refine their approach based on prior failures, have reportedly shown success rates above 90% in bypassing published model defenses across multiple systems.

Investigating the Use of AI for Planning and Documentation, Even When Model Execution Was Avoided

Adversaries are using LLMs not just to produce operational output but for the intellectual labor underpinning operations. Even when actors avoid asking a model for directly deployable output (such as finished malware), they use it for planning, documentation, and research, such as drafting proposals for covert interference campaigns or debugging complex software architectures. This reliance on AI for strategic tasks that produce no executable artifact remains difficult for traditional usage-policy enforcement to cover completely.

OpenAI’s Evolving Threat Intelligence and Response Protocols

OpenAI’s response to these escalating threats has necessitated a continuous refinement of its internal detection and external collaboration methodologies.

The Methodologies Employed for Detection, Including Behavioral Analysis and External Collaboration

Detection relies on sophisticated methodologies that look beyond the surface-level request. OpenAI employs **behavioral analysis** to cluster related accounts, even when they use different languages or ostensibly serve different purposes (e.g., Rybar’s main site vs. unrelated social media fronts). Furthermore, **external collaboration** with industry partners is a key component, allowing for the sharing of indicators that help limit the wider distribution of content or the re-establishment of malicious infrastructure across different services. The company also launched initiatives like Trusted Access for Cyber, an identity-verified framework relying on advanced models like GPT-5.3-Codex, to harness AI specifically for defense.

The Rationale for Account Termination and the Preservation of Threat Artifacts for Industry Sharing

Account termination is the immediate consequence of policy violations, as seen with the Russian networks. The rationale extends beyond immediate harm prevention: the investigation behind each ban documents the associated threat artifacts (prompts, generated content, and operational patterns), which can then be shared with industry peers. This shared intelligence improves collective defenses against novel techniques, such as the evasion tactics seen in the cyber operations.
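To make the idea of a shareable threat artifact more concrete, here is a minimal sketch of what such a record might look like. Everything in it is an assumption for illustration: the `ThreatArtifact` fields, the `make_artifact` helper, and the choice to share a SHA-256 digest rather than raw content are hypothetical and do not reflect OpenAI’s internal formats or any industry standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ThreatArtifact:
    """Hypothetical record for one piece of evidence tied to an operation."""
    operation: str        # reported operation name
    kind: str             # "prompt", "generated_content", or "pattern"
    summary: str          # analyst-written description, safe to share
    content_sha256: str   # digest of the raw content, not the content itself
    tags: list = field(default_factory=list)


def make_artifact(operation, kind, summary, raw_content, tags=()):
    """Build a record, hashing the raw content so it need not be shared."""
    digest = hashlib.sha256(raw_content.encode("utf-8")).hexdigest()
    return ThreatArtifact(operation, kind, summary, digest, sorted(tags))


def to_json(artifacts):
    """Serialize records to a stable, human-readable JSON document."""
    return json.dumps([asdict(a) for a in artifacts], indent=2, sort_keys=True)


if __name__ == "__main__":
    record = make_artifact(
        operation="ScopeCreep",
        kind="pattern",
        summary="Single-use accounts, one code refinement per account",
        raw_content="placeholder for the underlying evidence",
        tags=["opsec", "accounts"],
    )
    print(to_json([record]))
```

Sharing a digest lets a partner check whether they hold the same content without the raw prompt or output leaving the originating platform. Whether that trade-off suits a given sharing arrangement is a policy decision the sketch does not address.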

Assessing the Impact Mitigation Achieved Through Swift Intervention in Early-Stage Threats

A recurring theme in enforcement reports from 2025 is the success of early intervention. In cases like “ScopeCreep,” the disruption of OpenAI accounts allegedly led to the takedown of the malicious code repository before the malware achieved widespread distribution. This swift action in the “early stages” of the threat development cycle is presented as evidence that monitoring the AI usage pipeline can neutralize threats before they fully mature into high-impact compromises.

Far-Reaching Consequences for the Future of Secure AI Deployment

The continuous, documented abuse of commercial LLMs by state actors forces a re-evaluation of how these powerful technologies are developed, governed, and deployed.

Implications for Model Safety Guardrails Against State-Level Exploitation

The persistent success actors have in bypassing guardrails—whether through social engineering prompts (like the CTF pretexts) or iterative refinement—highlights that current safety mechanisms are insufficient against dedicated, well-resourced state actors. This necessitates a move toward more resilient, perhaps context-aware, safety architectures that are continuously hardened against adaptive, multi-model attacks.

The Evolving Role of AI Providers as De Facto Digital Security Gatekeepers

As state-level actors increasingly rely on commercial platforms for operational efficiency, AI providers are evolving into *de facto* digital security gatekeepers. Their usage policies, enforcement actions, and threat intelligence sharing now form a critical, non-traditional layer of national and international cybersecurity defense. This role places immense pressure on these companies to balance open innovation with robust, real-time defensive posture against nation-state adversaries.

Broader Considerations Regarding the Blurring Lines Between Cybercrime, Espionage, and Content Generation

The incidents discussed reveal a profound blurring of lines: state-aligned operations (like those attributed to the Russian groups) use the same content generation tools as overt propaganda outlets, and cyber-offensive capabilities refined with AI (like ScopeCreep) are increasingly hard to distinguish from organized cybercrime. The trend indicates that future security strategies must account for actors who use AI simultaneously for financial scams, ideological influence, and intelligence gathering, all drawing on a shared technological toolset.

*Note on Sora: Mention of Sora videos, a generative AI tool for video creation, in context with the Rybar operation underscores the rapid incorporation of multimodal AI into influence operations, as reported in February 2026.*