The Great Divide: Market Exuberance Versus The “Compounding” Counter-Argument

The specter of the late 1990s looms large. Concerns over market froth are not just whispers in the hallway; they are coming from some of the most serious financial institutions in the world. The Bank of England has sounded a clear alarm, stating that on many measures equity valuations appear stretched, drawing direct comparisons to the peak of the dot-com bubble. The danger, as they see it, is that this soaring optimism about AI’s potential could lead to a “sudden correction” if expectations around capability or user adoption are not met. Even more worryingly, much of this buildout is debt-financed, adding a layer of credit risk to the equation.

Even the titans of finance are weighing in. Jamie Dimon, CEO of JPMorgan Chase & Co., has cautioned investors that some current lending practices resemble the environment before the 2008 crisis, and has separately warned about AI-driven job disruption. Yet for every warning of a speculative bubble, there is a powerful counter-argument, one that champions the very nature of this investment: it compounds.

This is the core philosophical defense: traditional infrastructure—a bigger factory, a better highway—can be built, become obsolete, and then decay. But the invention of self-improving intelligence? That, proponents argue, cannot be undone. The knowledge and capability *compound* upon themselves exponentially. To them, the multi-trillion-dollar projected compute spending isn’t speculation; it’s necessary seed capital for an asset that grows in value and utility every day it’s trained.
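The contrast the proponents draw can be put in numbers with a minimal sketch. The starting value of 100 and the 10% annual rates below are hypothetical, chosen only to show the shape of the argument; they are not estimates of any real asset.

```python
def value_after(initial: float, annual_rate: float, years: int) -> float:
    """Value after compounding at annual_rate for the given number of years.

    A negative rate models an asset that decays instead of growing.
    """
    return initial * (1.0 + annual_rate) ** years


# Hypothetical inputs: same starting value, opposite annual rates.
decaying_asset = value_after(100.0, -0.10, 5)    # e.g. a factory losing 10% a year
compounding_asset = value_after(100.0, 0.10, 5)  # an asset gaining 10% a year

print(f"decaying:    {decaying_asset:.2f}")
print(f"compounding: {compounding_asset:.2f}")
```

Whether AI capability actually behaves like the second line rather than the first is exactly what the two camps disagree about; the sketch only shows how far apart the outcomes drift under each assumption.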

Fiscal Prudence Enters the Chat: The Leader’s Own Recalibration

Even the champions of the AI race are showing signs of fiscal awareness, a sign that they too recognize the gravity of the capital requirements. The most telling evidence of this shift is found within OpenAI’s own infrastructure planning. Not long ago, the company was circulating projections for compute spending nearing an eye-watering $1.4 trillion by 2030. That figure rattled markets, suggesting infrastructure ambitions were wildly outrunning realistic revenue forecasts.

However, as part of its massive new funding round negotiations, OpenAI has revised this down significantly. The new target is roughly $600 billion in total compute spending by 2030. This 57% reduction is a direct acknowledgment of the market’s concern, a strategic move to align capital outlay more closely with its now-strengthened revenue path.
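The 57% figure follows from the two projections quoted in the text. A minimal sketch of the arithmetic, using only the $1.4 trillion and $600 billion figures (the function and variable names are illustrative):

```python
def percent_reduction(old: float, new: float) -> float:
    """Return the percentage drop from old to new."""
    if old <= 0:
        raise ValueError("old must be positive")
    return (old - new) / old * 100.0


# Figures from the text, expressed in billions of US dollars.
previous_projection_bn = 1400.0
revised_projection_bn = 600.0

cut = percent_reduction(previous_projection_bn, revised_projection_bn)
print(f"Reduction: {cut:.1f}%")  # Reduction: 57.1%
```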

Think about the scale: $600 billion is still an astronomical figure, dwarfing the annual capital expenditures of most major tech firms combined. But by grounding the projection, OpenAI provides a crucial signal: the industry recognizes that while the potential is limitless, the path to profitability requires a managed burn rate. This recalibration buys time—time for its infrastructure partners to see returns and time for the market to digest the sheer scale of this necessary expenditure.

The Trillion-Dollar Tightrope: Capital Requirements vs. Revenue Trajectories

The core financial challenge for the leading AI labs is the relentless gap between required consumption and realized income. It’s a high-wire act where infrastructure commitments must be honored long before the end-user revenue fully materializes.

Let’s look at the numbers driving this tension, based on the latest figures circulating in early 2026:

The infusion of capital from the recent funding rounds—a deal that could top $110 billion—has served its primary purpose: it has managed the near-term risk. It pushed the projected point of financial exhaustion from a more immediate threat (perhaps 2027) to a manageable 2030 horizon. This is the essence of the deals we are seeing: locking in capacity now to guarantee access later, banking on that “compounding” growth to service the debt incurred today. If you’re interested in how capital markets handle this kind of futuristic financing, you might want to review our analysis on strategies.

The New Ecosystem: Scenarios for Amazon, Microsoft, and OpenAI Post-Restructuring

The recent sequence of interlocking deals—involving Amazon’s massive investment, the deepening of the Microsoft partnership, and the introduction of new infrastructure players—has functionally restructured the AI landscape. Success is no longer measured by who has the best model, but by who can best leverage their unique position within this complex, symbiotic structure. It is a high-stakes game of resource management, contractual leverage, and distributed dependency.

Defining Victory: Measuring the Return on a Complex Alliance

For these three entities, the next five years will be a series of performance reviews based on execution within this new architecture. The success metrics are highly specific and interdependent.

Amazon Web Services (AWS): The Hardware Validation

Amazon’s massive $50 billion investment wasn’t just about gaining equity; it was about locking in compute usage and validating its in-house silicon strategy. AWS’s success will hinge on three measurable points:

Microsoft: The Software Moat

Microsoft’s position is rooted in exclusivity and integration—it’s less about raw compute capacity and more about sticky software adoption. Their primary measure of success is straightforward:

OpenAI: Profitable Scale

For OpenAI, the path is arguably the clearest but most difficult: survival through growth.

The key insight here is the intentional, complex splitting of training (heavily leveraging AWS Trainium) and inference/stateless API calls (locked into Azure). This choreography is designed to give both Amazon and Microsoft major returns while keeping OpenAI from being entirely dependent on one provider, a brilliant defensive strategy against any single point of failure or price hike.

The Final Arbiters: Consumer Adoption and Enterprise Agent Success

All the financial engineering, all the infrastructure contracts worth hundreds of billions of dollars, ultimately hinge on something intangible: whether end-users actually change their behavior. The success of this trillion-dollar industrial alignment is being tested right now, not in boardrooms, but in the daily workflows of businesses and consumers.

The Consumer Juggernaut vs. The Agentic Future

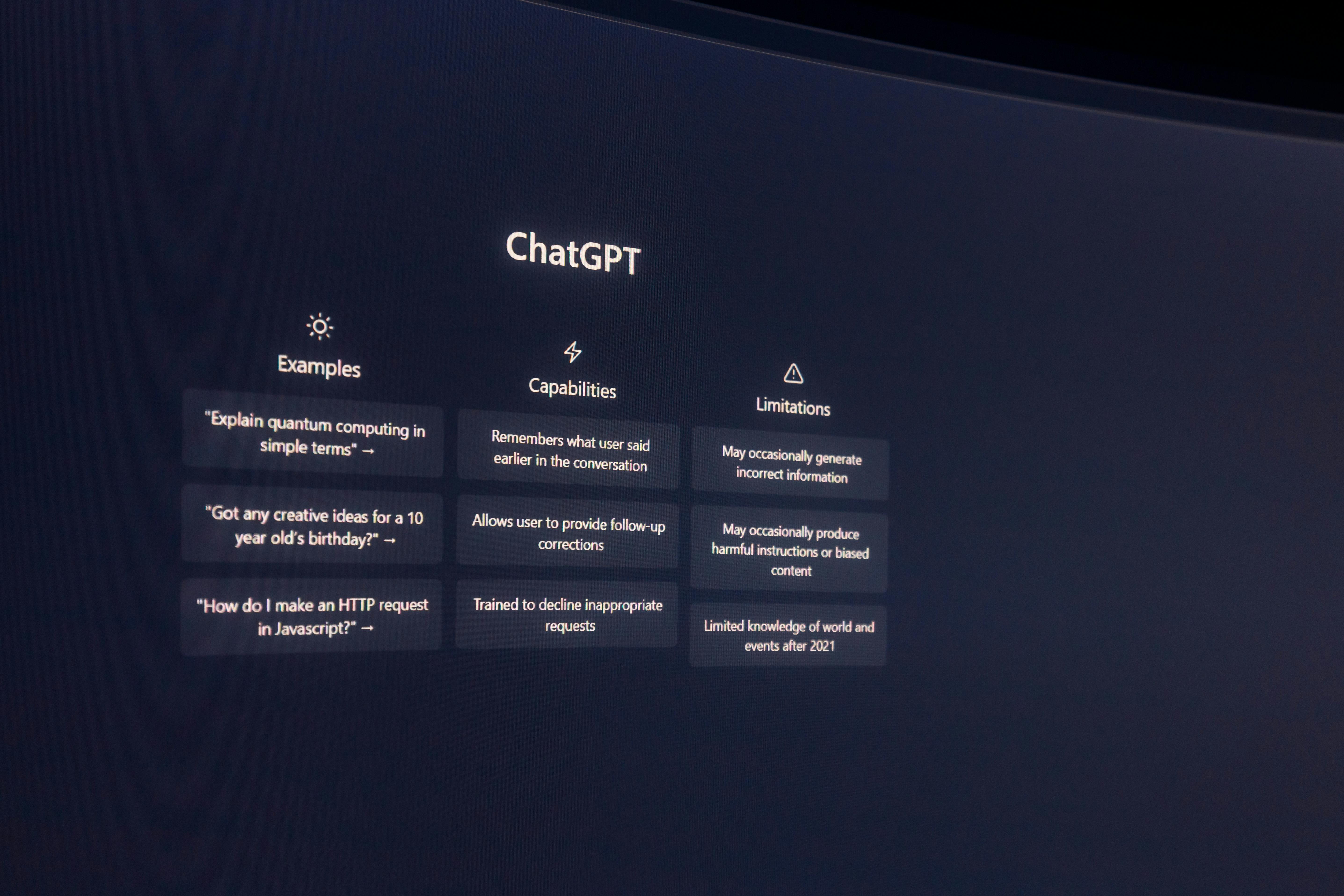

The adoption of products like ChatGPT, built on foundation models, has already set records. It’s a phenomenal consumer product, driving significant subscription revenue. But that is the *first wave*. The real transformation, and the justification for the current CapEx, lies in the second wave: Enterprise Agent Technology.

This next-generation tech moves beyond simple chat. We are talking about context-aware, autonomous “agents” that can execute multi-step business processes—managing supply chains, auditing contracts, or running complex software environments without constant human prompting. This is the technology Amazon is fundamentally banking on distributing via Frontier.

Consider this practical implication:

If these agents successfully integrate into core business logic and deliver demonstrable productivity gains that *exceed* the cost of the compute required to run them, the entire economic thesis holds. If the agents fail to move past the novelty phase—if they are too brittle, or if the productivity gains are hard to quantify—then the market may indeed deem this an over-leveraged bubble, regardless of the compound nature of the underlying intelligence.
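The test described here reduces to a break-even comparison: does the measured productivity gain exceed the compute cost of running the agent? A rough sketch of that check follows. The `AgentDeployment` type and every dollar figure are hypothetical and purely illustrative; no real deployment data is implied.

```python
from dataclasses import dataclass


@dataclass
class AgentDeployment:
    """Hypothetical monthly figures for one agent deployment."""
    productivity_gain_usd: float  # measured value of the work the agent delivers
    compute_cost_usd: float       # compute spend required to run the agent


def net_value(d: AgentDeployment) -> float:
    """Productivity gain minus compute cost for the period."""
    return d.productivity_gain_usd - d.compute_cost_usd


def thesis_holds(d: AgentDeployment) -> bool:
    """True only when gains strictly exceed the cost of compute."""
    return net_value(d) > 0


# Made-up numbers for two contrasting cases.
durable = AgentDeployment(productivity_gain_usd=12_000.0, compute_cost_usd=7_500.0)
novelty = AgentDeployment(productivity_gain_usd=3_000.0, compute_cost_usd=7_500.0)

for name, d in (("durable", durable), ("novelty", novelty)):
    print(name, net_value(d), thesis_holds(d))
```

The hard part in practice is the first field: if productivity gains cannot be measured with any confidence, the comparison cannot be run at all, which is the "hard to quantify" failure mode the paragraph warns about.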

Actionable Takeaway for Tech Leaders and Investors

The key to navigating the next few years isn’t just picking the cloud provider; it’s assessing the application layer. Look past the CapEx announcements and ask these questions about any AI project:

Conclusion: The Performance Review Commences

The structural reset is complete. The complex choreography involving Amazon’s capital and silicon commitment, Microsoft’s exclusive API gateway, and OpenAI’s aggressive scaling plan has culminated in a new set of rules for the digital economy. We are operating in an environment where a single company’s projected spending ($600B by 2030) is on par with the *total* capital expenditures of the other hyperscalers combined for a shorter period.

The global economy is now deeply invested in the idea that AI is fundamentally different—that the technology is not just a product to be sold, but an infrastructure to be built upon, capable of compounding returns that justify the initial, immense outlay. The Bank of England and others are right to be cautious; when you price the market for perfection, any stumble is magnified. But the sheer scale of the deals shows that the biggest players believe the compound growth will outpace the debt load, making the risk acceptable.

The era of massive, pre-monetization capital infusion is winding down. The infusion bought the time needed for the next phase. The performance review—the moment where usage data, agent success rates, and realized gross margins are weighed against the hundreds of billions spent—is now officially commencing across the entire digital landscape. The next three years will tell us whether this was the greatest gamble in technology history or simply the necessary down payment on the future.

What do you think? Has the market been overly cautious about the debt load, or are we seeing a classic bubble forming despite the “compounding” argument? Share your thoughts in the comments below—we need all perspectives for this one!