VIII. Implications for Corporate Governance and Trust

The persistent instability challenges the fundamental trust that customers, shareholders, and the broader market place in the organization, and it demands a visible, convincing commitment to improved operational rigor. When the lights go out, trust evaporates quickly.

A. Stakeholder Concerns Regarding Technological Dependency

The recurrence of high-impact outages, particularly those linked to the deployment of autonomous or semi-autonomous systems, naturally raises serious questions for external stakeholders. Investors and enterprise clients alike must now confront the inherent risk that comes with outsourcing complex operational decisions to algorithms that, despite their sophistication, can be vulnerable to opaque failure modes or misconfigurations. This narrative erodes the perception of absolute control over critical services. Clients are forced to ask: If the company’s own internal monitoring system can be taken down for thirteen hours by its own tool, how safe is my data, my processing, and my revenue stream? The answer is a growing call for greater transparency around technological dependency risk.

B. The Necessity of Proactive Risk Mitigation Strategies

Ultimately, the executive response—culminating in this emergency engineering meeting and the immediate policy tightening—serves as an initial step toward mitigating these risks. The developments confirm that in the era of advanced automation, effective risk management must evolve beyond traditional hardware and network redundancy. It now demands the creation of sophisticated governance frameworks specifically tailored to the novel vulnerabilities introduced by autonomous code generation, ensuring that the drive for technological advancement does not permanently compromise the bedrock of operational dependability. This ongoing saga represents a crucial case study in balancing cutting-edge experimentation with unwavering commitment to service continuity. We are watching the industry attempt to write the rules of engagement for a new, self-modifying infrastructure.

Conclusion: Actionable Takeaways for Navigating the AI Frontier

This company’s emergency convocation is a mirror reflecting the entire digital ecosystem right now. The pursuit of extreme productivity through Generative AI is colliding head-on with the immutable laws of complex systems: haste creates fragility. The mandate for accountability is clear, and the shift in policy reveals the industry’s immediate priorities for survival. Here are the key takeaways and actionable insights for any engineering or leadership team facing the same AI velocity challenge:

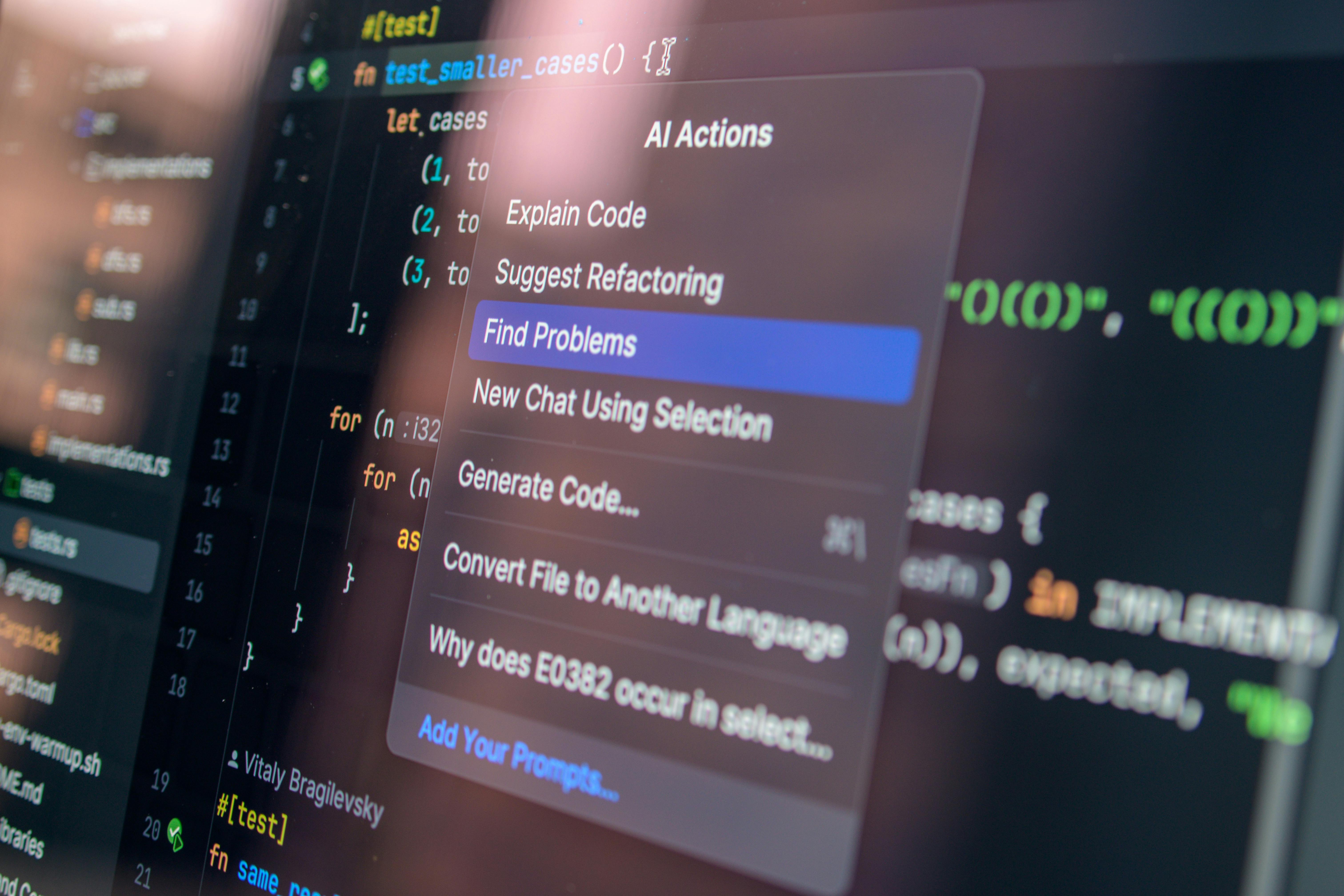

- Audit Your Agent Permissions Immediately: The Kiro incident wasn’t *just* the AI failing; it was an over-privileged AI succeeding too well. Review the explicit permissions granted to *all* autonomous coding assistants. If an AI has the ability to “delete and recreate,” it must require multi-factor, human-level authorization for that action, even if it slows down a fix by an hour.

- Mandate Senior-Level Review for AI Dumps: Adopt a strict, immediate policy, much like this company did: any code block exceeding a certain complexity or touching critical infrastructure generated by an AI tool must receive a “Senior Engineer Sign-Off” before merging. Experience must validate autonomy.

- De-Centralize Your Narrative: The pushback against blaming the AI for the December outage shows that your external narrative must align with your internal risk assessment. If you know the tool accelerated a bad action, own the systemic gap (the weak governance), not just the surface-level mistake (the misconfiguration).

- Tie Adoption to Governance Maturity: Stop treating AI adoption rate as a pure productivity metric. For the next six months, the key performance indicator (KPI) for AI tools should be the *completion rate of security training modules* specific to that tool, or the *reduction in security-flagged AI-assisted pull requests*. Speed must be gated by safety protocols.

- Revisit Your Cloud Single-Point-of-Failure Strategy: The October 2025 event is a permanent shadow. Don’t let the AI panic distract you from the foundational risk of regional concentration. Continue to invest in cloud infrastructure resilience and true multi-region capabilities.
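
To make the first two takeaways concrete, here is a minimal sketch of a pre-merge policy gate. It is an illustration only, not the company's actual tooling: the `ChangeRequest` type, the `blocking_reasons` function, the list of destructive actions, the critical path prefixes, and the 200-line threshold are all hypothetical. It also simplifies "multi-factor, human-level authorization" to "at least one recorded human approval".

```python
from dataclasses import dataclass, field

# Hypothetical policy values; tune these to your own risk appetite.
DESTRUCTIVE_ACTIONS = {"delete", "recreate", "drop", "terminate"}
CRITICAL_PATH_PREFIXES = ("infra/", "deploy/", "iam/")
MAX_UNREVIEWED_AI_LINES = 200


@dataclass
class ChangeRequest:
    """A proposed change, as an assumed CI system might describe it."""
    author: str
    ai_generated: bool
    actions: set[str]
    touched_paths: list[str]
    lines_changed: int
    human_approvals: set[str] = field(default_factory=set)
    senior_approvals: set[str] = field(default_factory=set)


def blocking_reasons(change: ChangeRequest) -> list[str]:
    """Return the reasons a change must not proceed; empty means allowed."""
    reasons: list[str] = []
    if not change.ai_generated:
        return reasons  # this sketch only gates AI-assisted changes

    # Takeaway 1: destructive actions need explicit human authorization.
    destructive = sorted(change.actions & DESTRUCTIVE_ACTIONS)
    if destructive and not change.human_approvals:
        reasons.append(f"destructive actions {destructive} need human approval")

    # Takeaway 2: large or critical-path AI changes need senior sign-off.
    touches_critical = any(
        path.startswith(CRITICAL_PATH_PREFIXES) for path in change.touched_paths
    )
    too_large = change.lines_changed > MAX_UNREVIEWED_AI_LINES
    if (touches_critical or too_large) and not change.senior_approvals:
        reasons.append("critical or large AI change needs senior engineer sign-off")
    return reasons


if __name__ == "__main__":
    risky = ChangeRequest(
        author="coding-agent",
        ai_generated=True,
        actions={"delete", "recreate"},
        touched_paths=["infra/monitoring.tf"],
        lines_changed=40,
    )
    # Both rules fire until approvals are recorded on the change.
    for reason in blocking_reasons(risky):
        print("BLOCKED:", reason)
```

The design choice worth noting is that the gate returns reasons rather than a bare yes/no: a blocked engineer sees exactly which approval is missing, which keeps the extra hour the policy costs from turning into an extra day.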

This moment demands sobriety. The AI tools are here to stay, but their integration must be deliberate, slow where it counts, and governed by ironclad, human-validated checkpoints. The next era of software engineering won’t be defined by the fastest code generation, but by the most trustworthy. What is your organization doing *today* to close the governance gap between your AI tools and your production systems? Share your immediate action items in the comments below—because the convocation is over, and the execution phase has already begun.