Tangible Impact: Transforming the Generative AI Ecosystem

The immediate beneficiaries of this integrated solution are the users of generative AI services. The focus shifts from merely *having* access to powerful models to *using* them fluidly and at scale. The practical, day-to-day difference for developers and end-users is where the true ROI of this technical feat lies.

Enhancing Real-Time Applications and Developer Experience

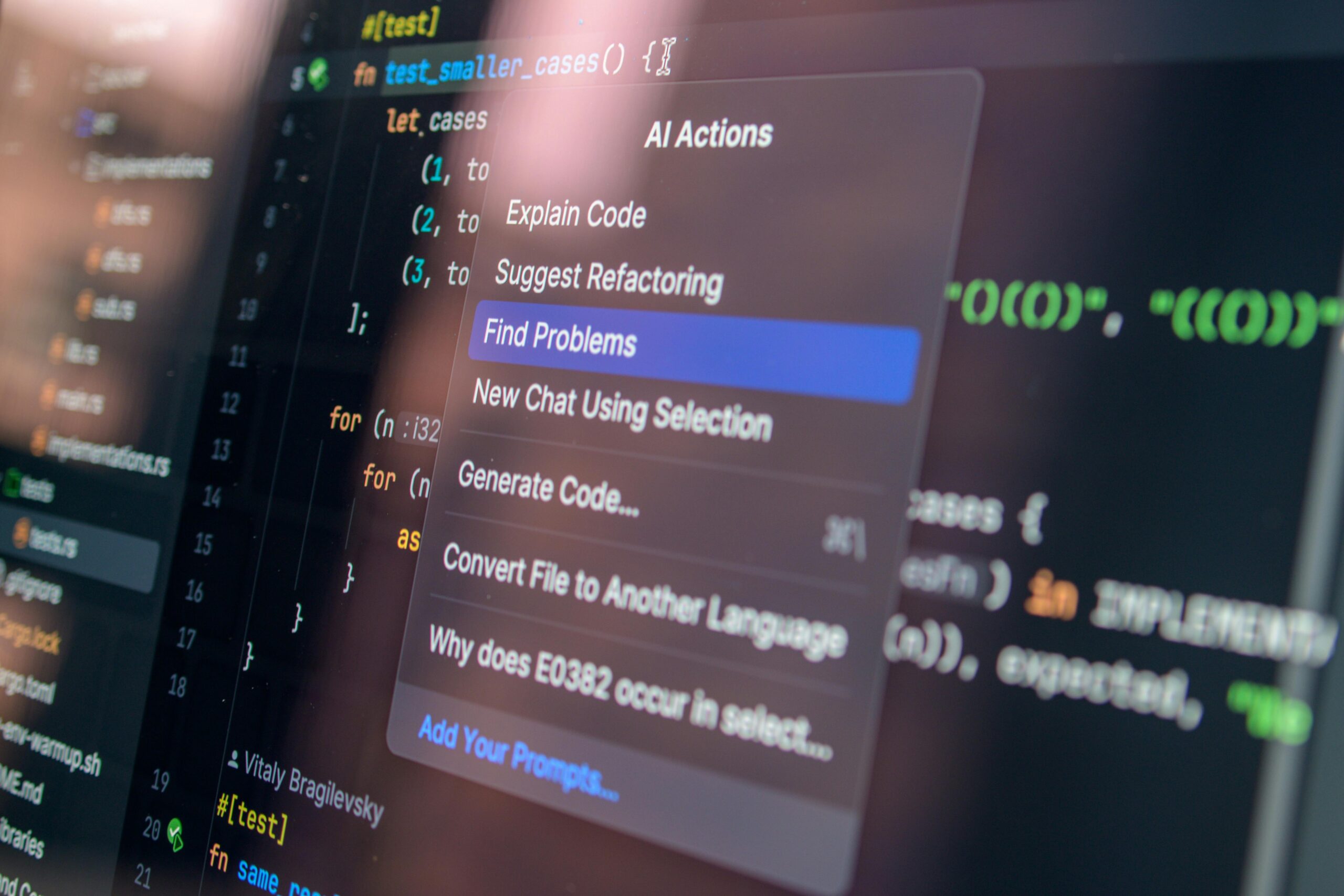

The primary practical benefit is the transformation of latency-sensitive applications. Think about your own workflows. Use cases such as real-time coding assistance, which requires near-instantaneous context analysis and suggestion generation, or highly interactive, multi-turn conversational agents, suddenly become much more feasible and effective at scale. A reduction in perceived lag—that awkward pause where the user wonders if the system hung—directly translates to a better user experience, higher adoption rates, and greater value extraction from your deployed models.

Here are the tangible gains for developers:

- Focus on Complexity, Not Brevity: Developers can stop optimizing prompts for minimal token count just to reduce waiting time and instead focus on the complexity and utility of the task the model is solving.

- True Interactivity: Conversational AI becomes genuinely conversational, able to process long contexts and respond instantly, mimicking human-speed dialogue.. Find out more about Cerebras CS-3 Trainium disaggregated inference model.

- Faster Iteration Cycles: When testing new models or parameter sets, reduced inference time means developers get feedback faster, accelerating their overall development lifecycle.

- Justifying Capex: Performance gains this large make multi-million dollar cloud contracts significantly more economical over time, shifting the discussion from ‘cost’ to ‘efficiency.’

- Market Share: By becoming the first major cloud provider to offer Cerebras’s disaggregated solution, AWS secures a powerful, exclusive performance edge in the market.

- Investor Confidence: Cerebras’s recent $23 billion valuation shows the market is rewarding firms that solve difficult, specialized problems, validating the entire ecosystem of differentiated AI semiconductor firms.

- Expect a New Baseline: The promise of “order of magnitude” faster inference will quickly become the new expectation for any new production-grade generative AI application.

- Embrace Disaggregation: Future performance gains will likely come from splitting workloads across specialized hardware, not from single, monolithic chips.

- Look to Bedrock: Access is managed through Amazon Bedrock. Start prototyping now to understand the latency differences your current models exhibit on this new path.

- Watch the Roadmap: The commitment to rolling out open-source LLMs and integration with Amazon Nova later this year means the performance ceiling is only going up.

This infrastructure upgrade means the underlying computing power can now gracefully handle the computational demand, shifting the focus from performance limitation to creative application development. If you want to see how this level of speed is being leveraged, look into the latest advancements in real-time financial modeling, which is another sector that heavily benefits from EFA’s low-latency communication capabilities.

Expanding Access Through Amazon Bedrock

The delivery mechanism through Amazon Bedrock is perhaps the most critical component for ecosystem democratization. Bedrock serves as a unified, managed service layer, expertly abstracting away the complexities of managing specialized hardware clusters. By deploying the Trainium-CS-3 solution here, AWS allows any organization with an AWS account to provision the world’s fastest inference capabilities for their chosen models without needing deep expertise in hardware orchestration or specialized cluster management.

This move significantly lowers the barrier to entry for high-performance generative AI. It allows businesses of all sizes to skip the years-long capital expenditure cycle required for on-premises, custom-built AI farms and instead tap into this speed on a consumption basis. This democratizes the cutting edge. If you’re interested in how to manage the models within this platform, an understanding of cloud infrastructure security is paramount, as the Nitro System foundation ensures the same isolation customers expect from any other AWS service.

Future Trajectory: Beyond Today’s Breakthrough. Find out more about Cerebras CS-3 Trainium disaggregated inference model guide.

The announcement today is framed not as a static product launch but as the foundational layer for a much broader, more deeply integrated set of services slated for unveiling over the coming calendar year. This signals a long-term, strategic commitment from both companies to co-develop the next generation of cloud-native AI infrastructure. The roadmap points toward even deeper specialization and integration with Amazon’s internal silicon pipeline.

Planned Rollout of Open-Source Models on New Hardware

A key development planned for later in 2026 involves the expansion of the service catalog. AWS has committed to offering leading open-source large language models running directly atop this newly integrated Cerebras-powered infrastructure. This is strategically vital. It allows the community and enterprises that prefer the flexibility and transparency of open models to access the same industry-leading inference speeds previously reserved for proprietary systems. By enabling these open models on the disaggregated stack, AWS makes a powerful statement about supporting diverse AI strategies while simultaneously anchoring innovation within its own cloud ecosystem.

Integration with Amazon Nova’s Next-Generation Components

The real long-term vision, however, involves fusing this partnership with Amazon’s internal chip development. The future roadmap explicitly includes the integration of Amazon Nova hardware with the Cerebras systems. Amazon Nova represents the company’s own suite of custom AI silicon, designed for various stages of the AI lifecycle. The planned fusion of Nova components with Cerebras’s CS-3 architecture suggests a future where AWS can offer even more granular specialization, potentially creating bespoke inference pathways that are even more efficient than the initial Trainium-CS-3 pairing. For instance, reports from earlier this year indicated the existence of advanced Nova 2 models with ‘Extended Thinking’ capabilities, and integrating this reasoning power with the WSE-3’s massive decode engine could unlock performance levels we can only theorize about today. This speaks to a continuous co-evolution of Amazon’s proprietary chip development and its strategic external partnerships, aiming to maintain a technological lead in cloud compute performance.

Broader Economic and Sectoral Implications

The magnitude of this deal and its technical novelty cannot be viewed in isolation. It is a significant event that forces a reassessment of capital allocation, competitive positioning, and the overall pace of technological progression within the global economy. This is about more than just faster chatbots; it’s about accelerating enterprise modernization itself.. Find out more about Cerebras CS-3 Trainium disaggregated inference model tips.

The Evolving Investment Narrative for Hyperscalers

For investors tracking the technology sector, this partnership directly influences the investment narrative surrounding the major cloud providers. Capital expenditure (capex) on AI infrastructure is a major point of debate, with concerns often raised about the sheer cost. However, a demonstrable return on that investment, delivered through a clear, measurable performance advantage like “an order of magnitude faster inference,” justifies the expenditure. This deal provides a powerful counterpoint to concerns about undifferentiated spending, showcasing how strategic, high-leverage partnerships can translate massive infrastructure investment into a superior, marketable service offering, thereby driving customer acquisition and retention for the cloud giant.

Think about the numbers:

Potential Shift in Enterprise AI Adoption Rates

The speed and accessibility of this solution have the potential to accelerate the timeline for enterprise-wide AI adoption across the board. Many businesses have been hesitant to commit to large-scale, real-time AI projects due to two primary concerns: operational costs associated with high latency, or the enormous capital outlay required for specialized, on-premises hardware.

By providing an immediately available, consumption-based service that breaks the inference speed barrier, AWS effectively removes one of the final major technical hurdles to widespread, mission-critical deployment of generative AI across various industries, from finance to healthcare. If your compliance department has been stalling a project because the model’s response time was unacceptable for customer-facing roles, this new capability changes the conversation from “if” to “when.”

Leadership Commentary and Vision for Accelerated AI

The strategic intent behind this monumental effort is best summarized by the executives driving these initiatives, whose statements underline a shared vision for the future of artificial intelligence interaction: utility over novelty.

Executive Insights on Solving the Latency Challenge

The focus from the leadership of Cerebras has been laser-sharp on the delivery of tangible customer value. Andrew Feldman, Founder and CEO of Cerebras Systems, articulated that inference is the stage where AI finally delivers its real-world utility to customers, and speed remains the foremost obstacle for demanding, interactive workloads. By architecting this solution—allowing each specialized system to perform the task it is architecturally best suited for—the combined result is an unparalleled level of speed and performance in the cloud, accessible to a global constituency of users.. Find out more about Elastic Fabric Adapter AWS Nitro System linkage insights.

The Enterprise Imperative for Blisteringly Fast Compute

The overarching theme articulated by the partnership is the democratization of blisteringly fast AI capability. The goal extends beyond merely matching competitors; it is about setting a new, significantly higher benchmark for performance in the cloud. Feldman’s commitment is clear: “Every enterprise around the world will be able to benefit from blisteringly fast inference within their existing AWS environment”.

This is more than a technical achievement; it is an economic one. It signifies that the cutting edge of AI compute performance is no longer reserved for the very largest research labs but is being packaged and delivered as a utility service to the entirety of the enterprise market. This development—the integration of custom silicon like Trainium with disruptive external silicon like the CS-3—is a defining element of the current technological narrative, confirming that the next wave of productivity gains will be driven by specialized, high-speed infrastructure.

Conclusion: Actionable Takeaways from the Inference Leap

What you need to walk away with today, March 13, 2026, is that the infrastructure for next-generation generative AI has just been fundamentally upgraded. The architecture splitting the prefill and decode stages, linked by EFA, is not an academic exercise; it is a deployment designed to deliver immediate, measurable gains.

Key Takeaways for Architects and Developers. Find out more about Wafer-Scale Engine for low latency AI decoding insights guide.

The future of AI isn’t just about bigger models; it’s about deploying them so quickly that they feel instantaneous. This AWS-Cerebras coupling has just delivered the required plumbing. Don’t let your competitors be the first to reap the rewards of this speed advantage.

What complex, latency-sensitive AI task in your organization is now feasible because of this speed breakthrough? Share your thoughts in the comments below—the conversation around true, real-time AI performance has just begun!

![OpenAI mission betrayal lawsuit Musk: Complete Guide [2026] OpenAI mission betrayal lawsuit Musk: Complete Guide [2026]](https://tkly.com/wp-content/uploads/2026/03/d97587d3bae1f7ff1cebe275b2cb1bffd9438622-150x150.png)