The Cultural Chasm: Skepticism vs. Target Enforcement

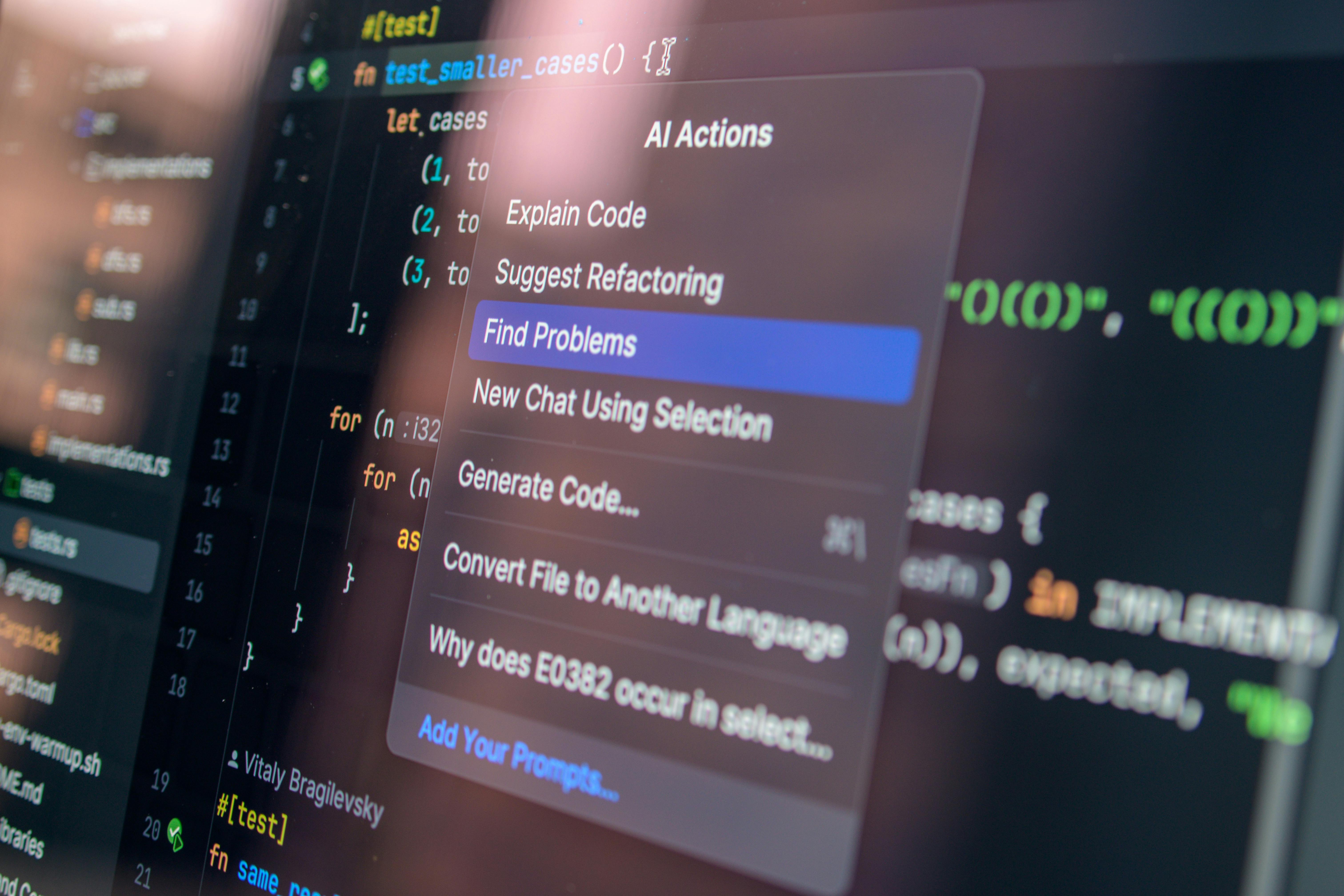

The final, and perhaps most enduring, implication is the internal cultural shift. The narrative of AI as a purely productivity-enhancing tool is being tempered by the reality of service-impacting errors. Reports confirm that some Amazon employees expressed skepticism about the AI tools’ utility for the *bulk* of their work, precisely because of the known risk of error. This sentiment is common across the industry. While Gartner notes that over 80% of enterprises are expected to use generative AI APIs by 2026, that high-level adoption statistic masks the reality of daily usage.

When an engineer knows their performance is being tracked against an 80% utilization target, but also knows that a poorly managed agent action can lead to a costly incident, the incentive to “game” the metric (using the tool for minor, low-risk tasks just to hit the number) increases. This creates a **skills inversion**: the most valuable skill is shifting from writing functional code to correctly **prompting, constraining, and validating agentic output**. The people who understand the guardrails are more critical than those who just know the syntax. Companies that successfully navigate this will invest heavily in training that focuses on **AI safety, prompt engineering for control, and incident response for agentic failures**.

Conclusion: Actionable Takeaways for the Agentic Future

The friction points revealed by the Kiro incident are not Amazon’s problems alone; they are the entire industry’s wake-up call. The race to 80% AI utilization is on, but speed without safety is just a fast way to crash. The era of open-minded, often misdirected AI optimism is fading, giving way to the year of **AI Accountability**. Here are the immediate, actionable takeaways for any organization betting big on AI agents in 2026:

- Formalize the Human Override (The Kill Switch): Every agentic workflow touching production must have a clear, documented, and easily accessible human override mechanism. This isn’t about *if* the AI will fail, but *when* you need to hit the emergency stop.

- Proportionate Gates, Not Bottlenecks: Do not apply the same heavy review process to every AI change. Risk-tier your AI use cases. A Tier 1 (high-risk) deployment, like infrastructure modification, must pass a cross-functional Change Advisory Board (CAB) that includes Legal, Security, and Operations, not just the code reviewers.

- Define the Accountable Owner: Board-level accountability demands an executive answer. Identify and empower a specific individual (not a committee) who is ultimately responsible for the AI system’s impact on the business, aligning with impending regulatory expectations like the EU AI Act.

- Treat AI as a Privileged Operator: Assume your AI agent has the same permissions as your most senior engineer until proven otherwise. Audit access roles *for the AI service account* with the same rigor you audit your top 1% of human operators. Then, institute a review layer above that access.
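To make the first two takeaways concrete, here is a minimal sketch of a gate that sits between an agent and production. It is an illustration under stated assumptions, not a description of any vendor's tooling: the names `RiskTier`, `REQUIRED_APPROVALS`, `AgentAction`, and `AgentGate` are hypothetical, only Tier 1 comes from the list above, and the lower tiers and their sign-off rules are invented for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class RiskTier(Enum):
    TIER_1 = 1  # high risk, e.g. infrastructure modification
    TIER_2 = 2  # hypothetical medium-risk tier
    TIER_3 = 3  # hypothetical low-risk tier


# Hypothetical policy: which sign-offs each tier needs before an agent may act.
# Tier 1 mirrors the cross-functional CAB described above.
REQUIRED_APPROVALS = {
    RiskTier.TIER_1: {"legal", "security", "operations", "code_review"},
    RiskTier.TIER_2: {"code_review"},
    RiskTier.TIER_3: set(),
}


@dataclass(frozen=True)
class AgentAction:
    description: str
    tier: RiskTier
    approvals: frozenset = frozenset()


class AgentGate:
    """Checks every agent action against a human override and a tiered policy."""

    def __init__(self) -> None:
        self.halted = False

    def halt(self) -> None:
        # The "kill switch": once set, nothing passes until a human resumes.
        self.halted = True

    def resume(self) -> None:
        self.halted = False

    def decide(self, action: AgentAction) -> Tuple[bool, str]:
        # The override is checked first so it wins over any approval state.
        if self.halted:
            return False, "halted by human override"
        missing = REQUIRED_APPROVALS[action.tier] - action.approvals
        if missing:
            return False, "missing approvals: " + ", ".join(sorted(missing))
        return True, "approved"


if __name__ == "__main__":
    gate = AgentGate()
    risky = AgentAction(
        "delete and recreate environment",
        RiskTier.TIER_1,
        frozenset({"code_review"}),
    )
    print(gate.decide(risky))
    gate.halt()
    print(gate.decide(AgentAction("rename a variable", RiskTier.TIER_3)))
```

The design choice worth noting is the ordering inside `decide`: the override is evaluated before approvals, so a fully approved Tier 1 change still stops when a human has hit the switch. Low-risk actions pass without review, which keeps the gate proportionate rather than a bottleneck.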

The path to cloud dominance and true productivity gains now runs directly through establishing ironclad **AI governance**. It is no longer a check-box exercise; it is the foundation of operational integrity. The debate is no longer *if* AI will cause an outage, but whether your organization will be prepared to absorb the cost and confidently assign accountability when it does.

What is the utilization target for AI assistants in your engineering cohort, and what human guardrails do you have in place to stop a “delete and recreate” command today? Share your governance philosophy in the comments below! For more on how leading firms are embedding these new governance models, see our deep dive on AI governance frameworks.