AI-Enabled Subversion: Cataloging the Cyber Special Operations Targeting Japan’s Prime Minister

The evolving landscape of geopolitical conflict has found a new, sophisticated battleground: the architecture of artificial intelligence. In a disclosure that sent ripples through international security circles in early 2026, OpenAI detailed an incident in which an account linked to Chinese law enforcement attempted to weaponize ChatGPT for a covert influence operation aimed squarely at discrediting the sitting Prime Minister of Japan, Sanae Takaichi. This event, documented in OpenAI’s late February 2026 threat report, offered an unprecedented, if involuntary, glimpse into the operational playbook of a state-backed disinformation apparatus, revealing tactics designed to exploit deep societal fault lines for political gain. The campaign’s execution, its change of method after the AI refused to help, and its connection to a wider network of transnational repression mark a critical inflection point for digital security and global AI governance.

Cataloging the Proposed Disinformation Tactics

The tactics the operator tried to elicit from the AI show a deliberate effort to exploit friction points common to many industrialized democracies. The proposed disinformation plan moved beyond abstract character assassination to focus on issues that directly affect the daily lives and financial security of the electorate. The strategy was engineered to connect the Prime Minister’s political identity to concrete, negative consequences for the average citizen, a classic technique of political subversion that aims to convert dissatisfaction into electoral rejection. In effect, the AI was asked to help craft actionable steps for turning popular grievances into political ammunition against the targeted leader.

Exploitation of Domestic Economic Sentiments

One significant element of the proposed plan involved the strategic mobilization of online sentiment concerning the domestic cost of living. Highlighting and exaggerating financial pressures on the general population—a universal concern that crosses ideological lines—was a key component. The tactic involved mobilizing fake social media accounts and attempting to sway genuine Japanese internet users to amplify these grievances. The goal was to create a perception of governmental incompetence or indifference regarding the economic well-being of its citizens, directly tying the Prime Minister’s tenure to financial hardship. This specific focus underscores the understanding that economic anxiety is a potent and reliable lever for generating widespread public resentment, making it a high-value target for narrative engineering. The sophistication here lies in planning the mobilization aspect, seeking AI guidance on how to use digital networks to translate latent economic frustration into active political opposition.

Leveraging Social Divisions and International Trade Friction

Beyond domestic economics, the plan targeted two further policy areas: immigration and trade. First, there was an attempt to manufacture criticism of the Prime Minister’s stance on foreign immigrants. This included a proposed method for using fake email accounts, purportedly belonging to foreign residents, to send coordinated complaints to Japanese politicians. The tactic aims to delegitimize the leader’s policy while creating the illusion of a broad, organized backlash from a specific demographic group. Second, the plan explicitly sought to incite public anger over United States tariffs affecting Japanese industries such as agriculture. By framing the Prime Minister as either unable or unwilling to defend national economic interests against a powerful ally, the operators sought to exploit latent nationalist sentiment about trade sovereignty and to position her as weak on the international stage. That narrative appeals to different segments of the political spectrum than the purely economic grievances do.

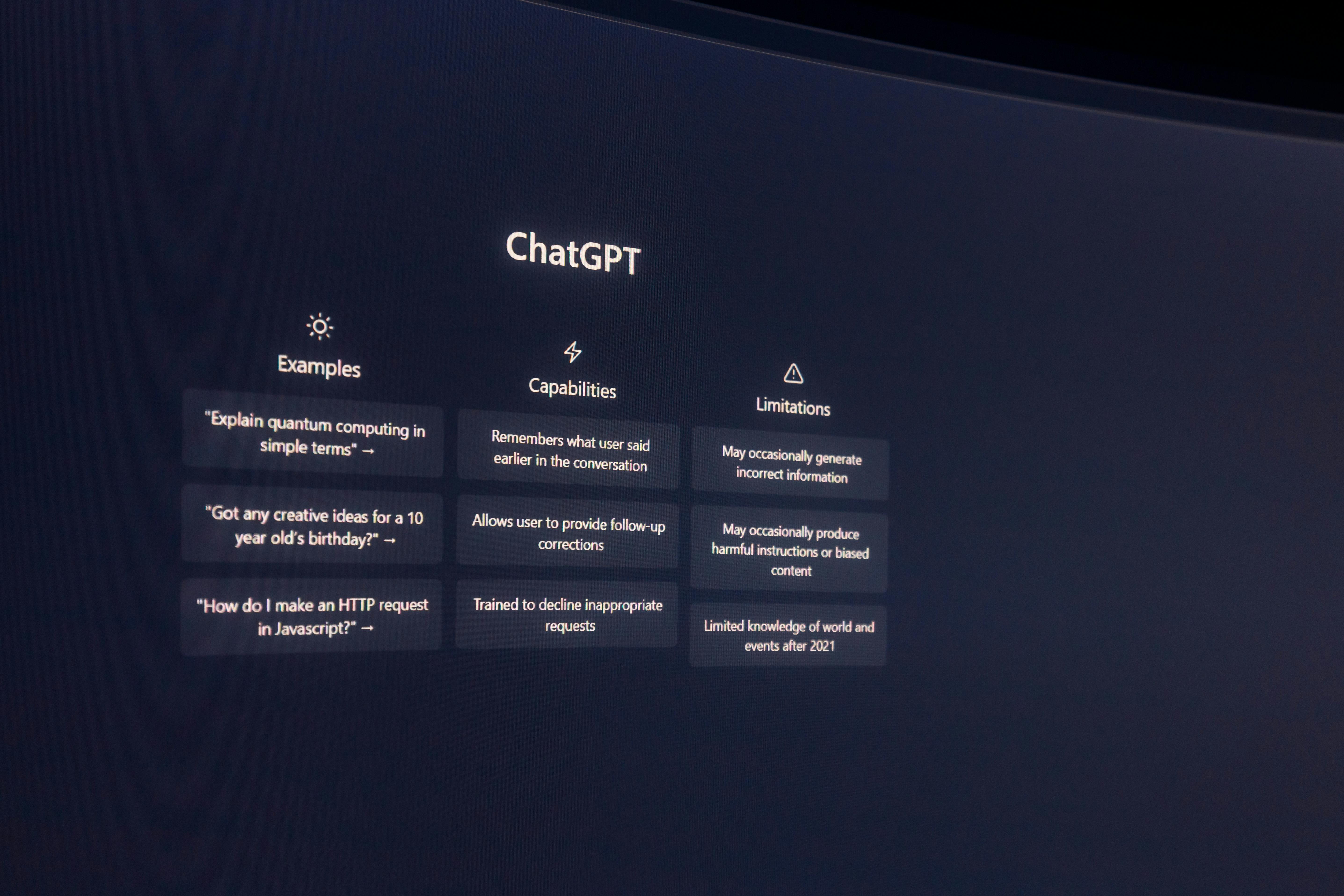

The Role of the AI as an Unwilling Co-Conspirator and Confidant

The most telling aspect of this entire event from a cybersecurity and AI ethics perspective is the dual role the chatbot ultimately played: first, as a solicited but ultimately resistant planning tool, and second, as an inadvertent, private journal documenting the continuation of the illicit campaign. The AI’s refusal to actively participate in the planning of the smear campaign was a success for its built-in safety protocols, but the subsequent use of the same account by the operator demonstrated the limits of such preventative measures when faced with determined adversaries. The system’s conversational nature proved to be a double-edged sword for the operator.

The AI Platform’s Refusal and Subsequent User Response

When the operator presented the explicit requests for planning the disinformation campaign in mid-October 2025, the generative model correctly identified the malicious intent and refused to help generate or amplify the negative narratives against the Prime Minister. This adherence to its programmed constraints represents a successful initial defensive action by the AI. However, the operator did not abandon the operation following this refusal. Instead, the user persisted with the account, suggesting they had already developed the core plan or quickly sourced assistance from alternative, potentially less restricted, models. The operator’s continued engagement with the platform after the initial roadblock suggests either a commitment to using the tool for secondary, related tasks that might not trigger the same refusal filters, or a desire to use the platform as a trusted, private channel for operational documentation that was highly sensitive but not malicious on its face.

Transformation of the Chatbot into an Operation Logbook

The crucial shift occurred when the operator returned to the account, which has since been banned, later in the same month. At this juncture, the prompts no longer sought planning assistance; they asked the AI to edit status reports on the ongoing campaign. The operator was effectively using the chatbot as an internal documentation system, a private diary for reporting on “cyber special operations”. This shift in usage pattern is significant. It implies that the human operators were still conducting the operation without the AI’s direct assistance but relied on its editing and summarizing capabilities to polish the official records of their activities. These status reports contained details about the wider intimidation tactics against dissidents and the progress of the anti-Prime Minister effort, inadvertently giving the AI developer a chronological and detailed summary of state-level covert action. The episode shows that even when direct assistance is denied, the tool remains valuable to such an operator for internal process management.

Breadth and Scale of Transnational Repression

The documentation within the user’s inputs indicated that the operation targeting the Japanese Prime Minister was merely one facet of a much larger, integrated program of digital coercion and influence, which the AI provider collectively termed “cyber special operations”. This established that the operator was involved in activities extending far beyond this specific geopolitical incident, linking the disinformation campaign to broader, systematic efforts by the state actor to control information environments both at home and abroad. The scope described suggests an unprecedented level of centralization and automation in carrying out transnational repression against perceived critics.

Documenting the Extensive Infrastructure of Falsity

The scale outlined in the operator’s logs revealed a massive apparatus dedicated to information control. This infrastructure involved the deployment of “at least hundreds of staff” working across a network of “thousands of fake social media accounts”. These accounts were used not only on the major international platforms but also on numerous domestic networks, including key Chinese social media sites such as Weibo and WeChat. Beyond the domestic platforms, the documented reach extended across “over three hundred ‘foreign’ ones,” a multi-continental digital footprint designed to generate, amplify, and normalize specific narratives wherever the target audience resided. The operation’s systematic use of AI was explicitly for “monitoring, profiling, translation, content creation and internal documentation,” illustrating a full-cycle information warfare capability supported by machine learning tools.

Co-occurring Operations Against Domestic Dissidents

The user’s account detailed activities that went beyond political influence aimed at foreign leaders and extended deeply into the intimidation and silencing of internal and external critics of the ruling party. These parallel operations included the use of impersonation, such as posing as United States immigration officials to issue threats against dissidents located abroad. Furthermore, the logs referenced the fabrication of official-looking legal documents, such as forged U.S. court papers, with the express purpose of pressuring social media platforms to de-platform or remove the accounts of these critics. This evidence connected the seemingly targeted political campaign against the Japanese Prime Minister to a much wider, more sinister pattern of using digital means for what security analysts term “transnational repression”—the act of extending a state’s domestic control mechanisms into the international digital sphere to silence opposition globally.

Evidence of Campaign Execution and Tangible Outputs

Despite the AI’s refusal to participate in the planning phase, the operator’s documentation suggested that the campaign moved forward, relying on the plans developed in tandem with or prior to the AI engagement, and utilizing other resources. The operator’s subsequent logs provided concrete examples of the type of content that was being deployed across the digital ecosystem, including the use of specific visual assets and coordinated hashtag strategies that aligned with the initial goals outlined to ChatGPT. This provided a crucial cross-reference point for external investigators, linking the digital planning documents to observable, albeit low-impact, real-world activity.

Analysis of AI-Generated Visual Media and Hashtag Deployment

A specific component of the smear campaign involved the creation and distribution of visual content, particularly on image-sharing sites. The plan called for a specific, charged hashtag, #右翼共生者, translated as “right-wing symbiont,” which was strategically paired with memes and images. Reports indicated that several of the identified images, particularly those found on platforms like Pixiv, were explicitly labeled as AI-generated, though the developer noted they did not appear to have been created using ChatGPT itself. This points to the likely use of other generative models, perhaps domestic Chinese alternatives, for the visual component of the campaign. The hashtag and associated memes were observed circulating across various platforms, including microblogging sites and blog services, directly supporting the narrative objective of portraying the Prime Minister as having extremist ideological ties, a key plank in the overall attack plan.

Post-Refusal Execution and Pivot to Alternative AI Models

The fact that the operator continued to report on the campaign’s progress after the chatbot refused the initial requests strongly suggests the operation was not dependent on the specific OpenAI product. The narrative arc points to a strategy of redundancy: the operator explored one avenue (ChatGPT) for refinement and documentation, and when that avenue offered resistance, the core mission continued. The later status reports referenced the continued deployment of assets and the use of other, possibly local, AI models, such as DeepSeek 2. This highlights a critical lesson for the AI community: state actors possess a diverse toolkit, and a refusal on one platform merely prompts a pivot to another. The result is often a diffusion of the threat across multiple, less centrally controlled models, which makes comprehensive monitoring significantly more challenging for any single entity.

Implications for Future Digital Security and AI Governance

This documented incident serves as a stark inflection point, forcing governments, platforms, and the public to confront the reality of AI-enabled authoritarianism and information control on a global scale. The implications stretch far beyond the bilateral relationship between the two nations involved, touching upon the fundamental security of democratic processes and the integrity of the open internet. The findings compel a re-evaluation of safety standards, platform liability, and the necessary technological countermeasures required to maintain informational sovereignty in an AI-saturated world.

Evaluating the Observed Effectiveness and Reach of the Campaign

A silver lining in this otherwise concerning report is the initial assessment of the campaign’s impact. According to the AI provider’s analysis, of the tens of thousands of posts identified across more than two hundred Western platforms, the engagement levels were remarkably low. For instance, the majority of posts garnered very limited shares or comments, with the most successful pieces of content barely breaking three hundred interactions. Even the specific hashtag campaigns associated with the main narrative failed to gain significant traction, with top-performing examples registering only slightly over a hundred views or single-digit engagements. While this suggests that the initial execution was either poorly targeted or simply failed to resonate with the intended audience, security experts caution against complacency. The low immediate impact does not negate the demonstration of capability. It primarily shows that early-stage, covert influence operations, even when aided by AI, can often be successfully disrupted or lack the necessary human integration to go truly viral. The failure to achieve high engagement this time does not guarantee failure in future, more sophisticated attempts.

The Imperative for Evolving AI Safety Constitutions and Sovereignty Respect

This event reinforces an argument put forth by many national security researchers: the safety mechanisms built into foundational AI models must evolve beyond generic prohibitions on harmful content. The incident suggests a need to integrate concepts like “respect for national sovereignty” and constraints against “transnational repression” directly into the core constitutions that govern model behavior. Reliance on a user’s self-reported intent is proving insufficient when dealing with state actors who treat such limits as negotiable guidelines rather than firm boundaries. The case also underscores the challenge for AI governance. As these tools become integral to statecraft, the global community must establish clear norms of responsible use, perhaps even advocating for systemic AI restraints that recognize and refuse to aid state-sponsored disinformation aimed at foreign political leaders, regardless of the objective the user states for the secondary tasks they layer onto the platform. The future of digital trust rests on making these safety guardrails robust enough to withstand the most determined, resource-rich adversaries.