The Unseen Backbone: Why High-Speed Networking is the New Performance Gatekeeper

Imagine trying to run a world-class symphony orchestra with thousands of musicians, but the conductor’s instructions take several seconds to travel from one end of the hall to the other. The musicians—the powerful processors—would stand idle, waiting for instruction, rendering their incredible talent useless. This is the reality of an improperly networked AI cluster. Modern AI development is not about one server doing one calculation; it is about thousands of specialized accelerators working in lockstep, sharing intermediate results, model weights, and gradients in real-time. This parallel processing demands near-instantaneous communication—a requirement that has made the underlying networking fabric the single most likely point of failure or, conversely, the single greatest source of leverage for investors.

The older model, relying on specialized interconnects like InfiniBand (in practice sourced largely from a single vendor), certainly had its time in the sun, especially for smaller, tightly controlled environments. But the sheer scale and economic realities faced by the hyperscalers (Alphabet, Amazon, Meta, and Microsoft) are driving a clear, structural shift toward open standards that offer superior flexibility and vendor choice. The market is rapidly consolidating around high-speed, advanced Ethernet optimized specifically for these AI workloads.

The Ethernet Takeover: 800G and Beyond in AI Racks

The most compelling evidence for this shift is in the numbers for the physical network gear itself. IDC reports that the data center portion of the Ethernet switch market experienced an astounding 62% year-over-year growth in Q3 2025, a direct result of these accelerating AI deployments. This growth isn’t coming from general-purpose office networking; it’s driven by the highest-speed ports. Specifically, revenues for 800GbE switches surged 91.6% sequentially, and the combined revenues for 200/400 GbE switches were up nearly 98% year-over-year. Why such aggressive speeds?

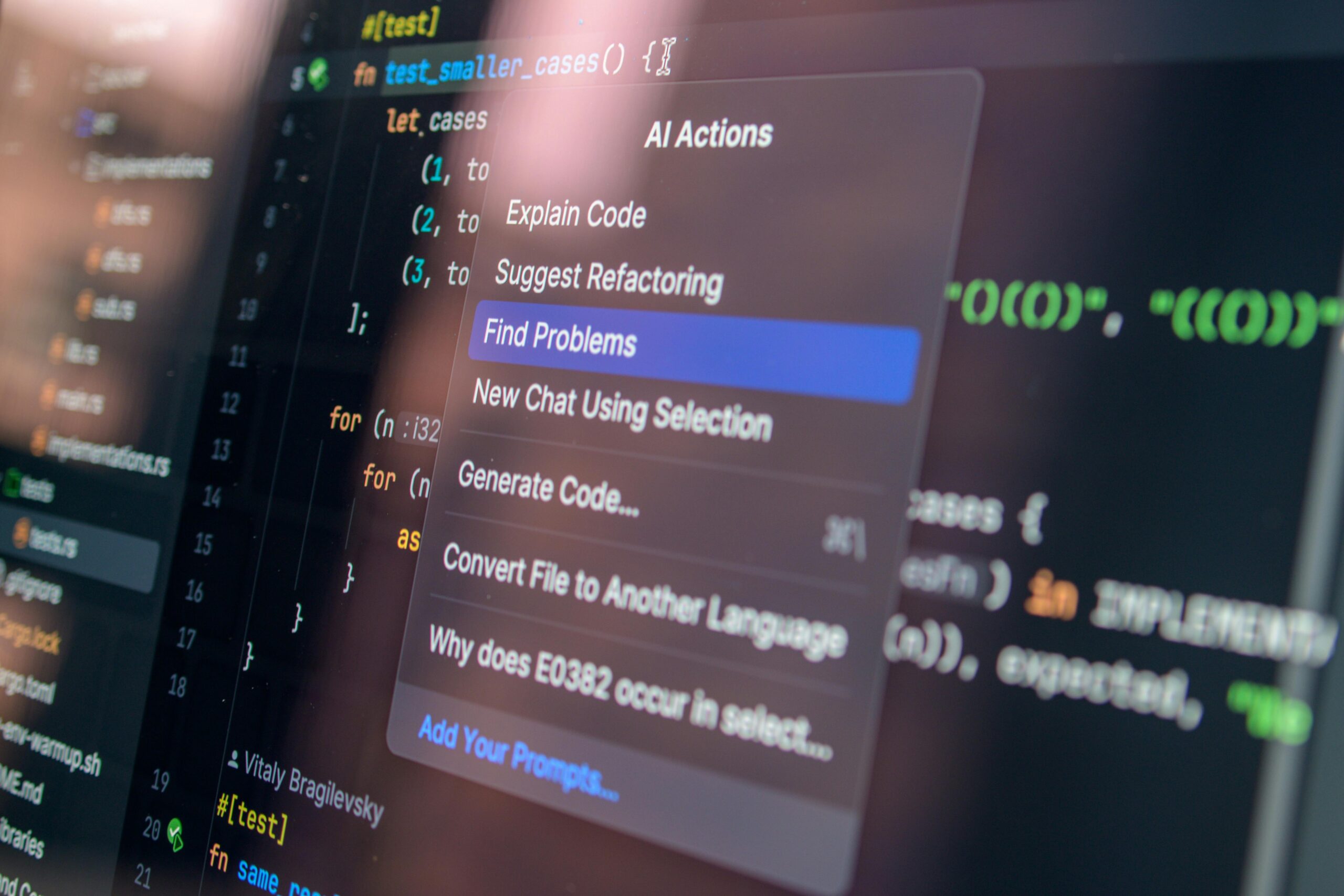

In 2026, we are seeing the widespread arrival of “AI rack systems,” where an entire rack of GPUs or custom XPU accelerators connects internally using these Ethernet switches for scale-up operations. This intra-rack communication is where latency kills performance, and the adoption of open-standard, high-speed Ethernet over proprietary alternatives is a direct strategy by cloud giants to prevent vendor lock-in while maintaining performance advantages. Companies that specialize in designing and manufacturing ultra-fast, low-latency Ethernet switching silicon and platforms are directly positioned to benefit from every dollar the hyperscalers spend on network gear for their next-generation facilities.
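To make the link between port speed and idle accelerators concrete, here is a minimal sketch of the bandwidth term of a ring all-reduce, a common way to exchange gradients between devices. The 10 GB gradient payload and the 8-device group are hypothetical values chosen for illustration, and the model deliberately ignores latency, protocol overhead, and any overlap of communication with compute.

```python
def ring_allreduce_seconds(payload_bytes: float, num_devices: int, link_gbps: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce.

    Each device sends and receives 2 * (N - 1) / N times the payload.
    Latency, protocol overhead, and compute overlap are ignored.
    """
    if num_devices < 2:
        return 0.0
    link_bytes_per_s = link_gbps * 1e9 / 8  # gigabits per second -> bytes per second
    traffic_bytes = 2 * (num_devices - 1) / num_devices * payload_bytes
    return traffic_bytes / link_bytes_per_s


GRADIENT_BYTES = 10e9  # hypothetical: 10 GB of gradients per training step
NUM_DEVICES = 8        # hypothetical: accelerators in one all-reduce group

for gbps in (100, 400, 800):
    seconds = ring_allreduce_seconds(GRADIENT_BYTES, NUM_DEVICES, gbps)
    print(f"{gbps} GbE: {seconds:.3f} s per all-reduce")
```

Under these assumptions the exchange takes about 1.4 s on a 100 GbE link and about 0.175 s on an 800 GbE link, time the accelerators would otherwise spend waiting.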

These networking specialists are not just selling hardware; they are selling the translation layer that makes billions in core processor investment actually work. If you want to capitalize on the ongoing AI infrastructure supercycle, you must look at the companies laying the digital railway tracks. Their growth is a high-leverage play because the cost of their components, while significant, is a fraction of the total system cost, yet they dictate the overall operational capability of the entire cluster. This is a foundational investment in the digital rails of the future.

For further reading on the broader trends shaping the global network, you can explore current perspectives on AI cluster performance metrics to see how network efficiency translates into training time savings.

The Software-Defined Fabric: SONiC and Ecosystem Integration

The complexity is not just in the physical speed; it’s in the control plane. The adoption of open-source networking operating systems like SONiC (Software for Open Networking in the Cloud) is key here. Alan Weckel of 650 Group forecasts that SONiC-based data center switching revenue will surge past \$5 billion in 2026, driven heavily by Microsoft Azure and Google Cloud. SONiC excels in these Ethernet-based AI backends because it supports the massive port speeds (800G and even 1.6T port growth is on the horizon) while integrating advanced features like EVPN/VXLAN and telemetry for optimization.

This ecosystem approach—integrating networking silicon from one provider, operating systems from a consortium, and management from the cloud giant—is a deliberate strategy to diversify risk and maximize performance. The critical lesson for investors here is that success in networking isn’t about the purest silicon anymore; it’s about deep, seamless integration with the top accelerator architectures, providing that reliable, high-throughput communication channel without imposing a single vendor’s toll on the entire system. The companies mastering this open, high-speed integration are the ones collecting the toll on the most critical digital roads being built today.

The Indispensable Role of High Bandwidth Memory Solutions: Powering the Parameter Explosion

If the network is the highway, then High Bandwidth Memory (HBM) is the massive, multi-lane interchange that gets data directly into the processor’s on-ramp at blistering speed. A GPU, no matter how many trillions of calculations it can perform per second, is rendered tragically slow if it spends most of its time waiting for the data to arrive from off-chip memory. The sheer size and complexity of modern language models—those boasting trillions of parameters—means they require an unprecedented volume of data to be fed to the compute cores continuously and immediately.

This is why HBM isn’t just a faster RAM stick; it is a fundamental architectural shift. By vertically stacking DRAM chips and connecting them via incredibly short, wide electrical pathways (the TSVs, or Through-Silicon Vias), HBM dramatically reduces the physical distance data must travel. The result is a massive increase in data throughput and superior power efficiency compared to traditional memory modules, two factors that are paramount when scaling to tens of thousands of accelerators, where wasted energy becomes an enormous operational cost.
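The "waiting for data" problem can be sketched with a simple roofline-style bound: usable compute is the smaller of the chip's peak rate and what the memory system can feed it. The peak rate, bandwidths, and arithmetic intensity below are hypothetical round numbers, not specifications of any real accelerator or HBM generation.

```python
def attainable_flops(peak_flops: float, mem_bytes_per_s: float, flops_per_byte: float) -> float:
    """Roofline bound: throughput is capped by the compute units or by the memory feed."""
    return min(peak_flops, mem_bytes_per_s * flops_per_byte)


PEAK = 1e15        # hypothetical accelerator: 1,000 TFLOP/s peak compute
INTENSITY = 100.0  # hypothetical workload: 100 FLOPs per byte read from memory

for label, bandwidth in (("1 TB/s memory", 1e12), ("4 TB/s memory", 4e12)):
    achieved = attainable_flops(PEAK, bandwidth, INTENSITY)
    print(f"{label}: {achieved / PEAK:.0%} of peak compute usable")
```

With these assumed numbers, quadrupling memory bandwidth lifts usable compute from 10% to 40% of peak without touching the processor itself, which is the sense in which memory bandwidth gates the value of the compute investment.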

The HBM Supercycle and Market Leadership in 2026

The memory segment, powered almost entirely by this AI demand, is currently experiencing a structural “supercycle.” Market research firms and investment banks are calling this out in early 2026. Some estimates suggest the total memory market size could exceed \$440 billion for the year. The HBM segment itself is the undisputed driver, with significant growth forecasts. BofA estimates the HBM market alone will reach \$54.6 billion in 2026, marking a staggering 58% increase from the prior year.
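As a quick sanity check on the HBM figures quoted above, the short calculation below backs out the prior-year market size implied by the \$54.6 billion estimate and the 58% growth rate. It assumes both numbers refer to the same market definition, which the source does not spell out.

```python
hbm_2026_billion = 54.6  # 2026 estimate quoted above (USD billions)
growth = 0.58            # year-over-year increase quoted above

# Work backwards: 2026 = 2025 * (1 + growth)
implied_2025_billion = hbm_2026_billion / (1 + growth)
added_billion = hbm_2026_billion - implied_2025_billion

print(f"Implied 2025 HBM market: {implied_2025_billion:.1f} billion USD")
print(f"Incremental revenue in 2026: {added_billion:.1f} billion USD")
```

On those inputs, the implied 2025 base is roughly 34.6 billion USD, meaning about 20 billion USD of new HBM revenue in a single year.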

The competitive landscape within HBM is fascinating. While there is fierce competition—Samsung aims to surpass a 30% market share in 2026, and Micron is gaining ground—a clear leader has established a near-term moat. As of early 2026, industry consensus points to SK hynix maintaining its dominant position in the current flagship, HBM3E, which is slated to account for about two-thirds of total HBM shipments this year. Furthermore, the leadership is expected to carry forward into the next-generation architecture, HBM4, with UBS predicting SK hynix will secure approximately a 70% market share for the HBM4 destined for NVIDIA’s next-generation Rubin platform in 2026.

The growth is directly tied to the complexity of the workloads. Goldman Sachs forecasts that HBM demand specifically for custom-ordered, ASIC-based AI chips will skyrocket by 82%, capturing a massive portion of the market. This signals that as the central chips diversify beyond standard GPUs, the specialized memory attached to them becomes even more critical, entrenching the revenue streams for the dominant HBM suppliers.

Actionable Insight: Don’t Wait for HBM4 Uniformity

While new players and next-gen products like HBM4 will bring increased competition later in 2026, possibly leading to pricing corrections on HBM3E, the short-term view is overwhelmingly positive for established leaders due to technical barriers to entry. The investment thesis here is straightforward: every increase in the number of AI accelerators deployed by hyperscalers directly maps to an increase in the volume and dollar value of HBM required. For every new data center or AI training cluster being brought online, these memory companies are receiving a guaranteed revenue uplift based on the fundamental physics of the machines being built. For a deeper dive into the broader supply chain, you might want to review the latest reports on semiconductor market trends, which show the memory segment outpacing other areas.

The Trillion-Dollar Commitment: Hyperscaler CAPEX and Its Ripple Effect

The story of AI infrastructure in 2026 is incomplete without confronting the sheer scale of the capital expenditure (CAPEX) being deployed by the gatekeepers of the digital world. We are past the phase where these expenditures were merely large; they are now tectonic. According to recent Bloomberg calculations, the four largest tech giants (Alphabet, Amazon, Meta, and Microsoft) are projected to spend a combined \$625 billion to \$650 billion on new data centers and AI hardware this year alone. Amazon leads with a projected \$200 billion, followed by Alphabet at \$185 billion, Meta at \$135 billion, and Microsoft at \$105 billion.
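The per-company projections can be tallied directly to see how they relate to the combined range. The sketch below uses only the four figures quoted above; the variable names are illustrative.

```python
# Projected 2026 CAPEX in USD billions, as quoted in the text
capex_billion = {"Amazon": 200, "Alphabet": 185, "Meta": 135, "Microsoft": 105}

total = sum(capex_billion.values())
print(f"Combined: {total} billion USD")

for company, amount in capex_billion.items():
    print(f"{company}: {amount / total:.1%} of the combined figure")
```

The four projections sum to 625 billion USD, the lower end of the quoted range, with Amazon alone accounting for roughly a third of it.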

To put this number into perspective, the combined 2026 CAPEX for these four firms dwarfs the total projected spending of 21 leading US industrial firms—including automakers and defense contractors—combined. This money isn’t being held in reserve; it’s being deployed feverishly to build out the physical and digital rails necessary to dominate the generative AI market. McKinsey predicts that global demand for data center capacity could nearly triple by 2030, with about 70% of that demand explicitly coming from AI workloads.

The Investor’s Paradox: Spending Surge vs. Share Price Pause

Herein lies the paradox that creates an investment opportunity. Despite this overwhelming evidence of commitment (an outlay of up to \$650 billion signaling absolute belief in the technology’s future), investors have shown skepticism, leading to volatility and momentary pauses in the stock prices of the central chip providers themselves. The market is asking: “Can the incremental profits justify this eye-watering investment?” This uncertainty is not a signal to retreat; it’s a signal to pivot.

When the primary spenders are in a near-term sentiment trough, the direct beneficiaries who are simply fulfilling the purchase orders become the safer, higher-leverage bet. The companies manufacturing the high-speed networking switches, the specialized HBM stacks, the advanced cooling systems, and the power infrastructure are *guaranteed* to see revenue based on the purchase orders already placed against those \$650 billion budgets. They don’t need to win the AI *application* war; they just need to supply the *tools* for that war, which are being bought irrespective of which hyperscaler ultimately prevails in the software race.

The smart money is moving capital away from the highly-valued “application layer” software that merely wraps AI around old user interfaces and rerouting it into infrastructure at a record pace. This is the “Physical Pivot” mentioned by analysts—a structural redesign of the technology stack favoring the providers of the tangible, scarce resources needed for computation. This trend is visible across the board, from data center construction growth projections to the massive investments in power grid capacity, treating “power capacity” as the new “user growth” metric. To understand how this CAPEX trickles down through the entire industry, review our analysis on high-performance computing resource allocation.

Case Study: The Beneficiaries of Imminent Capacity

Consider the following tangible benefits derived from this CAPEX commitment, which form the core of this less-discussed investment thesis:

The argument isn’t that the chip designers aren’t valuable; it’s that the guys selling the shovels, who are also seeing their prices rise due to the scarcity of high-end components, often offer a more asymmetric risk/reward profile when the primary spenders are facing valuation scrutiny.

Concluding Outlook and Investor Posture for 2026

As of February 2026, the primary theme under review, AI infrastructure, appears to be at a crucial juncture: the market has momentarily paused to digest past gains, while the fundamental drivers for future, sustained expansion remain firmly in place and may even be accelerating due to external macro factors. The sheer commitment from the hyperscalers confirms the underlying demand is structural, not cyclical in the traditional sense. This pause is less a crack in the foundation and more a collective breath being taken before the next, more focused leg of the investment supercycle begins. The market is no longer rewarding *any* company with AI in its press release; it is now relentlessly pricing the *quality* of the business delivering the infrastructure.

Reassessing Valuation Metrics Against Long-Term Projections

For investors looking past the headline volatility, the key metric shifts from comparing the current price to last quarter’s earnings, to evaluating the price against robust, multi-year growth projections. The market has matured past the era of paying abstract multiples for pure promise. As of Q1 2026, the market is distinctly separating AI companies based on “underwriting certainty”—those showing clear monetization, improving unit economics, and durable demand command premium pricing, while others face sharper discounts.

When a company, particularly one entrenched in the essential infrastructure layers (networking, memory), is expected to see its earnings expand by well over fifty percent in the coming year, even after significant recent appreciation, the associated price-to-earnings multiple can appear highly attractive when viewed through the lens of its projected future earning power. In 2026, broad market returns are expected to be driven primarily by this earnings growth, not by a general expansion of valuation multiples, which are already elevated relative to long-term averages. The market is becoming more discerning: if a company can convert the massive capital expenditure it receives into real, accelerating margins, the current P/E ratio, no matter how high it looks today, may represent a discount relative to its own projected financial reality two or three years out.

This disconnect between near-term sentiment (driven by quarterly noise and macroeconomic uncertainty) and long-term fundamental growth potential forms the core of the thesis that infrastructure enablement plays possess substantial, durable upside throughout 2026 and beyond. This is about moving from a *growth* multiple to an *earnings* multiple that justifies itself through demonstrable operational leverage.
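The valuation argument in this section reduces to simple arithmetic: if the share price holds still while earnings grow, the multiple compresses. The sketch below uses a hypothetical trailing P/E of 40 and assumes the 50% growth rate is sustained for up to three years; the text only cites that rate for the coming year, so the later years are an illustration, not a forecast.

```python
def forward_pe(trailing_pe: float, annual_growth: float, years: int = 1) -> float:
    """P/E on projected earnings, holding today's price constant."""
    return trailing_pe / (1 + annual_growth) ** years


TRAILING_PE = 40.0  # hypothetical multiple on current earnings
GROWTH = 0.50       # 50% annual earnings growth (sustained growth is an assumption)

for years in (1, 2, 3):
    multiple = forward_pe(TRAILING_PE, GROWTH, years)
    print(f"P/E on earnings {years} year(s) out: {multiple:.1f}")
```

Under these assumptions, a multiple of 40 today falls to about 26.7 on next year's earnings and about 11.9 on earnings three years out. The result is highly sensitive to whether the growth actually materializes.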

Navigating Volatility While Maintaining a Constructive Long-Term View

The road ahead in the artificial intelligence sector will undoubtedly feature turbulence; market expectations are high, and any perceived hiccup in execution or any breakthrough by a competitor can trigger sharp, short-term sell-offs. For the well-informed investor, these moments of heightened uncertainty are best viewed as periods for strategic accumulation rather than panic capitulation. When you see the stock of a foundational memory provider dip because of a minor pricing negotiation or a networking play slide due to a single cloud customer pausing a build, you are witnessing a temporary market overreaction to a long-term, undeniable trend.

The overarching story remains unaltered: artificial intelligence represents the largest capital investment cycle in human history. This story is not about a single piece of software; it’s about the physical construction of a new digital layer of the economy. The prudent approach, therefore, is to maintain a long-term constructive posture focused on the indispensable infrastructure providers that possess powerful technological moats (like HBM stacking capability or proprietary high-speed switch silicon) and are now diversifying into new, high-value end markets. That posture is how investors can capture the upside this AI infrastructure theme suggests as 2026 unfolds.

This story of foundational technology enablement is only entering its most profitable deployment phase. The biggest winners in the next few years won’t just be the ones building the tallest skyscrapers (the core chip providers); they will be the ones who control the concrete, the steel, and the high-tension electrical lines connecting everything.

Actionable Takeaways for Capturing the Infrastructure Upside

The shift from focusing purely on the main processor to investing in the ecosystem requires a slight adjustment in tactical execution. Here are the key actions and focus areas to consider as 2026 progresses:

- Prioritize “Picks and Shovels” with Scarcity: Look beyond the major chip designers to companies controlling essential, hard-to-replicate components. This means firms with a clear, dominant position in HBM supply chains or those with proprietary, high-speed, low-latency Ethernet switching technology that the hyperscalers *must* integrate to achieve scale.

- Demand Unit Economics Over Top-Line Growth: In the current environment, a company growing revenue by 40% with expanding gross margins is infinitely more valuable than one growing 60% with contracting margins. Scrutinize the margin profile of your infrastructure plays. Are their margins *improving* with scale, or are they being squeezed by rising material costs? This confirms sustainable competitive advantages.

- View Volatility as Strategic Entry Points: The market is prone to overreacting to news that does not change the secular thesis. When a major infrastructure supplier experiences a near-term stock decline due to contract negotiation noise or a temporary supply hiccup—especially if the long-term order book remains solid—treat it as an opportunity to establish or increase a position at a more favorable entry point.

- Track CAPEX Conversion Rates: Pay close attention to the guidance from hyperscalers regarding their massive capital spending plans. While the total spend is known, the speed at which this money converts into recognized revenue for networking, memory, and data center construction partners is the key short-term metric to monitor. Reviewing the latest data center construction growth projections can give you a leading indicator for these partners.

- Embrace the Open Standard Bet: For networking, the industry-wide push toward high-speed, open Ethernet ecosystems (like those running the open-source SONiC operating system) over proprietary interconnects suggests favoring suppliers deeply integrated into that open path. This diversifies their customer base and makes their technology the default for next-generation hyperscale deployments.
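The unit-economics takeaway above (40% growth with expanding margins versus 60% growth with contracting margins) can be checked with a small worked example. Both companies, their starting revenue of 100, and the specific margin levels are hypothetical; only the two growth rates come from the text.

```python
def gross_profit(revenue: float, gross_margin: float) -> float:
    """Gross profit as revenue times gross margin."""
    return revenue * gross_margin


START_REVENUE = 100.0  # hypothetical starting revenue for both companies
START_MARGIN = 0.50    # hypothetical starting gross margin for both

base = gross_profit(START_REVENUE, START_MARGIN)

# Company A: 40% revenue growth, margin expands from 50% to 55% (assumed)
profit_a = gross_profit(START_REVENUE * 1.40, 0.55)
# Company B: 60% revenue growth, margin contracts from 50% to 40% (assumed)
profit_b = gross_profit(START_REVENUE * 1.60, 0.40)

for name, profit in (("A (40% growth, expanding margin)", profit_a),
                     ("B (60% growth, contracting margin)", profit_b)):
    print(f"Company {name}: gross profit {profit:.0f}, up {profit / base - 1:.0%}")
```

With these assumed margins, the slower-growing company lifts gross profit by 54% while the faster-growing one manages 28%, which is why the margin trend deserves at least as much scrutiny as the top line.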

The evidence is overwhelming: the artificial intelligence revolution is now an infrastructure build-out, plain and simple. For a deeper understanding of the capital flowing into this area, you should look at recent analysis detailing the overall AI investment cycle trends. While the giants battle over who will own the ultimate AI models, the players providing the essential, hard-to-replicate physical and digital infrastructure—the high-speed plumbing and the high-density memory—are poised to capture value with less direct competition and more certainty in their order books throughout 2026 and well beyond. This foundational enablement story is where the real, sustainable upside is currently being laid down.

What are you seeing as the most undervalued piece of the AI puzzle right now? Are you focused on the chips, the memory, or the connection fabric? Let us know in the comments below!
