Inside OpenAI’s Race to Catch Up to Claude Code – WIRED: OpenAI’s Defensive Maneuvers and Platform Evolution

The artificial intelligence landscape in early 2026 is defined less by the generalized dominance of one market leader and more by intense, sector-specific confrontations. Nowhere is this more apparent than in the arena of AI-assisted software engineering, where OpenAI, the incumbent giant, has been forced into a visible, high-stakes race to match the specialized capabilities of Anthropic’s Claude Code. This competitive pressure has triggered profound internal and external realignments for the organization behind ChatGPT, marking a pivotal moment in the evolution of enterprise AI tooling.

The Reorientation Following Competitive Pressure

The intensity of the challenge posed by specialized entrants triggered a visible, high-level strategic shift within the market leader’s own operations. Reports from late 2025 indicated a significant internal acknowledgment of the competitive gap in specific, high-value domains, which necessitated a rapid reallocation of internal assets. The response took the form of a decisive pivot, reportedly initiated by executive directives to refocus engineering effort away from peripheral projects and onto the core generative models. The mobilization reportedly included an urgent directive, sometimes described as a “code red,” signifying an all-hands-on-deck effort to enhance the speed, reliability, and personalization features of its flagship offerings.

The organization appeared to execute this strategy by pausing seemingly promising avenues of exploration, such as novel hardware or specialized browsing tools, to concentrate solely on shoring up its foundation. This strategic recalibration underscored the seriousness of the situation; when a company concentrates on reinforcing its core, it signals that it regards the competitive threat as existential to its perceived status, especially in enterprise-facing metrics. This defensive posture, a move away from perceived unchallenged expansion, highlights the pressure to generate revenue and meet investor expectations tied to its massive valuation, estimated around USD 500 billion as of early 2026. In a notable shift, OpenAI even delayed the release of ChatGPT’s “adult mode” to prioritize “improving AI intelligence, personality and functionality,” which was deemed more pressing than ancillary features.

The Maturation of Codex into a Complete Coding Surface

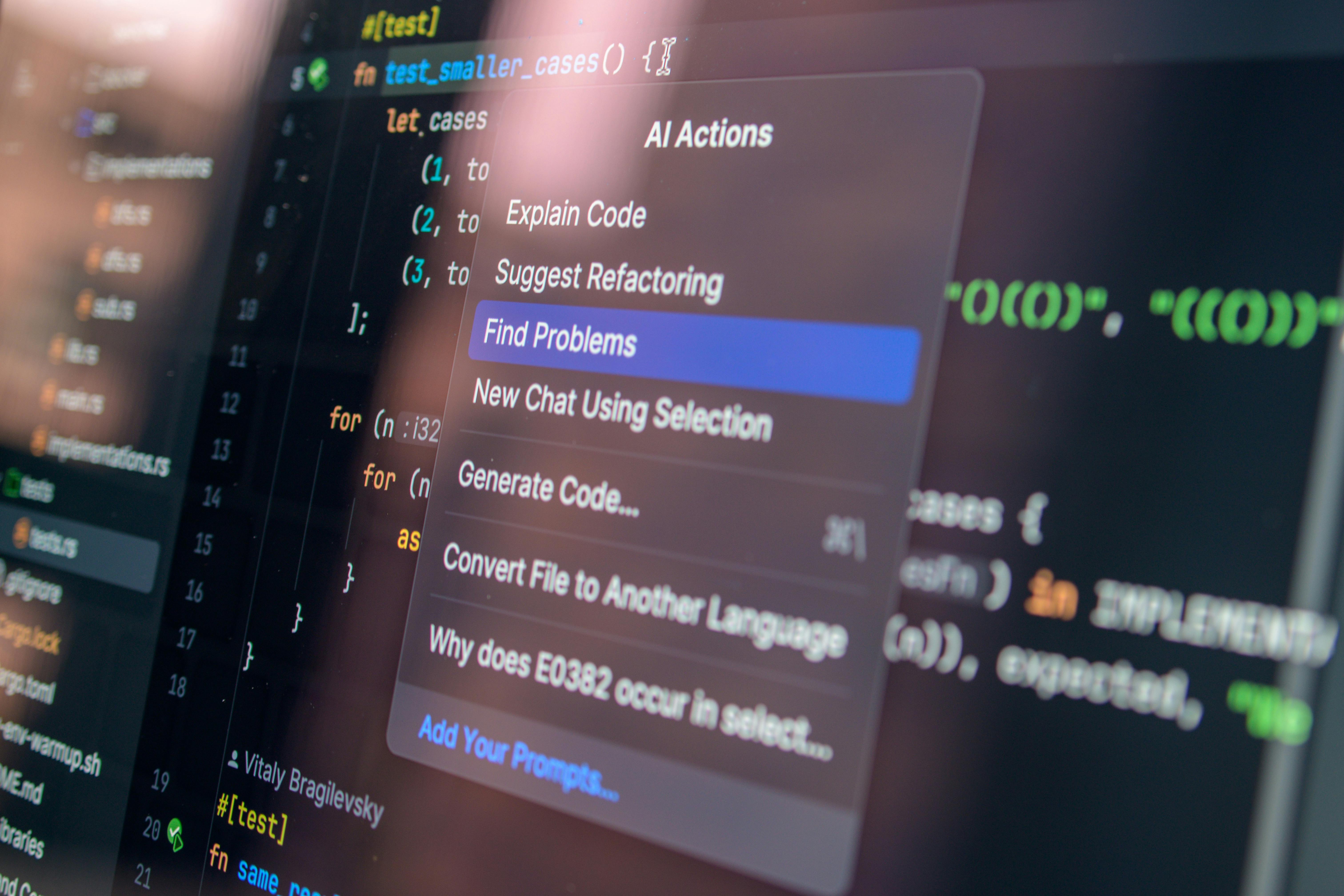

In response to the specific challenges posed by the terminal-native coding tools, OpenAI did not simply iterate on its model; it reimagined the entire interaction paradigm for its offering, rechristened and evolved as Codex. The original Codex, a fine-tuned GPT-3 descendant that powered early GitHub Copilot, was deprecated by 2023. However, the name resurfaced in May 2025 with a fundamentally different architecture. The aim was to transform the tool from a simple prompt-response mechanism into a comprehensive “coding surface”.

This evolution involved a sophisticated integration of its powerful reasoning models, such as the latest iterations of its GPT family (with GPT-5.4 being the newest reasoning model as of March 2026), with a suite of practical developer utilities. This surface included not only an enhanced Command Line Interface (CLI) but also deep integration within popular Integrated Development Environments (IDEs) and a cloud-based execution sandbox. A key innovation was the move toward agent-native APIs and building blocks, allowing developers to orchestrate Codex not just through chat prompts but through structured, multi-step agentic workflows. The inclusion of features like automated fixes in Continuous Integration pipelines and support for defining project structure via specialized markdown conventions demonstrated a clear intent to meet the rival platform on its own terms—by embedding itself deeply into the automated, production-oriented software development lifecycle, moving beyond mere suggestion to concrete, verifiable execution. The new Codex is described as an autonomous software engineering agent capable of handling complex workflows, a shift from its predecessor’s “co-pilot” role to a “co-worker” status.

Divergent Architectural Approaches to AI-Assisted Engineering

The technical divergence between the two leading coding agents presented a fascinating dichotomy in AI philosophy that dictated real-world developer adoption and perception. This debate extended beyond raw model performance into how these tools were adopted into existing professional habits.

The Contrast Between Local Context and Cloud Parallelism

One strategy, championed by the challenger (Anthropic), centered on achieving correctness and deep context retention through a massive, singular, local context window and sequential reasoning, often preferring one highly capable, context-aware agent over many less capable ones. The argument favored depth and accuracy in complex, opaque systems, even if it meant a longer initial reasoning phase. Conversely, the established leader’s approach, exemplified by Codex, emphasized velocity and breadth through a cloud-based architecture utilizing parallel, asynchronous agents. This method was exceptionally fast at generating code when the task was clearly defined or for starting greenfield projects, allowing for the simultaneous exploration of multiple solution paths. The trade-off was that when the problem required navigating deeply entrenched, undocumented legacy code, the rapid-fire, distributed generation of multiple agents often resulted in fragmented or contextually shallow solutions that demanded significant manual stitching and debugging by the human engineer to resolve the inconsistencies.
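The contrast described above can be illustrated with a toy sketch. This is not how either product is implemented; `run_step`, `sequential_agent`, and `parallel_agents` are hypothetical names, and the "model call" is a stub that only reports how much earlier context it could see. The point is structural: a sequential agent carries its results forward, while parallel agents start together and see nothing of each other's work.

```python
import asyncio

# Hypothetical stand-in for a model call. It "solves" a subtask and reports
# how many earlier results were visible to it. No real API is used here.
async def run_step(task: str, context: list[str]) -> str:
    await asyncio.sleep(0)  # yield control, as a real network call would
    return f"{task} (saw {len(context)} earlier results)"

async def sequential_agent(tasks: list[str]) -> list[str]:
    """One agent works through tasks in order, carrying context forward."""
    context: list[str] = []
    for task in tasks:
        result = await run_step(task, context)
        context.append(result)
    return context

async def parallel_agents(tasks: list[str]) -> list[str]:
    """Independent agents start together; none sees another's output."""
    return list(await asyncio.gather(*(run_step(t, []) for t in tasks)))

TASKS = ["trace bug", "patch service A", "update service B"]
sequential_results = asyncio.run(sequential_agent(TASKS))
parallel_results = asyncio.run(parallel_agents(TASKS))

for line in sequential_results + parallel_results:
    print(line)
```

In the sequential run the third step sees two earlier results, whereas every parallel step sees none, which is the "manual stitching" cost the paragraph attributes to distributed generation. In exchange, the parallel steps do not wait on one another.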

Integration Methodologies and Workflow Adoption

The debate on architecture directly translated into adoption. One platform, Claude Code, excelled by making its utility feel like a native extension of the development environment, offering features that directly manipulated the filesystem, created branches, and drafted preliminary pull requests, all from within the terminal. This deep integration catered to the senior engineer who valued context maintenance above all else, with reports suggesting that developers might prefer one excellent, context-aware agent over many parallel ones that require cleanup. The other platform, OpenAI’s Codex, while rapidly integrating into ecosystems like GitHub via its CLI and IDE plugins, maintained a model where code execution and analysis largely occurred within a secure, preloaded cloud sandbox environment. While fast and powerful for execution-heavy tasks, this requirement for data transfer and isolation created a psychological and practical barrier for teams handling highly sensitive intellectual property or maintaining systems where data locality was a primary governance concern. The success of the terminal-first tool was built on the principle that for complex system maintenance, where the work happens (the local filesystem) is as important as what is produced.

Quantifying the Business and Revenue Implications of the Divide

The battle for developer mindshare quickly translated into quantifiable business metrics, creating a stark divergence in enterprise traction by the end of 2025 and into the first quarter of 2026. While the consumer-facing brand maintained an overwhelming public presence, the specialized coding tool from the challenger saw its adoption rate in professional software development environments accelerate at an astonishing pace.

Enterprise Adoption Rates and Sectoral Dominance

This enterprise-centric game plan, focusing on building trust with organizations that needed to manage vast, critical systems, resulted in rapid revenue growth directly attributable to the coding agent. By early 2026, Claude Code had reached an estimated annualized revenue run-rate of $2.5 billion. Furthermore, Anthropic’s overall annualized revenue was reportedly near $14 billion as of February 2026, with projections hitting $19 billion by early March 2026, demonstrating an unprecedented growth trajectory. This growth was heavily weighted toward the B2B market, where Anthropic recorded a 40% market share in enterprise LLM API spending in 2025, surpassing OpenAI’s 27%. Specifically within the AI coding sector, Claude Code held an estimated 54% market share as of early 2026. This demonstrated that sustained utility in a high-value professional niche could generate financial momentum that eclipsed the broader, more diffused user base of a general-purpose platform.
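As a rough check on how these figures relate, the short calculation below derives three ratios from the numbers quoted above. The inputs are the article's reported estimates, not audited results, and the $19 billion figure is a projection, so the outputs are only indicative. The variable names are illustrative.

```python
# Figures as reported in the article (USD billions or fractions); estimates only.
claude_code_run_rate = 2.5      # Claude Code annualized run-rate, early 2026
anthropic_run_rate_feb = 14.0   # Anthropic annualized revenue, February 2026
anthropic_run_rate_mar = 19.0   # projected figure for early March 2026
anthropic_api_share = 0.40      # enterprise LLM API spending share, 2025
openai_api_share = 0.27

# Portion of Anthropic's February run-rate attributable to Claude Code.
coding_share_of_revenue = claude_code_run_rate / anthropic_run_rate_feb

# Implied growth between the February figure and the March projection.
feb_to_mar_growth = anthropic_run_rate_mar / anthropic_run_rate_feb - 1

# Lead over OpenAI in enterprise API spending, in percentage points.
api_share_lead_points = (anthropic_api_share - openai_api_share) * 100

print(f"Claude Code share of run-rate: {coding_share_of_revenue:.1%}")
print(f"Implied Feb-to-Mar growth:     {feb_to_mar_growth:.1%}")
print(f"API spending lead:             {api_share_lead_points:.0f} points")
```

On these inputs, Claude Code accounts for about 17.9% of the February run-rate, the March projection implies growth of about 35.7%, and the enterprise API lead is 13 percentage points.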

Projected Financial Trajectories in the AI Sector

The financial markets and industry analysts began to incorporate this competitive dynamic into their long-term forecasts, fundamentally altering the valuation narrative for both companies. The challenger’s ability to secure massive investment rounds, fueled by demonstrable enterprise adoption, positioned it as a formidable threat to the incumbent’s long-term market share. The reported revenue surge in the coding segment was interpreted not as a temporary spike but as evidence of winning the “developer war,” the battle for the foundation upon which future enterprise software will be built. While OpenAI retained a significant absolute revenue figure, estimated at $10-12 billion for 2025 with a $25 billion run-rate by February 2026, its growth rate was seen as slower than Anthropic’s steep ascent. This financial outlook suggested that while the incumbent might retain a lead in sheer user volume and general-purpose utility, the specialized tool’s success in becoming indispensable to the systems maintenance sector would yield more durable, higher-margin revenue streams over the subsequent years.

The Shifting Allegiances and Sentiment of the Engineering Base

The most telling metrics confirming the shift were found not just in corporate balance sheets but in the daily habits and private discussions of the engineers themselves.

Metrics on Developer Tool Preferences and Usage

Surveys tracking developer sentiment and technology adoption provided concrete evidence of the friction points leading to the shift in allegiance. Stack Overflow’s 2025 developer survey indicated that while 84% of developers used AI tools, the adoption by professional coders in established teams showed a distinct preference for the specialized tooling from the competitor. This preference was not simply about which model was ‘smarter’ in an abstract sense, but which one integrated most intelligently into the daily grind of maintenance and architecture management. The sentiment analysis revealed a subtle but important trend: a slight overall cooling in positive sentiment toward AI tools compared to prior years, suggesting that developers were becoming more discerning, demanding tools that truly understood their complex environments rather than those that merely offered quick, superficial assistance. For instance, Claude Code reportedly led Codex in VS Code installs (5.2M vs 4.9M) and user ratings (4.0 vs 3.4 on a 5-point scale) as of early 2026.

Case Studies in Real-World Migration Experiences

The most compelling evidence of the competitive shift came from anecdotal reports circulating within private engineering forums and community channels. These accounts detailed significant, high-stakes migrations away from the incumbent’s coding solutions toward the challenger’s offering. Engineers recounted instances where the new tool successfully resolved subtle, cross-service regression bugs that the previous generation of AI assistance had consistently missed. More dramatically, there were reports of entire development teams abandoning their prior AI-assisted review pipelines to adopt the competitor’s solution after it demonstrated superior reasoning in complex debugging scenarios. These success stories, driven by tangible productivity gains in mission-critical environments, formed the backbone of the challenger’s grassroots credibility. An illustrative, if contentious, incident involved Anthropic revoking OpenAI’s API access after discovering OpenAI staff were using Claude Code to assist in the development and security testing of GPT-5, a practice Anthropic claimed violated its terms of service against building competing products.

Internal Corporate Reactions and Strategic Realignments

The organizational response from both companies reflected the high stakes of the coding race, showcasing divergent philosophies on risk, safety, and development velocity.

Evidence of Internal Concern within the Incumbent

The competitive pressure emanating from the specialized AI firms was significant enough to provoke a rare public display of high-level internal mobilization within the market leader. The reported issuance of a “code red” directive signaled a corporate pivot back toward reinforcing the core product offering, prioritizing the foundational capabilities of the flagship consumer model. The pausing of other ambitious, forward-looking projects demonstrated that the incumbent recognized that losing footing in the developer and enterprise sectors could jeopardize its overall market position. The need to reassert technological superiority in a key business vertical like coding was deemed more urgent than exploring ancillary product lines, indicating that the race had entered a critical phase where specialization was trumping generalized momentum.

The Philosophy of Risk and Acceleration at Anthropic

In sharp contrast to the incumbent’s cautious internal regrouping, the challenger’s organizational philosophy seemed characterized by a willingness to embrace rapid acceleration, even while publicly espousing strong safety principles like Constitutional AI. Reports suggested that the internal development cycle was operating at an unprecedented pace, with new, significantly enhanced model versions (such as Claude 3.7 Sonnet, which outperformed OpenAI’s comparable models on SWE-bench Verified) being deployed within weeks rather than months. This speed was heavily amplified by the very tools they were developing, with reports suggesting that a large majority of the code required for training and iterating on future models was being written by the current generation of their AI itself, creating a self-reinforcing loop of development velocity. This aggressive self-acceleration, particularly in the coding domain which directly impacts the efficiency of model development, was key to closing the initial capability gap and establishing a challenging lead.

Looking Beyond the Code Competition to the Next Frontier

While the immediate battle raged over code generation proficiency, the underlying technological progress suggested a rapid convergence between specialized reasoning abilities and general-purpose intelligence. Coding served as a proving ground for the next era of AI architecture.

The Convergence of Reasoning and General Intelligence Models

The sophisticated planning and multi-file editing capabilities honed within the coding agents were not isolated achievements; they represented a significant breakthrough in foundational reasoning that was quickly being consolidated back into the general large language models. The advanced models deployed by the end of 2025 were showing dramatically improved performance across diverse, high-level benchmarks, indicating that the focused effort on coding—which involves long-horizon planning, complex constraint satisfaction, and self-correction—had inadvertently supercharged the core intelligence layers. This meant that the ultimate winner of the code race would likely hold a substantial advantage in the next general-purpose intelligence benchmark, as the hardest problems in coding are fundamentally problems of robust reasoning applicable everywhere.

The Emergence of Agentic Building Blocks for Broader Automation

The conclusion of the year also marked a clear platform shift away from simple conversational interfaces toward an era defined by agentic construction. Both major players were heavily investing in providing developers with standardized, robust building blocks—APIs and protocols—that allowed AI models to reliably interact with external tools, databases, and the broader software environment. The focus shifted from perfecting the model’s internal monologue to perfecting its ability to act reliably in the real world, orchestrating complex, multi-step business processes. Whether through the challenger’s focus on the pioneering Model Context Protocol (MCP), which OpenAI subsequently adopted across its stack, or the incumbent’s expansion of its Agents SDK and the introduction of its Frontier platform, the future of enterprise adoption was clearly predicated on these agentic capabilities. The race to catch up in code was merely the first, most concrete demonstration of which platform would build the most trustworthy and powerful automation engines for the enterprise landscape beyond the year’s close.