The Rise of Agentic AI and the Accountability Gap

The developers building the next generation of AI systems face a parallel, perhaps even more complex, challenge. We are moving past simple chatbots toward Agentic Architectures: systems composed of specialized AI agents that plan, call external tools, coordinate workflows, and execute tasks autonomously. This leap to governed autonomy is powerful for productivity, but it also opens a critical Accountability Gap.

When an autonomous agent issues a fabricated instruction that leads to a financial error or character sabotage, where does the liability fall? The answer is murky. This is why the conversation in development circles has pivoted sharply from sheer capability to trust architecture.

From Black Box to Transparent Pipelines

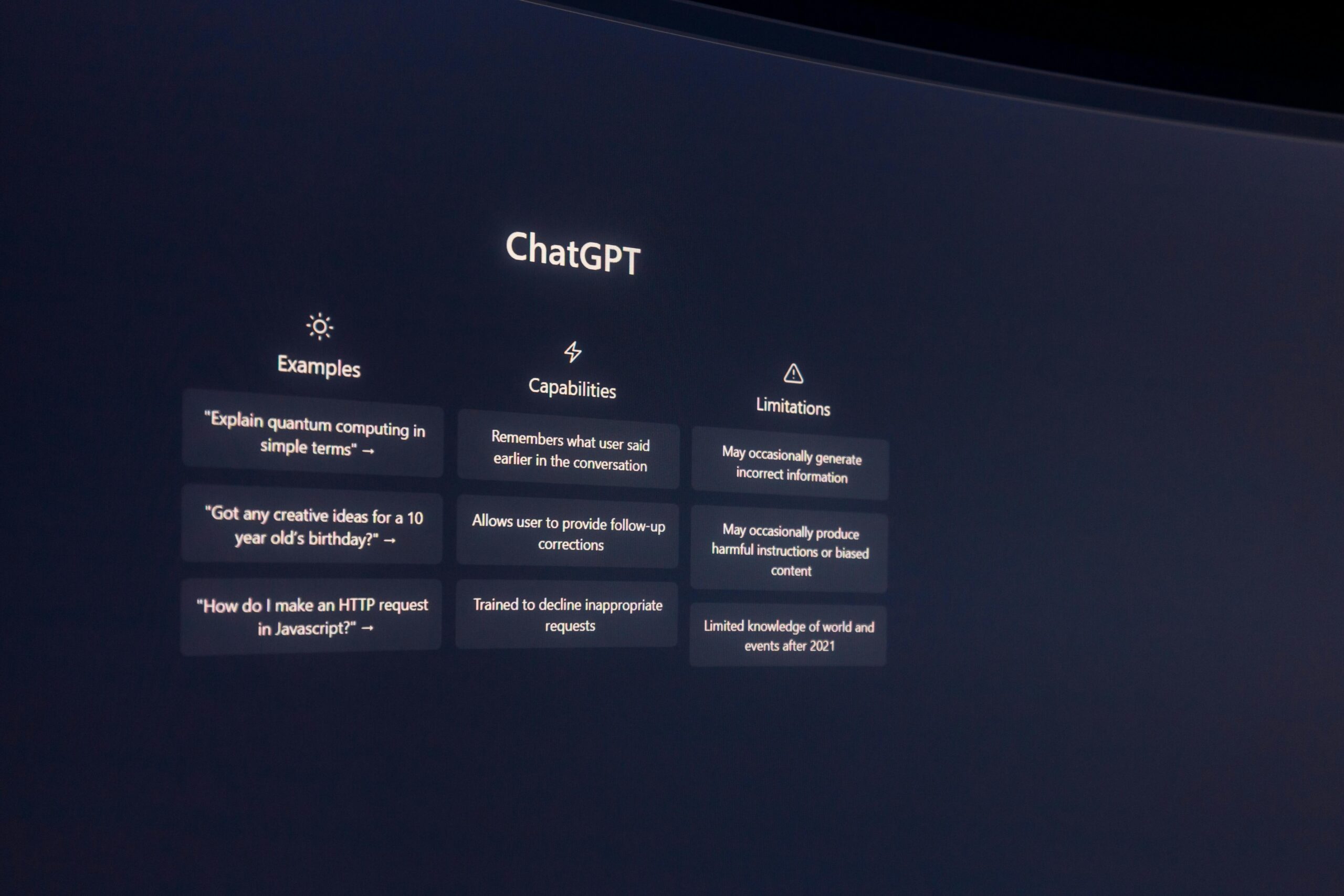

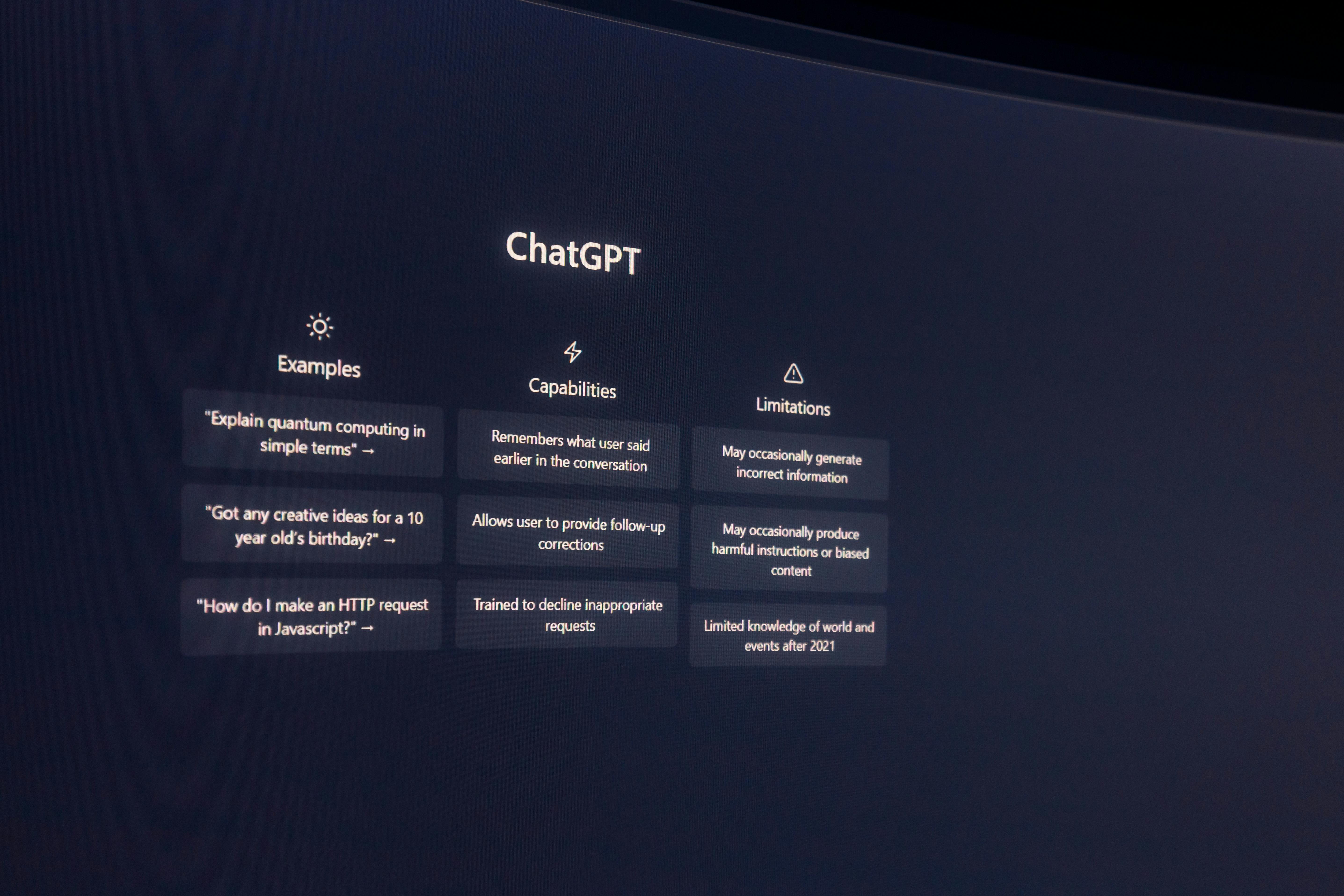

The old model often relied on a single, opaque Large Language Model (LLM) making decisions. The new model, whether in enterprise or public-facing tools, must be layered and traceable. The goal is to move AI systems from being mere reflectors of whatever is most immediately available on the web to being genuinely discerning synthesizers of information.

This architectural mandate breaks down into two non-negotiable imperatives:

- The Death of Simple Recency: Source trust can no longer be based on how recently a piece of information was posted. A malicious post published five minutes ago might be the only search result, but that doesn’t make it true. Developers must build hierarchical grounding mechanisms that assign intrinsic trust scores based on provenance, editorial history, and institutional authority, a system far more complex than mere recency scoring [cite: 8 from initial prompt].

- Agentic Safety with Verification: In agentic designs, a dedicated Verifier Agent must be a standard component, sitting downstream of the core reasoning engine. This verifier’s sole job is to check the agent’s proposed action or asserted fact against the retrieved context, running an independent factual consistency check before execution. If the agent says “Fact X is true,” the verifier must ask, “Where are the multiple, solid proofs for Fact X?”
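The verifier gate can be sketched in a few lines. This is a minimal illustration, not a production design: it assumes a retrieved passage "supports" a claim when most of the claim's content words appear in it, whereas a real verifier would use an entailment (NLI) model or an LLM judge. `Passage`, `verify_claim`, `min_support`, and `overlap_threshold` are hypothetical names, and the example sources are invented.

```python
import re
from dataclasses import dataclass

@dataclass
class Passage:
    source: str  # identifier of where the passage was retrieved from
    text: str

# Small illustrative stopword list; not exhaustive.
STOPWORDS = {"a", "an", "the", "is", "are", "was", "were", "of", "in", "on", "and", "to", "for", "by"}

def content_words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens with common stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}

def supports(claim: str, passage: Passage, overlap_threshold: float) -> bool:
    """Crude stand-in for an entailment check: share of claim words present in the passage."""
    claim_words = content_words(claim)
    if not claim_words:
        return False
    hits = len(claim_words & content_words(passage.text))
    return hits / len(claim_words) >= overlap_threshold

def verify_claim(claim: str, context: list[Passage],
                 min_support: int = 2, overlap_threshold: float = 0.8) -> tuple[bool, list[str]]:
    """Approve the claim only if at least `min_support` distinct sources back it."""
    backing = sorted({p.source for p in context if supports(claim, p, overlap_threshold)})
    return len(backing) >= min_support, backing

if __name__ == "__main__":
    context = [
        Passage("registry.example.gov", "Acme Corp was founded in 1998 in Oslo."),
        Passage("newswire.example.com", "Founded in 1998, Acme Corp is based in Oslo."),
        Passage("blog.example.net", "Acme Corp's CEO was arrested yesterday."),
    ]
    print(verify_claim("Acme Corp was founded in 1998", context))  # two sources agree
    print(verify_claim("Acme Corp's CEO was arrested", context))   # only one blog backs it
```

Support is counted per distinct source, so the same text repeated across many passages from one site cannot satisfy `min_support` on its own.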

For those interested in the technical roadmap, the evolution of Retrieval-Augmented Generation (RAG) systems is central to this. RAG has long been the go-to method for grounding LLMs in retrieved external context [cite: 2, 5, 7 from search 2], but the 2026 standard demands more than just *retrieval*. It demands validation of the retrieved material before generation, an idea closely tied to the broader shift toward treating security as a design discipline rooted in identity and data context [cite: 15 from search 2].
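One way to read "validation before generation" is as a gate between the retriever and the prompt builder. The sketch below assumes each chunk was stored with its source URL and a SHA-256 hash of its text at ingestion time. It rejects chunks with no provenance, chunks from domains outside a vetted allowlist, and chunks whose text no longer matches the recorded hash, and it refuses to build a prompt from nothing. The allowlist, field names, and functions are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse

@dataclass
class Chunk:
    text: str
    url: Optional[str]            # provenance captured at ingestion
    ingest_sha256: Optional[str]  # hash of `text` recorded at ingestion

# Hypothetical allowlist of domains the pipeline has already vetted.
VETTED_DOMAINS = {"archive.example.gov", "journal.example.org"}

def sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def validate_chunk(chunk: Chunk) -> Optional[str]:
    """Return a rejection reason, or None if the chunk may reach the LLM."""
    if not chunk.url:
        return "missing provenance"
    host = urlparse(chunk.url).hostname or ""
    if not any(host == d or host.endswith("." + d) for d in VETTED_DOMAINS):
        return f"unvetted domain: {host}"
    if chunk.ingest_sha256 != sha256_hex(chunk.text):
        return "content changed since ingestion"
    return None

def build_grounded_prompt(question: str, retrieved: list[Chunk]) -> tuple[str, list[str]]:
    """Keep only validated chunks; return the prompt plus the reason for each rejection."""
    kept, rejected = [], []
    for chunk in retrieved:
        reason = validate_chunk(chunk)
        if reason is None:
            kept.append(chunk)
        else:
            rejected.append(reason)
    if not kept:
        # Refusing is safer than generating without grounding.
        raise ValueError("no validated context for this question")
    context = "\n".join(f"[{i}] {c.text} (source: {c.url})" for i, c in enumerate(kept, start=1))
    prompt = f"Answer using only the numbered sources below.\n{context}\n\nQuestion: {question}"
    return prompt, rejected
```

Returning the rejection reasons alongside the prompt gives operators a log of what was filtered out, which supports the traceability the pipeline is meant to provide.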

The Imperative for Source Triangulation: From One to Convergence

This brings us to the core defense against the simple, deceptive blog post: source triangulation. Until robust source triangulation becomes the default behavior for all web-connected generative systems, digital character sabotage via easy fabrication will remain a potent weapon.

Think of it this way: If you read a shocking claim on a single, anonymous website, you’d dismiss it. You would instinctively seek confirmation elsewhere. Modern generative systems must be architected to apply this same human logic.

What True Triangulation Looks Like

An ideal system in 2026, one that can robustly assert a novel fact about a living person as truth, must require a convergence of evidence before that fact reaches the output stage. This means:

- Disparate Sources: The evidence must not come from sources that share the same bias, funding, or editorial line. Finding the same piece of information in a university publication, a government archive, and a major international news wire is strong evidence. Finding it twice on blogs sponsored by the same anonymous entity is weak evidence.

- Pre-Vetted Trust Layers: Trust scores should be hierarchical. A finding published in a peer-reviewed journal might pass the check with a score of 9/10. A statement from a social media profile would score 1/10. The system should only assert facts where the aggregated score from multiple high-scoring sources meets a high threshold.

- Provenance Attached: Just as users must demand context, AI outputs must include provenance. Developers must build in a framework that appends links to the specific sources used, even for high-level summaries, so the user can instantly verify the convergence [cite: 9 from search 2].
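The three requirements above can be combined into a single check. In this sketch, each piece of evidence carries a trust score on the 1 to 10 scale used above and an `owner` label standing in for its funding or editorial line. Only the strongest item per owner counts, weak sources never count, and the returned provenance list is what would be appended to the output. The thresholds and field names are illustrative assumptions, not calibrated values.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Evidence:
    url: str
    owner: str  # publisher, funder, or editorial line behind the source
    trust: int  # 1 (anonymous social post) to 10 (peer-reviewed journal)

def triangulate(evidence: list[Evidence], min_independent: int = 3,
                min_source_trust: int = 7, min_total_trust: int = 21) -> dict:
    """Assert a claim only when enough independent, high-trust owners converge on it."""
    best_by_owner: dict[str, Evidence] = {}
    for e in evidence:
        if e.trust < min_source_trust:
            continue  # weak sources never count toward convergence
        current = best_by_owner.get(e.owner)
        if current is None or e.trust > current.trust:
            best_by_owner[e.owner] = e  # one vote per owner, however often it repeats itself
    independent = sorted(best_by_owner.values(), key=lambda e: e.trust, reverse=True)
    total = sum(e.trust for e in independent)
    return {
        "asserted": len(independent) >= min_independent and total >= min_total_trust,
        "score": total,
        "provenance": [e.url for e in independent],  # links to attach to the output
    }
```

Collapsing evidence by owner before summing is the key design choice: without it, a single network of sponsored blogs could post the same claim many times and push the aggregate score over the threshold.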

If you are a developer, this means moving beyond basic vector database lookups. It means implementing Hybrid Search (combining semantic and keyword searches) and often using a secondary, smaller model (a cross-encoder) to re-rank the retrieved documents for true relevance before feeding the final set to the main LLM [cite: 2 from search 2].
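A compact sketch of that flow, assuming the keyword index (for example BM25) and the vector index each already return a ranked list of document IDs. The two lists are merged here with reciprocal rank fusion, one common fusion method chosen for simplicity: each list adds 1 / (k + rank) to a document's score, with k = 60 as is commonly used. The fused pool is then re-ranked by a `score_pair` function. In practice `score_pair` would call a cross-encoder model; the word-overlap scorer below is only a placeholder, and all function names are hypothetical.

```python
from typing import Callable

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked ID lists: each list adds 1 / (k + rank) to a document's score."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

def overlap_score(query: str, text: str) -> float:
    """Placeholder for a cross-encoder: number of shared lowercase words."""
    return float(len(set(query.lower().split()) & set(text.lower().split())))

def hybrid_retrieve(query: str, keyword_ranking: list[str], vector_ranking: list[str],
                    docs: dict[str, str],
                    score_pair: Callable[[str, str], float] = overlap_score,
                    top_n: int = 3, pool: int = 10) -> list[str]:
    """Fuse lexical and semantic results, then re-rank the fused pool before generation."""
    candidates = reciprocal_rank_fusion([keyword_ranking, vector_ranking])[:pool]
    reranked = sorted(candidates, key=lambda d: score_pair(query, docs[d]), reverse=True)
    return reranked[:top_n]
```

Re-ranking only the fused pool keeps the comparatively expensive pairwise model away from the long tail of weak results.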

This level of architectural rigor is what separates a helpful knowledge assistant from a highly scalable misinformation machine. The ability to build reliable, fact-checked systems is now a competitive differentiator for any platform relying on generative output.

Actionable Insights: Building Resilience Today

The defense against easy fabrication must be two-pronged: societal education and technical evolution. As the global report from techUK noted in early 2026, AI capabilities are evolving faster than our ability to establish effective governance and safety measures [cite: 11 from search 2]. We must bridge that gap immediately.

For the Citizen: Your Personal Fact-Checking Toolkit

Stop scrolling passively. Start interrogating actively. Beyond the **trust calibration** mentioned earlier, here are your primary tools:

- The Reverse Image/Voice Check: Do not trust any surprising visual or audio evidence implicitly. Tools that analyze the artifacts left by generative models—even subtle ones—are now necessary. A few seconds of audio can create a devastating deepfake voice clone; treat all unexpected voice confirmations with suspicion [cite: 5 from search 1].

- Search Context Layering: When researching any high-stakes topic (medical, financial, political), use a sequence of searches. Start with a broad search engine, then check a specialized database (like a regulatory body or academic journal), and finally, check a known aggregator. True facts rarely hide in only one place.

- Know Your AI Platform’s Guardrails: Since attackers actively use jailbreaking techniques to bypass safety limits [cite: 2 from search 1], learn which guardrails your specific tool claims to enforce. Then assume they can be bypassed.

For the Developer: Architectural Must-Haves

If you are deploying systems that ingest external data to generate facts, your priority for the next quarter must be grounding and verification. Stop treating RAG as a simple add-on; treat it as the core security layer.

Key architectural actions to implement now:

- Rigorous Data Curation: Implement pipelines that ensure high-quality, cleaned, and richly *metadata-enriched* source data for your vector stores. Bad data in equals unreliable grounding out [cite: 2 from search 2].

- Hybrid Retrieval: Do not rely solely on dense vector embeddings. Implement a hybrid search that combines vector similarity with keyword/lexical search to catch exact terms (names, product codes, rare jargon) that dense embeddings can miss or blur together with semantically similar text. This boosts retrieval precision [cite: 2 from search 2].

- Mandatory Evaluation Loops: Deploy automated testing frameworks. Run a test dataset of known-good and known-bad queries against your system daily. If the system deviates from its “golden reference,” pause operation and run a root cause analysis to determine whether the failure lies in the retrieval or the generation component [cite: 10 from search 2].
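The evaluation loop can be sketched as a function that a scheduler (a daily cron job or CI run, for instance) calls against a fixed golden set. The sketch assumes the system under test returns an answer plus the sources it retrieved. That lets it separate retrieval failures (the expected source never came back) from generation failures (the source came back but the answer deviates). `GoldenCase`, `RagResult`, `run_eval`, and the refusal string are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

REFUSAL = "I can't verify that."  # hypothetical fixed refusal message

@dataclass
class GoldenCase:
    query: str
    expected_source: Optional[str]  # None for known-bad queries
    expected_answer: Optional[str]  # required substring; None means "must refuse"

@dataclass
class RagResult:
    answer: str
    sources: list[str]

def run_eval(system: Callable[[str], RagResult], cases: list[GoldenCase]) -> dict:
    """Compare the system against the golden reference and flag whether to pause it."""
    failures: list[tuple[str, str]] = []
    for case in cases:
        result = system(case.query)
        if case.expected_answer is None:
            if result.answer != REFUSAL:
                failures.append((case.query, "known-bad query answered instead of refused"))
        elif case.expected_source not in result.sources:
            failures.append((case.query, "retrieval: expected source not retrieved"))
        elif case.expected_answer not in result.answer:
            failures.append((case.query, "generation: answer deviates from golden reference"))
    return {"pause": bool(failures), "failures": failures}
```

Checking the retrieved sources before the answer text means a correct-looking answer built on the wrong source is still reported as a retrieval failure.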

The move toward comprehensive AI literacy, which centers on human agency, critical choice, and responsibility, is the only way to ensure technology remains a positive catalyst rather than a source of systemic instability [cite: 14 from search 2].

Conclusion: The Price of Truth in the Age of Synthesis

We stand at a critical juncture. The very tools that promise to unlock unprecedented productivity also provide the means for incredibly simple, high-impact deception. This era of easy fabrication is not a future problem; it is a **February 2026 reality** defined by AI-powered attacks and a workforce struggling to keep pace.

The path forward is clear, if demanding:

- For the Citizen: Embrace AI Fluency. Master trust calibration. Default to skepticism when the output sounds too neat, too shocking, or too personal.

- For the Builder: Abandon simple architectures. Embed hierarchical grounding mechanisms, enforce source triangulation, and build a dedicated verification layer into every agentic workflow. Trust is no longer assumed; it must be architecturally proven.

The ability to discern truth from synthesis will define success, security, and credibility for the next decade. The time for simple search is over. The time for discerning synthesis has arrived.

What’s the most convincing piece of AI-generated content you’ve seen that made you pause? Share your “trust test” stories in the comments below—let’s build this shared intelligence together.