The New Engineering Paradigm: Intentional Collaboration with AI for Accelerated Technical Leadership

The landscape of software engineering, particularly within hyper-scale technology organizations, has undergone a fundamental transformation. The very definition of a high-impact technologist is being rewritten, shifting the emphasis from manual implementation mastery to the strategic orchestration of advanced artificial intelligence tools. A technology leader who recently achieved a rapid promotion by focusing on the construction and utilization of AI-integrated products has illuminated the critical success factors in this new era. This article synthesizes these advanced methodologies into a comprehensive blueprint for leveraging AI for maximum engineering velocity and career acceleration, grounded in the principles of intentionality, deep understanding, and unwavering accountability.

Defining the New Paradigm: The Philosophy of Intentional AI Collaboration

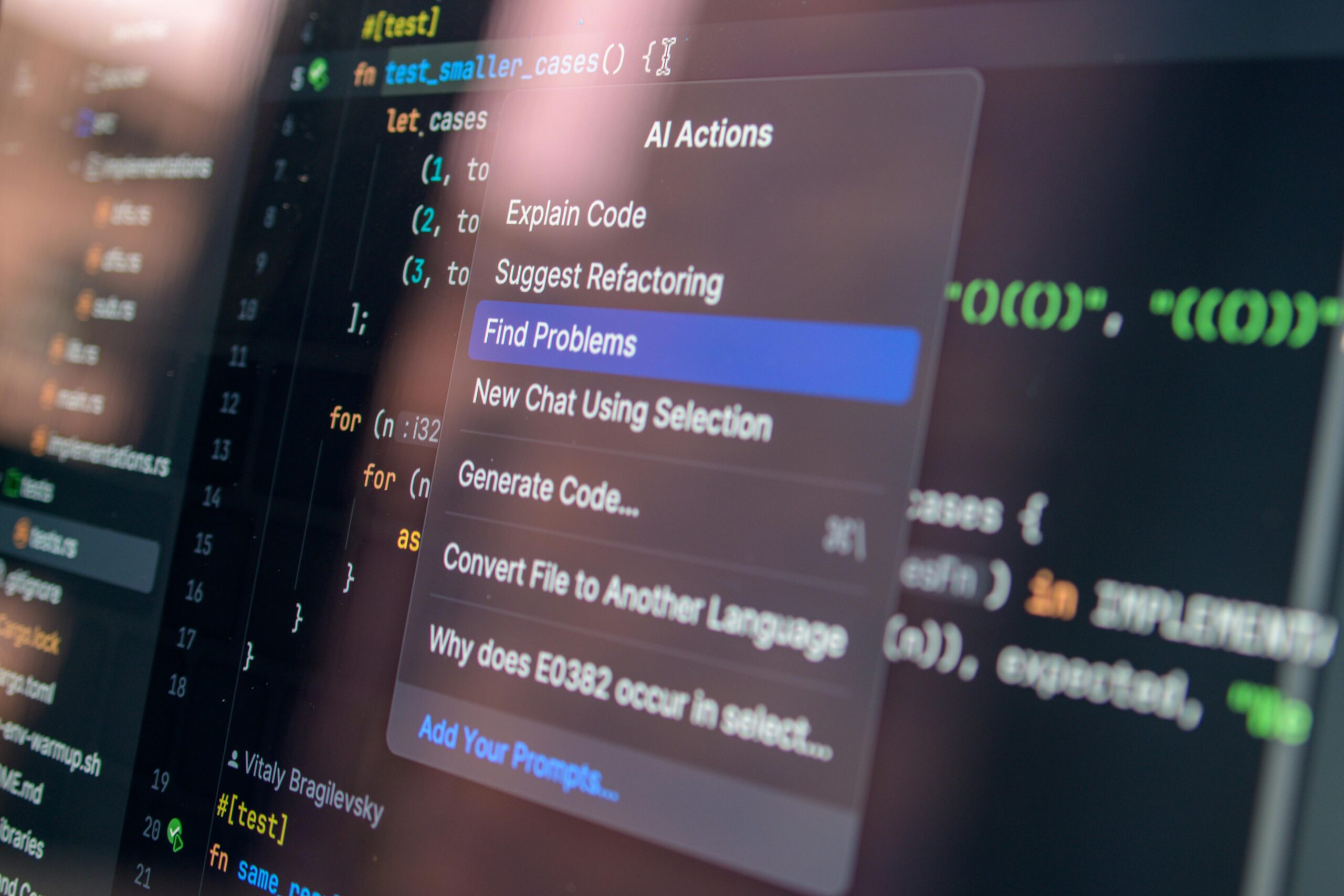

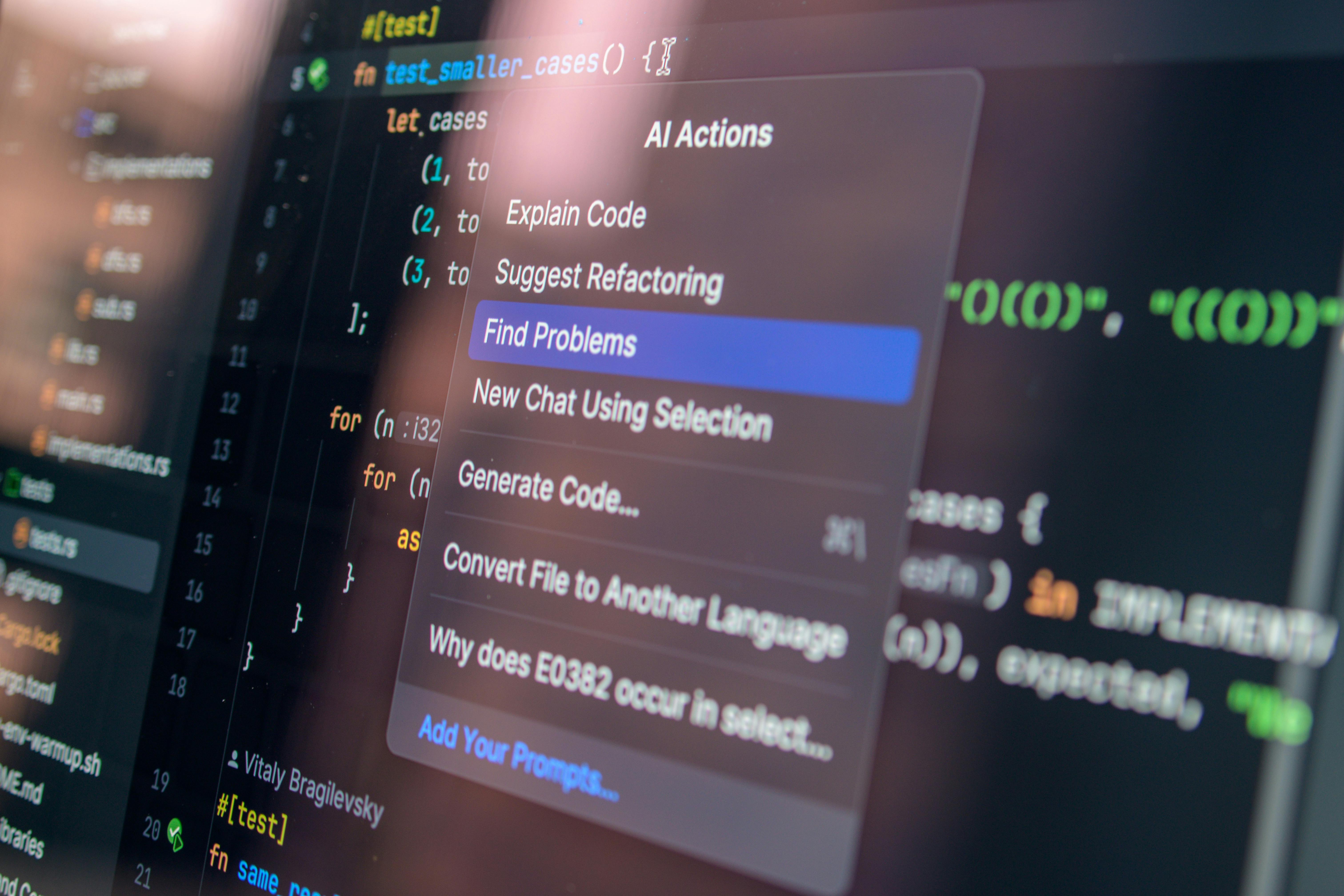

The colloquial term “vibe coding” has rapidly moved from an informal descriptor to a recognized, high-leverage workflow. It encapsulates the interactive process where an engineer describes an outcome—be it a complex system design, a class structure, or a specific function—to a large language model (LLM) and then refines the output based on an intuitive assessment of correctness, performance, and style, rather than through exhaustive, line-by-line specification. For a senior engineer, however, this interaction must be rigorously governed by fierce intentionality.

Deconstructing “Vibe Coding”: Intentionality Over Passivity

The core distinction between transformative speed and latent technical debt lies in the engineer’s cognitive posture. Passively accepting the first plausible output from an AI assistant is a direct path to introducing subtle, difficult-to-diagnose bugs or architecturally suboptimal solutions. The paradigm shift requires treating the AI as an exceptionally knowledgeable, yet occasionally overconfident, junior collaborator. Every suggestion is a hypothesis that demands human-led validation.
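
As a minimal sketch of what “human-led validation” of a hypothesis can look like, the Python below checks a hypothetical AI-suggested function against a deliberately simple reference implementation on randomized inputs. This technique is usually called differential testing. The function names (`reference_dedupe`, `dedupe_preserving_order`, `differential_test`), the input ranges, and the trial count are illustrative assumptions, not part of any particular workflow.

```python
import random


def reference_dedupe(items):
    """Trusted baseline: keep the first occurrence of each item, in order.

    Quadratic, but simple enough to be obviously correct.
    """
    result = []
    for item in items:
        if item not in result:
            result.append(item)
    return result


def dedupe_preserving_order(items):
    """Hypothetical AI-suggested version: linear time via dict insertion order.

    Treated as an unverified hypothesis until it passes the differential test.
    """
    return list(dict.fromkeys(items))


def differential_test(candidate, reference, trials=1000, seed=0):
    """Run both functions on the same random inputs.

    Returns the first input on which they disagree, or None if all trials agree.
    """
    rng = random.Random(seed)  # fixed seed so any failure is reproducible
    for _ in range(trials):
        data = [rng.randint(0, 20) for _ in range(rng.randint(0, 30))]
        if candidate(data) != reference(data):
            return data
    return None


if __name__ == "__main__":
    counterexample = differential_test(dedupe_preserving_order, reference_dedupe)
    if counterexample is None:
        print("suggestion accepted: matched the reference on all trials")
    else:
        print(f"suggestion rejected: mismatch on {counterexample}")
```

Suppose the candidate is swapped for something subtly wrong, such as `lambda xs: sorted(set(xs))`. The harness then returns a concrete counterexample input instead of `None`, which gives the reviewer evidence for rejecting the suggestion.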

This intentionality mandates constant, critical internal questioning that must accompany every generated block of code. Senior engineers are compelled to interrogate the AI’s choices:

- Why was a specific data structure chosen over alternatives?

- What are the measurable performance implications of this pattern when deployed at enterprise scale?

- Does this implementation strictly comply with established organizational security protocols and dependency policies?
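
The first two questions can often be settled with a quick measurement instead of intuition. The sketch below uses Python’s standard `timeit` module to compare membership checks in a `list` and a `set`, a data-structure choice an assistant might make without comment. The collection size, the repeat count, and the `compare_membership` helper are illustrative assumptions, and absolute timings will vary by machine.

```python
import timeit


def compare_membership(n=100_000, repeats=200):
    """Return (list_seconds, set_seconds) for `repeats` lookups of the last element."""
    data_list = list(range(n))
    data_set = set(data_list)
    target = n - 1  # worst case for the list: the scan must reach the final element

    list_seconds = timeit.timeit(lambda: target in data_list, number=repeats)
    set_seconds = timeit.timeit(lambda: target in data_set, number=repeats)
    return list_seconds, set_seconds


if __name__ == "__main__":
    list_seconds, set_seconds = compare_membership()
    print(f"list: {list_seconds:.4f}s  set: {set_seconds:.6f}s  "
          f"ratio: ~{list_seconds / set_seconds:.0f}x")
```

`in` on a list scans the elements one by one, while a set uses hashing. The list timing should therefore grow with `n`, and the set timing should stay roughly flat. A measured difference of this kind is a concrete answer to “why this structure?” in a review.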

Without this active, critical cognitive engagement, “vibe coding” rapidly degrades into “lazy coding.” This lazy approach substitutes superficial syntactic fluency for genuine engineering rigor. It poses a severe threat to product quality and ultimately undermines the career trajectory of the practitioner responsible for its deployment.

The Ninety-Five Percent Reality: How AI Augments the Engineering Output

The assertion that a significant majority of an engineer’s code, approaching ninety-five percent, is now authored by AI is not an admission of human obsolescence. It is a quantification of a profound productivity multiplier. The figure reflects a fundamental revaluation of what constitutes valuable engineering output. Previously, an engineer’s value was often correlated with committed lines of code, the complexity of hand-written algorithms, or the sheer volume of files modified. Today, the highest-leverage contributions lie in orchestrating complex systems and making precise high-level architectural decisions, while the mechanical implementation detail is efficiently offloaded.

The AI handles the laborious transcription of logical intent into executable syntax. Freeing these cognitive resources allows the human engineer to operate at a significantly higher altitude. A useful analogy is the transition from a manual laborer on a construction site to the site lead who owns the blueprints, manages complex logistical flows, and certifies structural integrity. The AI manages the bricklaying and concrete pouring; the human manages the *design* and *integrity*.

This high-leverage deployment allows an engineer to:

- Manage a significantly broader scope of work concurrently.

- Redirect focus toward deep exploration of emergent or novel technical challenges.

- Accelerate the product iteration cycle by an order of magnitude.

The raw volume of generated code is effectively commoditized. The real engineering craft now rests in the quality of the inputs (the prompts) and the rigor of the subsequent vetting process. High-volume output is valuable only if the inputs are good enough to direct that volume toward solutions that are both correct and strategically aligned with business objectives.

Mastering the Engine: Deep Foundational Knowledge for Effective Prompting

Whether an engineer meets frustration or breakthrough with advanced LLMs often depends on how well they understand the model’s underlying architecture and training history. Treating these systems as deterministic compilers or simple search engines sets engineers up for failure, because their outputs are probabilistic.

The Probabilistic Nature of Large Language Models: Training Stages

To effectively command an LLM, an engineer must internalize the multi-stage training pipeline that shapes its behavior. This process transforms a raw statistical engine into a coherent assistant.

- Stage 1: Self-Supervised Pre-Training: The model ingests a massive, generalized corpus of public data (books, code repositories, web content). This phase establishes an enormous, inherently probabilistic knowledge base, teaching the model the statistical likelihood of token sequences, i.e., how language *generally* flows.

- Stage 2: Supervised Fine-Tuning (SFT): The model is then trained on curated, high-quality, human-labeled examples of desired input-output pairs (e.g., problem-solution pairs in specific programming languages). This stage conditions the model on style, format, and instruction following. It teaches the model to structure answers in requested formats, such as producing only Python code blocks or structuring documentation as markdown. As of 2025, SFT is standard practice for task specialization in enterprise models.

- Stage 3: Reinforcement Learning from Human Feedback (RLHF): In this final layer, human reviewers rank model outputs on criteria such as helpfulness, safety, and utility. Those rankings train a reward model, which is then used to optimize the LLM so that its probabilistic predictions align with nuanced human preferences. This stage is essential for reducing harmful, biased, or contextually inappropriate suggestions.

Understanding these stages reveals why prompts often fail to achieve the desired result. A failing prompt has typically not steered the model away from its most statistically probable path, which it derived from foundational training but which is contextually incorrect.

Translating Nuance: Leveraging Training Insights for Precise Communication

The insights gleaned from the training pipeline directly inform prompt engineering strategy. If the model’s proficiency rests on patterns learned during SFT, the prompt should mimic the structure of the ideal training example the engineer wishes to emulate.

If the base training favors highly common, textbook patterns, the prompt must explicitly articulate any required deviation. For instance, a generic request for a sorting algorithm will likely yield the most statistically frequent implementation (e.g., Quicksort). However, if the production environment demands memory conservation over raw execution speed for that specific component, this constraint must be communicated. The model cannot know internal organizational limitations, dependency graphs, or team style guides unless they are stated.

Effective communication thus becomes an exercise in reverse-engineering the model’s learning: crafting an input that biases the output distribution toward a specific, constrained reality. This is achieved through:

- Providing Deep Context: Explaining the system’s environment, legacy constraints, and scaling targets.

- Offering Exemplars: Supplying high-quality input/output pairs (few-shot prompting) that demonstrate the desired architecture or style.

- Utilizing Negative Constraints: Explicitly stating what the model must not do is often as powerful as specifying the positive requirements. Such constraints keep deprecated patterns out of the output and protect non-functional requirements.

This disciplined approach ensures that the resulting code aligns with the demanding realities of building enterprise-scale software, moving far beyond mere textbook correctness.

Elevating Code Quality: Engineering for Scale and Robustness

The gulf separating a functional prototype from a production-grade system is defined by resilience under stress. In the age of AI-accelerated development, the diligence traditionally reserved for end-of-cycle testing must now be embedded preemptively into the generative workflow itself.

Proactive Stress Testing: Prompting for Extreme Scenarios and Constraints

Pre-AI development relied on iterative testing: exhaustive unit testing, integration validation, and code reviews focused on vulnerabilities. That testing must now be translated into the prompt sequence. The engineer must treat the AI as an eager collaborator that requires explicit guidance on failure modes.

A senior, promotion-worthy prompt goes beyond a simple request. It must function as a segmented design document:

“Draft the API endpoint for transactional data retrieval. Ensure rigorous input validation for all fields, specifically anticipating null values and malformed schema inputs that may bypass the client layer. Furthermore, explicitly model and implement graceful error handling for a simulated database connection timeout scenario, ensuring a standardized, traceable HTTP 503 response is returned. Finally, analyze the concurrency requirements and detail a mechanism for adaptive request throttling if sustained load exceeds one thousand operations per second.”

By demanding coverage for these non-obvious, hard failure cases in the initial generation, the engineer directs the LLM’s attention to scaling and resilience challenges at the moment of code inception. This conscious, proactive attention to scaling from “day one” is a non-negotiable prerequisite for code that can survive the operational demands of a massive production environment.

The Continuous Integration Mentality: Reviewing Output Incrementally

The dramatic speed gains offered by AI carry a significant risk: the temptation to defer quality assurance until a large block of generated code is complete. If an AI produces a thousand lines of logic from a multi-faceted prompt, reviewing the entire artifact only after generation is a recipe for systemic failure.

The central issue is error cascading. The model will treat an early, uncorrected flaw, whether syntactic or logical, as correct while it generates the code that depends on it. This embeds the error deeper. Debugging the final product then becomes far more complex than catching the error in the first ten lines would have been. The workflow must therefore mirror a continuous integration (CI) mindset applied at the micro-level of code generation.

The process requires immediate, critical assessment:

- After the AI produces a distinct logical unit (a function, a complex data transformation, or a class method), the engineer must immediately pause and review that unit as if it were a Pull Request from a junior peer.

- This immediate validation stops errors in their tracks, before they harden into systemic issues requiring massive, time-consuming refactoring.

Code that appears syntactically functional but harbors latent errors is uniquely dangerous. The mere presence of correct syntax can trick human intuition into accepting incorrect behavior. Frequent, early, and critical review is the essential safety mechanism that ensures velocity never compromises integrity.

Accountability in the Age of Automation: The Unwavering Necessity of Ownership

The highest tiers of technical leadership are defined above all by accountability, a responsibility that no automation or LLM can absorb on the engineer’s behalf. The technology may draft the syntax, but the committing engineer bears the absolute, final responsibility for every line of code committed to production. That includes the code that touches customer data, drives financial transactions, or manages critical infrastructure.

The Production Line of Responsibility: Why Ceding Control is Career-Limiting

Invoking the excuse “The AI suggested it” is not a viable professional defense; it is a definitive marker of failed engineering judgment. A tech lead is fundamentally expected to be the educated, final gatekeeper. In high-stakes scenarios, such as an outage at 3:00 AM, the only acceptable response involves immediate ownership and rectification, not deflection. This underscores why maintaining deep, foundational technical understanding remains non-negotiable.

While AI tools significantly lower the barrier to entry for code production, they do not reduce responsibility for the code’s correctness, security profile, or performance characteristics. Entrusting high-stakes operational tasks to an external, non-accountable entity represents a profound dereliction of senior engineering duties. The success or failure of the system is inextricably linked to the professional standing of the engineer. The capacity to deeply understand, defend, and rapidly remediate the code must therefore remain a strictly human function.

AI as the Ultimate Tutor: Leveraging Contextual Explanations for Deep Learning

Paradoxically, the very tools that risk obscuring understanding can become the most potent accelerators for mastering complex engineering concepts, provided they are wielded with strategic intent. If a segment of AI-generated code or a suggested architectural pattern appears unfamiliar, the engineer has an immediate, infinitely patient resource for clarification.

The superior technique uses context separation to facilitate true learning:

- Avoid Confined Explanation: Asking the model in the same thread to explain the code it just wrote often results in justifications confined to the local logic. Such answers fail to impart the broader conceptual foundation.

- Seek Theoretical Grounding: Open a new, clean session and instruct the model to explain the underlying theory or best practices. For example, a query such as “Explain the CAP theorem and its direct relevance to this specific in-memory distributed cache implementation we are deploying” moves the discussion from code justification to architectural first principles.

This approach leverages the AI as a context-aware tutor, allowing the engineer to rapidly bridge knowledge gaps. Closing those gaps reinforces the accountability required to truly own the committed artifacts. Mastery, fueled by AI-driven learning, becomes the ultimate enabler of safe, high-velocity innovation.

Strategic Tool Selection and Organizational Dynamics in Tech Giants

Operating within a major technology corporation, such as a global cloud provider or a dominant e-commerce platform, requires adept navigation of specific organizational mandates. These mandates often run parallel to, and sometimes in tension with, the rapidly evolving external tool landscape.

The Internal Mandate vs. The External Standard: Navigating Tool Ecosystems

While the general utility and performance benchmarks of external, commercially available LLMs can be compelling, internal engineering practices are invariably governed by specific mandates related to security posture, data governance, and regulatory compliance. In late 2025 and into 2026, major technology firms have increasingly standardized on proprietary, in-house AI coding assistants that have undergone rigorous internal vetting against these strict security policies.

Senior engineers must master the challenge of operating within these defined technical boundaries. This involves understanding the rationale behind the discouragement or outright prohibition of third-party tools for production workloads, even when those external tools appear superior in general testing. This organizational friction, balancing velocity against sanctioned environments, is a key problem for modern technical leadership.

Solving this challenge requires a dual approach:

- Advocacy: Presenting data-driven arguments to leadership that demonstrate the value proposition and security assurances of external alternatives that could be approved, where applicable.

- Adaptation: Crucially, possessing the technical agility to extract equivalent or superior performance from the approved internal toolsets, thereby adapting the high-velocity workflow to the sanctioned environment.

The core skill is maintaining the velocity that AI enables while rigorously adhering to the organization’s governance structure.

The Collaboration Velocity: Staying Relevant in an AI-Accelerated Peer Group

In environments where leadership actively fosters AI tool adoption, near-universal uptake among immediate technical peers creates an inescapable gravitational force. Colleagues may consistently increase their velocity by tackling larger scopes, completing code reviews in less time, or drastically reducing debugging overhead. In that setting, resisting the technology becomes a direct professional liability.

This dynamic transcends mere speed; it affects the core fabric of team collaboration. Code reviews become friction points when reviewer and author work from different assumptions. One party may scrutinize AI-generated blocks as if they were hand-written legacy code, while the other has submitted a proposal drafted entirely by AI. Furthermore, engineers who consciously avoid direct “vibe coding” still passively ingest AI outputs. These arrive via AI-generated code comments, automated security scan reports enriched by AI analysis, and AI-drafted architectural suggestions in design documents.

To collaborate effectively and integrate seamlessly into pair programming or design sessions, fluency in the assumptions of the new AI-augmented style is mandatory. This fluency is maintained only through consistent, engaged interaction. The demonstrable velocity of the team establishes the effective baseline for individual performance. As of early 2026, continuous interaction with these new paradigms is therefore a necessary condition for sustained relevance in any fast-moving technical unit.

Conclusion: The Future Technologist as an AI Orchestrator

Synthesizing Skillsets: The New Core Competencies for Senior Roles

The rapid promotion trajectories observed in engineers successfully pivoting to building and utilizing AI-native products are not random occurrences; they are direct signals of a redefined competency matrix for senior technologists. The focus is migrating away from granular, manual mastery of a single language or framework toward a more abstract, orchestrational skillset.

The new prerequisites for technical leadership in this era coalesce around a synthesis of three distinct competencies:

- Deep Understanding of AI Principles: Mastering the “why” and “where it fails” of the underlying LLM mechanics (Pre-training, SFT, RLHF).

- Expert Product Sense for AI Integration: Applying engineering judgment to determine “what to build” with AI tools for maximum customer and business value.

- Unwavering Commitment to Engineering Accountability: Establishing the processes for “how to ship safely,” ensuring human oversight remains the final fiduciary layer.

The senior engineer of this epoch is no longer primarily defined as the individual who writes the most elegant hand-coded solution, but as the individual who can most effectively direct the most complex, AI-assisted development effort—architecting the scaffolding that enables probabilistic systems to reliably deliver deterministic, scalable business outcomes. This synthesis represents the new standard of technical authority.

Final Reflection on Speed, Scale, and Sustainable Innovation

Ultimately, success in this advanced coding paradigm hinges upon a calibrated balance among three perpetually competing forces: speed, scale, and sustainability. AI tools provide unprecedented speed, facilitating rapid hypothesis exploration and development cycle acceleration. The proven path to promotion is paved by demonstrating the ability to apply this speed to systems that operate at massive scale, servicing millions of users or processing colossal data volumes.

However, speed and scale collapse without sustainability. Sustainability means the human rigor of deep conceptual understanding, the disciplined cadence of continuous, granular review, and the resolute acceptance of total ownership. The most advanced practitioners harness the technology’s accelerating power while simultaneously imposing robust, human-driven guardrails. Their objective is not merely to code faster, but to architect the correct solutions in the correct manner. That discipline lets them transition from high-output individual contributors to engineering leaders. As leaders, they enable entire product domains to evolve responsibly while sustaining rapid innovation. This is the blueprint for success in the most advanced technology organizations of this new era.
