OpenAI Secures \$110 Billion in Historic Funding, Forging Deep Strategic Ties with Amazon and Nvidia to Define the Next Era of AI Infrastructure

The landscape of artificial intelligence development experienced a seismic shift on February 27, 2026, as OpenAI confirmed a staggering \$110 billion private funding round. This infusion, one of the largest private capital raises in technology history, vaults the company’s pre-money valuation to an estimated \$730 billion. The monumental capital commitment is spearheaded by an alliance involving cloud supremacy titan Amazon, semiconductor powerhouse Nvidia, and Japanese investment conglomerate SoftBank. This move is not merely a cash injection; it represents a fundamental realignment of compute supply, enterprise distribution, and research acceleration, signaling a collective belief from these titans that the path to frontier Artificial General Intelligence (AGI) is now defined by infrastructure scale as much as model architecture. The sheer scale of the investment, more than doubling the firm’s previous record round of \$40 billion in March 2025, validates the escalating capital requirements for pushing the bleeding edge of intelligence.
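As a rough check on these figures, and assuming the standard convention that post-money valuation equals pre-money valuation plus new capital raised (the announcement as described here states only the pre-money figure), the arithmetic works out as follows:

$$
V_{\text{post}} = V_{\text{pre}} + I = \$730\text{B} + \$110\text{B} = \$840\text{B}, \qquad \frac{\$110\text{B}}{\$40\text{B}} = 2.75
$$

The implied post-money figure of roughly \$840 billion is an inference rather than a reported number; the 2.75x ratio is consistent with the round being more than double its \$40 billion predecessor.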

The Strategic Realignment: Cloud Supremacy and Compute Power Plays

The financial pact is inextricably woven with deep, multi-year operational agreements, pivoting OpenAI’s distribution and compute strategy toward its key partners. This deep partnership transcends a typical vendor-client relationship; it establishes a strategic framework for provisioning and distributing next-generation AI capabilities to the global enterprise world. The implications for market share in enterprise technology adoption are immediate and profound.

Establishing Amazon Web Services as the Exclusive Enterprise Gateway

A cornerstone of the newly forged alliance mandates that Amazon Web Services (AWS) will serve as the exclusive third-party cloud distribution partner for the newly unveiled enterprise platform, codenamed ‘Frontier’. The Frontier platform is engineered specifically to empower organizations to construct, manage, and deploy sophisticated, interconnected teams of AI agents. By securing this exclusivity for Frontier, AWS instantly elevates its standing in the burgeoning enterprise AI services market. This offers businesses a bespoke, highly optimized pathway to production-scale agentic workflows, a path that immediate rivals cannot readily replicate. This strategic positioning aims to make Frontier the definitive platform for enterprises moving beyond simple model querying to complex, autonomous operations.

The Deep Integration of Customized Model Development

Beyond the foundational layer of infrastructure provision, the partnership involves a direct, collaborative commitment to tailoring the intelligence itself. OpenAI and Amazon have committed to jointly developing bespoke artificial intelligence models. These customized models are slated for direct integration into the cloud provider’s vast suite of customer-facing applications. This integration will touch critical areas ranging from e-commerce interfaces to global logistics management systems, ensuring that Amazon’s massive operational requirements are met with models specifically fine-tuned for its unique data structures and customer interaction patterns. This represents a significant internal capability advantage for Amazon, which is expected to cascade into superior service offerings for all its AWS customers.

A Decade-Spanning Compute Capacity Agreement

Cementing the infrastructure dependency is a massive augmentation of a prior commercial understanding. The existing multi-year agreement between the two entities is being expanded by an additional commitment projected to span a full decade, involving expenditures expected to total \$100 billion. This decade-long commitment provides the AI developer with unparalleled long-term financial certainty regarding its computational scaling needs. Simultaneously, it locks in a guaranteed, high-volume revenue stream for the cloud provider, creating a deeply symbiotic and mutually beneficial infrastructure moat designed to weather the escalating costs of frontier AI development.

The Dawn of Persistent Autonomy: Solving the Stateless AI Dilemma

Perhaps the most profound technological announcement accompanying the financial news was the introduction of a novel operational environment designed to resolve a core limitation of contemporary artificial intelligence interactions: the pervasive lack of persistent memory. This new environment signals a paradigm shift from merely accessing powerful models via one-off calls to effectively deploying them in continuous, real-world operational contexts.

The Critical Bottleneck of Short-Term Memory in Agentic Systems

Historically, deploying advanced models for complex, multi-step tasks was severely hindered by their inherent stateless nature. Each query or step in a workflow was treated as an isolated event, forcing developers to construct cumbersome, external scaffolding—custom memory layers, external databases, and complex orchestration logic—to stitch together context across interactions. This method, often described as “jury-rigging,” proved expensive, slow, and inherently unreliable, especially for long-running projects or sensitive, permissioned workflows. This technological gap was widely recognized as the main operational hurdle preventing true agentic functionality from moving reliably into high-stakes enterprise environments.

Defining the Core Architecture of the Stateful Runtime Environment

The new Stateful Runtime Environment, jointly engineered by OpenAI and AWS, is explicitly designed to eradicate this limitation by providing capabilities native to the execution layer. This environment integrates persistent orchestration, secure execution contexts, and, critically, working memory directly into the workflow management system. Architecturally, it allows AI agents to seamlessly access elements such as long-term context, transactional memory, and system identity, enabling them to remember previous actions and decisions across sessions that can span days or even weeks. This capability fundamentally transforms AI agents from transactional tools into persistent collaborators capable of managing ongoing, complex projects.
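To make the idea of working memory that survives across sessions concrete, here is a minimal Python sketch. It is not the Stateful Runtime Environment's API, which this article does not describe; `SessionStore`, `run_session`, and the JSON-file layout are hypothetical names invented purely for illustration. The sketch shows only the general pattern: state is keyed by an agent identity and persisted outside the process, so a later session can recall what an earlier one did.

```python
import json
import tempfile
from pathlib import Path


class SessionStore:
    """Hypothetical file-backed working memory, keyed by agent identity."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def _path(self, agent_id: str) -> Path:
        return self.root / f"{agent_id}.json"

    def load(self, agent_id: str) -> list[str]:
        """Return every event remembered for this agent (empty if none)."""
        path = self._path(agent_id)
        if not path.exists():
            return []
        return json.loads(path.read_text())

    def append(self, agent_id: str, event: str) -> None:
        """Persist one more event to the agent's history."""
        history = self.load(agent_id)
        history.append(event)
        self._path(agent_id).write_text(json.dumps(history))


def run_session(store: SessionStore, agent_id: str, action: str) -> list[str]:
    """Simulate one session: record the action, then return the full history."""
    store.append(agent_id, action)
    return store.load(agent_id)


if __name__ == "__main__":
    root = Path(tempfile.mkdtemp())
    # Two separate SessionStore objects stand in for two separate sessions.
    run_session(SessionStore(root), "reconciler", "matched invoice 1")
    print(run_session(SessionStore(root), "reconciler", "flagged invoice 2"))
```

In a stateless setup, the second call would know nothing about the first unless the developer wired up this kind of store by hand; the article's claim is that the new runtime provides the equivalent natively.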

Unlocking Production-Grade Agent Capabilities via Amazon Bedrock

The groundbreaking innovation of the Stateful Runtime is being made immediately accessible through AWS’s foundational model access service, Amazon Bedrock. This ensures that its power is instantly available to a massive, established base of developers already utilizing the platform for their generative AI endeavors.

Seamless Orchestration Across Multiple Software Tools and Data Stores

A key functional enhancement provided by this stateful architecture is the inherent capability for agents to operate coherently across disparate enterprise systems. The environment is engineered to facilitate agentic workflows that naturally necessitate interfacing with multiple software tools, accessing various internal and external data sources, and coordinating inputs and outputs across these varied endpoints—all without requiring the developer to code the continuity logic from scratch. This abstract layer of continuity simplifies agent development immeasurably, allowing focus to shift from infrastructural plumbing to core application logic.

Leveraging Native Cloud Governance and Security Postures

A major incentive driving enterprise adoption is the environment’s native integration within the customer’s existing cloud infrastructure. By running statefully within a customer’s specific AWS environment, the runtime automatically inherits the required security posture, compliance framework, and existing tool integrations already established by the enterprise. This ‘out-of-the-box’ adherence to governance and security rules significantly lowers the barrier to entry for regulated industries looking to deploy powerful, autonomous AI agents into sensitive operational areas. The environment promises better observability and governance with native logging, replay, and audit facilities tailored for compliance needs.

The Practical Advantage for Complex, Multi-Step Enterprise Workflows

The real-world implications of this advancement are transformative for enterprise use cases that demand reliability over extended periods. Consider sophisticated tasks such as automated financial reconciliation, complex, multi-stage customer service resolution spanning several departments and system accesses, or large-scale data analysis projects that execute over multiple days. For these applications, the ability for the AI agent to maintain context, resume safely after an interruption, and coordinate approvals across systems is the crucial difference between a mere proof-of-concept and a mission-critical deployment. This new environment delivers the reliability previously unattainable without extreme, costly custom development efforts.
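The "resume safely after an interruption" property can be illustrated with a small checkpointing sketch in Python. This is an assumption-laden toy, not how the runtime is implemented: `Checkpoint`, `run_workflow`, and the step names are hypothetical. It shows the general technique of recording each completed step so that a rerun skips finished work instead of repeating it.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Checkpoint:
    """Hypothetical record of which workflow steps have already finished."""
    completed: list[str] = field(default_factory=list)


def run_workflow(
    steps: list[tuple[str, Callable[[], None]]],
    checkpoint: Checkpoint,
) -> None:
    """Run steps in order, skipping any already recorded in the checkpoint.

    If a step raises, the exception propagates and the checkpoint keeps
    only the steps that finished, so a later call resumes from there.
    """
    for name, action in steps:
        if name in checkpoint.completed:
            continue  # finished in an earlier run; do not repeat it
        action()
        checkpoint.completed.append(name)


if __name__ == "__main__":
    log: list[str] = []
    state = {"interrupt": True}

    def match_entries() -> None:
        # Fails once to simulate an interruption, then succeeds.
        if state["interrupt"]:
            state["interrupt"] = False
            raise RuntimeError("interrupted")
        log.append("match")

    steps = [
        ("fetch", lambda: log.append("fetch")),
        ("match", match_entries),
        ("approve", lambda: log.append("approve")),
    ]
    cp = Checkpoint()
    try:
        run_workflow(steps, cp)
    except RuntimeError:
        pass  # first attempt stops at the "match" step
    run_workflow(steps, cp)  # second attempt resumes without refetching
    print(log)
```

For a task like financial reconciliation, the point of the design is that the fetch step runs exactly once even though the workflow was started twice.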

The Shifting Dynamics of the Global Compute Landscape

The massive financial commitment is inseparable from an equally massive commitment of physical computational resources, particularly concerning the hardware underpinning model training and high-volume inference. This signals a direct strategic challenge within the hardware ecosystem, aimed at diversifying reliance away from a single dominant supplier.

The Strategic Consumption of Amazon’s In-House AI Silicon

A vital component of the deal mandates that OpenAI will consume a substantial two gigawatts (GW) of computing capacity, specifically powered by AWS’s proprietary artificial intelligence accelerators. This capacity is provisioned across the current generation of Trainium chips and the anticipated next-generation Trainium4 models. This commitment provides a guaranteed, high-volume purchaser for AWS’s custom silicon, effectively subsidizing the research and development required to build a competitive alternative in the accelerator chip market. This funding underpins Amazon’s long-term objective to foster a more diverse and competitive AI accelerator ecosystem by scaling up its own hardware offerings.

The Impact on the Competitive Arena Against Established Model Providers

The ability to secure such a long-term, high-volume commitment for compute capacity is now recognized as the defining factor for maintaining a competitive advantage in the race for advanced intelligence. As industry leaders acknowledge, the coming period will be shaped by the capacity to scale infrastructure rapidly enough to meet escalating global demand. This financial and operational alignment gives OpenAI a secure pathway for scaling its most advanced workloads, hardening its position against fast-moving challengers in the artificial intelligence space, including those rapidly developing competing large models.

Assessing the Current Scale of Global AI Adoption

The unprecedented scale of this investment rests on concrete, real-world adoption data showing a level of reach and engagement rarely seen for a new technology platform, which backers cite as justification for the magnitude of the capital deployment.

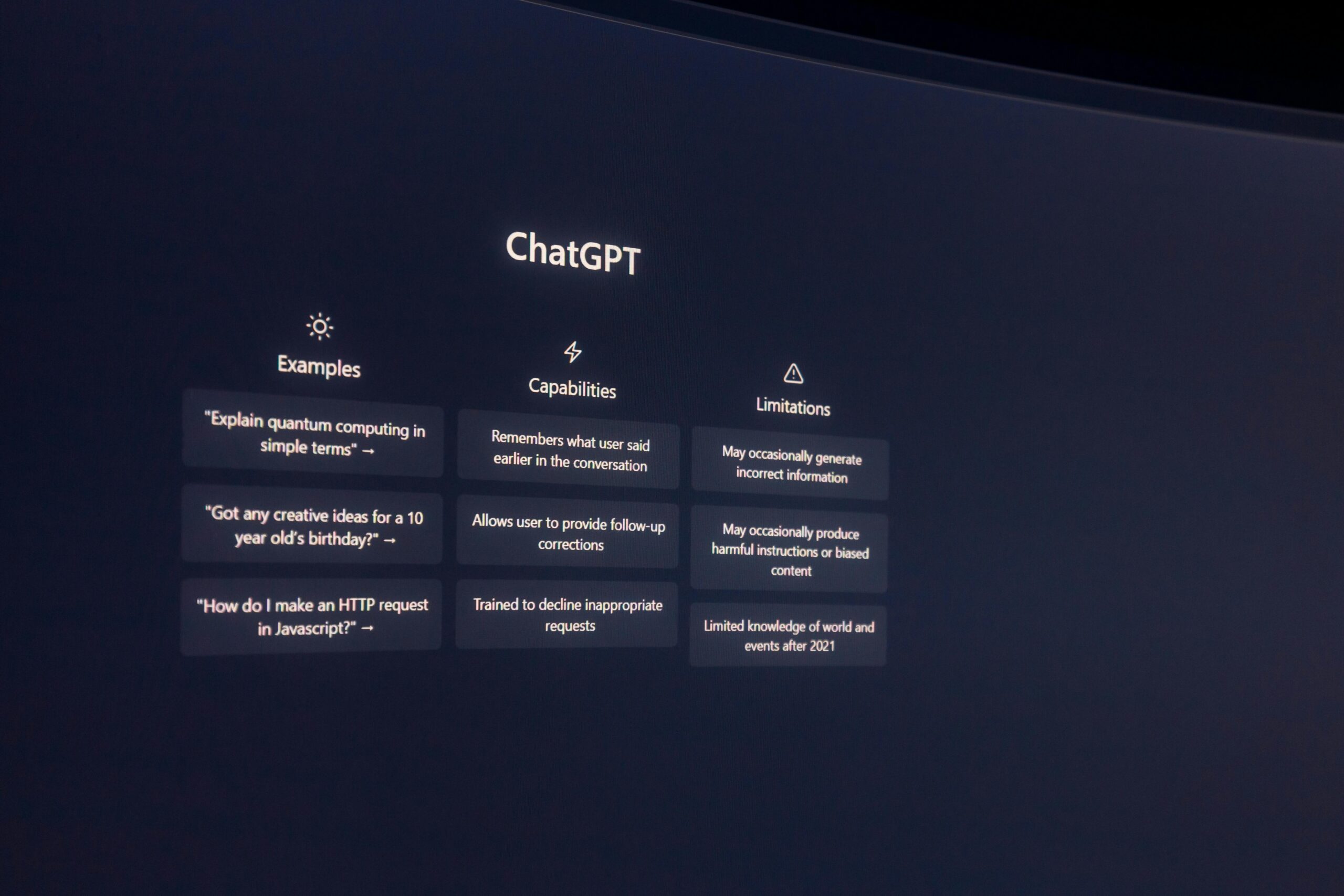

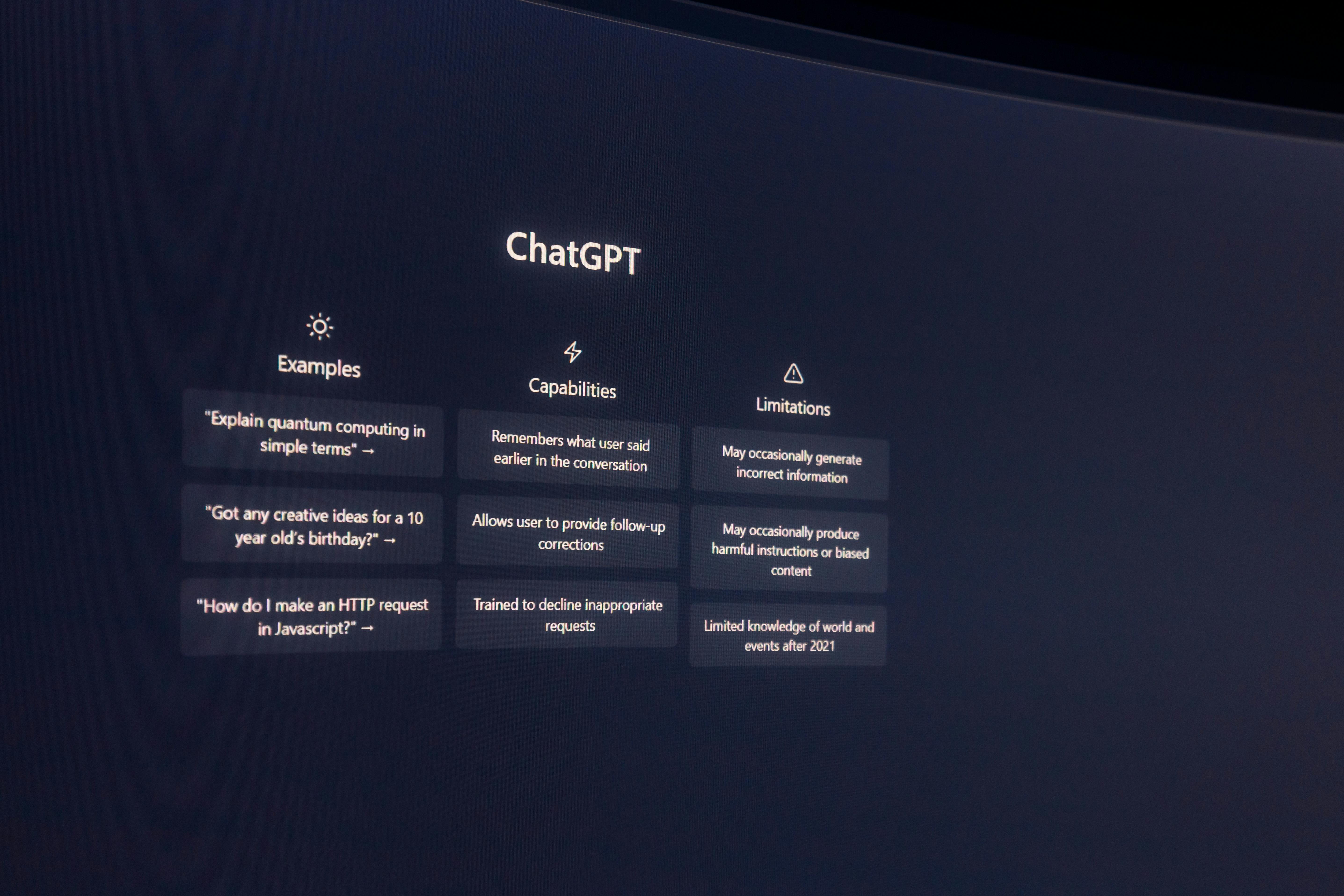

The Explosive Growth in Consumer Engagement with Conversational AI

User metrics paint a clear picture of a product that has become deeply integrated into the daily routines of hundreds of millions of people globally. The user base for the flagship conversational interface, ChatGPT, has scaled to exceed 900 million weekly active users as of February 2026. Furthermore, monetization efforts show robust uptake, with over 50 million consumer subscribers paying for premium features and faster access, indicating a strong willingness to pay for superior performance and advanced capabilities. Subscriber momentum reportedly accelerated meaningfully to start 2026, with January and February on track to be the largest months for new sign-ups in the company’s history.

The Maturation of Professional Use Cases Across Global Commerce

The professional adoption rate is equally compelling, with over nine million paying business organizations now relying on the platform as an integral part of their daily operations. This adoption extends far beyond simple query-response into complex productivity enhancement tools. Weekly users of its specialized coding assistant, Codex, have tripled since the start of the year to well over 1.6 million. This data indicates that the transition from novelty to essential utility across a vast spectrum of commercial activities is well underway, supporting the decision to invest capital at this unprecedented scale. The company projects total revenue of over \$280 billion by 2030, largely split between its consumer and enterprise segments.

Navigating the Web of Existing and Evolving Industry Alliances

In an industry defined by aggressive strategic partnerships, the size and nature of this new \$110 billion agreement necessitate a clear articulation of how it interacts with pre-existing, critical relationships, ensuring stability while pursuing new strategic advantages.

Reaffirmation of Foundational Commercial Partnerships

Despite the dramatic new alignment with Amazon, the company explicitly stated that the terms and nature of its foundational partnership with Microsoft remain entirely unchanged. This existing relationship, which covers intellectual property licensing, commercial agreements, and revenue-sharing structures for the API services, continues to be strong and central to the company’s operational and commercial strategy moving forward. This arrangement illustrates a carefully managed, multi-cloud reality for frontier AI deployment, with Azure remaining the exclusive home for the core, stateless OpenAI APIs, while AWS handles the new stateful, agent-focused Frontier platform.

The Broader Significance for the Path Toward Artificial General Intelligence

Ultimately, the collective action of these investors and partners signals a broad industry consensus that the current phase of artificial intelligence development—the industrialization of compute and agentic workflows—is the crucial precursor to achieving more generalized and capable systems. The massive funding and infrastructure guarantees are intended to accelerate research and ecosystem expansion in a focused, high-output manner. The stated mission underpinning these capital decisions remains the drive to ensure that the eventual realization of highly advanced AI benefits all of humanity, with the current investment serving as the necessary rocket fuel for the next phase of that ambitious journey.