Undercurrents of User Discontent and Platform Inertia

While the executive narrative demands we accept the “new equilibrium,” the contemporaneous market data suggests a significant, unspoken counter-narrative—a form of passive resistance brewing at the user level. The optimism from the top is being actively undercut by the reality on the desktop and in the mobile experience.

Evidence of End-User Resistance in Core Operating Systems

Despite the official narrative of widespread, mandated diffusion, market data suggests a powerful, unspoken pushback against the *forced* integration of these new AI features into core operating environments. Reports indicated a staggering volume of personal computing devices—millions, if not billions, across large installed bases—remained on a legacy operating system version, even when users were technologically eligible for the newer iteration heavily saturated with the company’s most advanced, AI-centric features. This user inertia is a powerful, non-verbal protest. It points toward a deep-seated preference for stability, known functionality, and predictable performance over the perceived risks, learning curve, or underdeveloped utility of the mandated AI enhancements. Users are voting with their inaction. They are implicitly demanding proof that the upgrade is worth the potential loss of familiarity and the unknown costs of debugging new, immature features. They are refusing to become unpaid beta testers for a system they don’t yet trust.

Widespread Reports of Functional Deficiencies in Current Implementations

The theoretical promise of AI amplification often clashes violently with the practical reality of its current deployment within the corporation’s own product ecosystem. For many users attempting to leverage the integrated AI assistants in common productivity applications, the experience is reported to be inconsistent at best. What are the concrete failures?

This gap between the theoretical power and the practical delivery is the source of the current industry anxiety: If the flagship products themselves can’t reliably perform basic advertised tasks, how can the industry expect to secure the massive, multi-year capital investment required for the *next* generation?

The Contradictory Messaging Echoing from Leadership

The tension between the executive’s public directive and the private reality of engineering struggles surfaced in a moment of high irony that observers immediately seized upon as proof that the quality crisis has not, in fact, gone away.

The Unintended Re-emergence of Derogatory Terminology

Just weeks after campaigning to retire the pejorative label for low-quality output, the CEO appeared to contradict his own directive during a high-profile industry tour designed to rally support. While discussing the future of agentic AI and its integration into augmented workflows, the executive used a term strikingly similar to the one he had just campaigned to retire from the lexicon. The specific phrasing, which circulated widely on social platforms, centered on the imperative that **“Nobody wants anything that is sloppy in terms of AI creation.”** This apparent slip immediately drew sharp attention. It highlighted a deep, internal tension between the desired public narrative (“We are moving past the low-quality era”) and the unavoidable, gritty reality of the current engineering benchmarks. When a leader uses a word implying poor quality or sloppiness to describe what users are seeing, it confirms the user experience. It’s hard to tell the world to stop using a word when you yourself use its close cousin to describe the very engineering problem you need to solve.

The Irony of Warning About the Previously Dismissed Quality Threshold

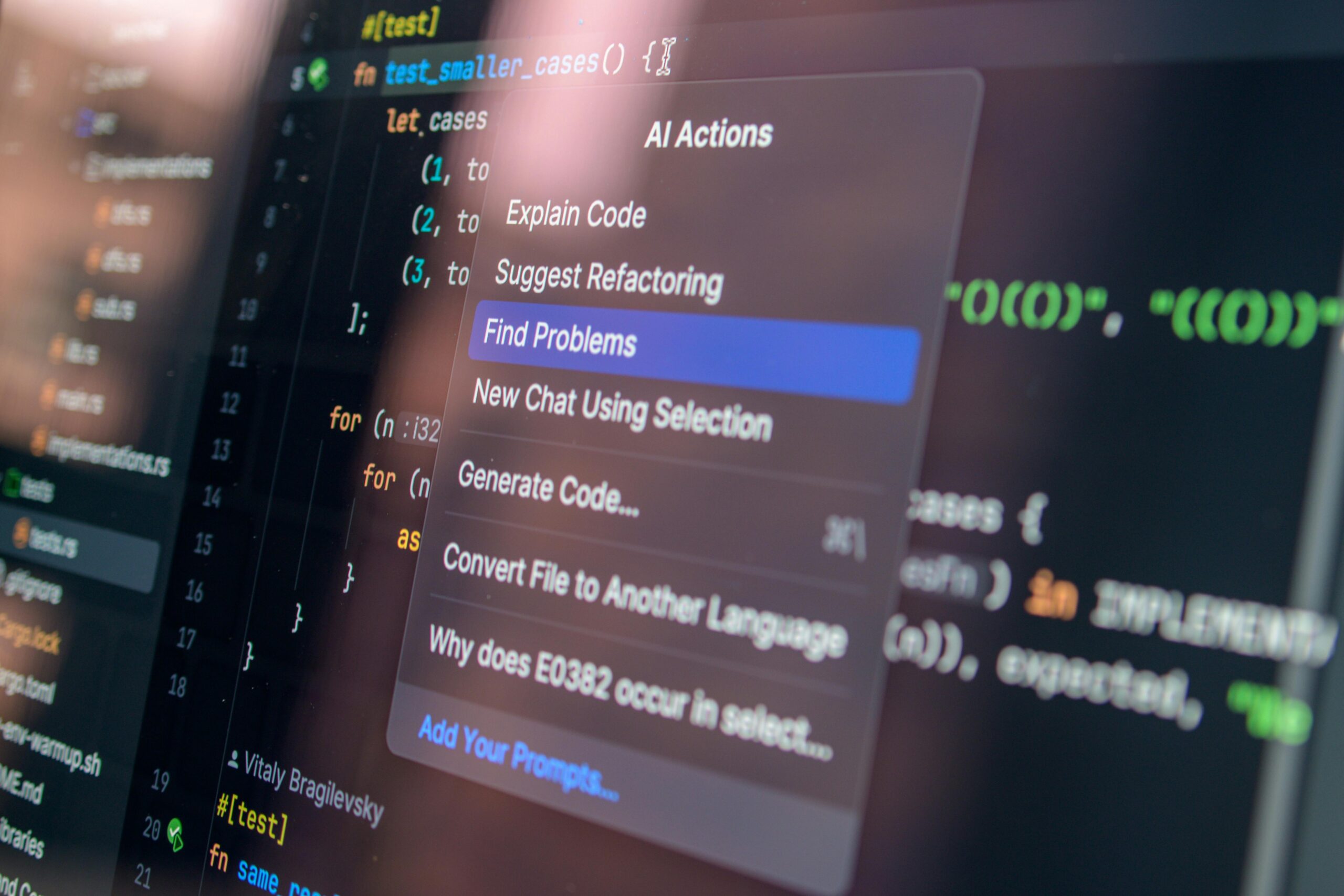

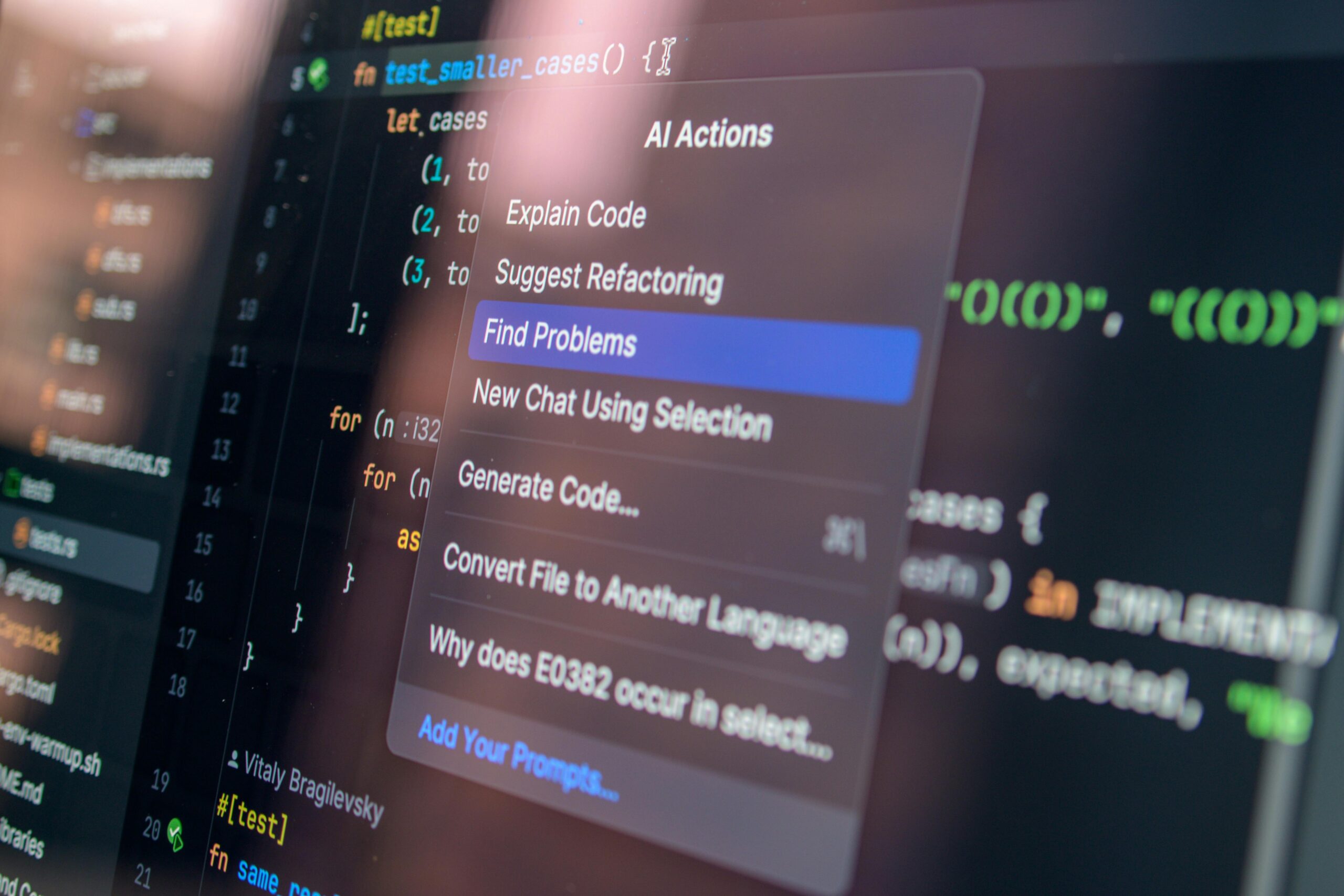

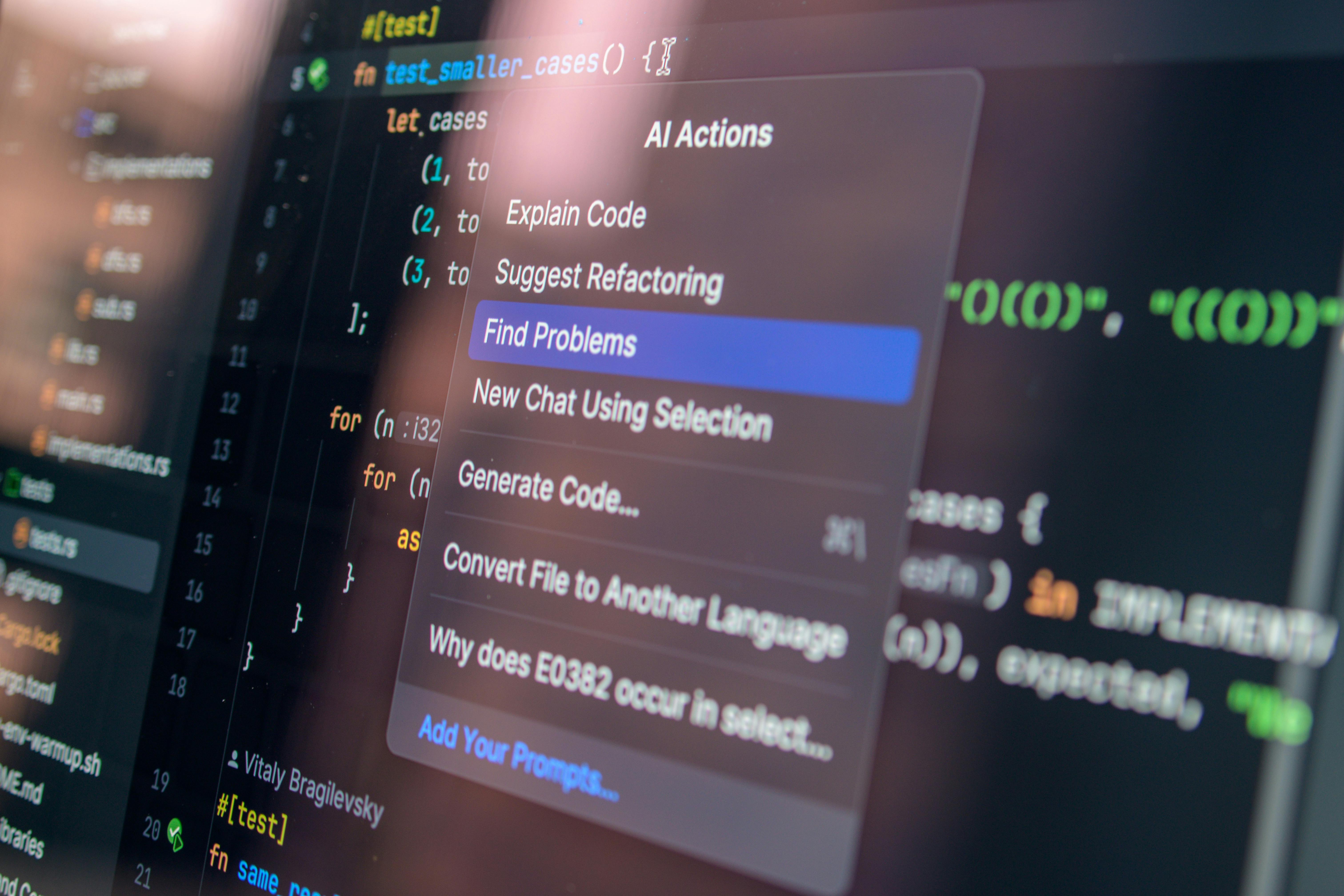

This verbal misstep was quickly recognized as a powerful indicator of the lingering, unresolved quality crisis. The irony was palpable: after publicly demanding that the industry move past the critique of low-quality output, the CEO used a synonym to describe precisely what users were experiencing and what the engineers were striving, often in vain, to eliminate. This moment underscored the immense pressure on the company to transition from showcasing *potential* to delivering *flawless, trusted execution*. This pressure is so intense that it is visible even in the product interface itself. Consider the omnipresent, albeit less prominent, on-screen warnings during product demonstrations advising users to **“Check for mistakes”** even in simple command-line interactions. A system that requires a mandatory, explicit warning for error-checking is, by definition, not yet a dependable enterprise tool. This is the core challenge that the required **engineering sophistication** must address.

The Strategic Imperative for Engineering Sophistication

The executive’s vision, despite the contradictory slip-up, lays out a clear strategic imperative: we must now prioritize the difficult work of making AI reliable over the easier work of making it flashy.

The Role of Engineering Rigor in Achieving Real-World Value

Bridging the gap between the current state of probabilistic output and the desired future state of reliable AI will demand a significant escalation in technical discipline—what the executive termed **“engineering sophistication.”** This suggests that the next wave of real progress is less about discovering entirely new model architectures (the foundational LLMs) and more about the painstaking, often unglamorous work of building the *connective tissue*. This connective tissue includes:

This infrastructure transforms a promising, but fundamentally probabilistic, model into a dependable enterprise tool. This effort is framed as the key to extracting *sustainable, measurable value* from the massive prior investments in foundational research. If you are interested in the technical aspects of making this leap, you can look into the challenges involved in **cloud infrastructure** scaling for AI workloads.

The Necessity of Proving Value to Justify Resource Consumption

Beyond mere product quality, there is an underlying, pragmatic financial and resource-based argument embedded in the call for meaningful impact. The colossal financial outlays required to build and power these large models (the spending on data centers, GPUs, and specialized talent) create an undeniable accountability challenge for the entire sector. The continuation of these capital expenditures and the consumption of scarce energy resources (the **“compute”**) requires a consensus that the benefits accrued significantly outweigh the costs. This consensus is the true definition of **social permission**. It is also why industry leaders are so keen to move past pilot programs: research indicates that in 2025, a staggering 95% of organizations reported zero return on investment from their initial generative AI projects. The market is waiting for a signal that this investment is translating into actual growth, not just hype.

Broader Sector Implications and the Search for Societal Permission

The struggle of a single leading firm to shape this discourse reflects a wider industry anxiety. The narrative around the perceived failure of mass-market AI to immediately deliver on its grandest promises has repercussions far beyond one company’s stock price.

The Interplay Between Corporate Ambition and Public Skepticism

The industry is engaged in a high-stakes race to prove its worth before the initial fervor dissipates entirely, leaving behind only the negative residue of unusable content and broken promises. Over-promising can easily lead to a sustained cooling of enthusiasm, regulatory backlash, or, worse, a prolonged period where the technology is dismissed as another overhyped bubble, similar to the cautionary tales of the early 2000s **dot-com bubble**. CEOs are acutely aware that skepticism, once hardened, is difficult to reverse. They must find a way to balance ambitious long-term visions with the demonstrable, near-term utility that satisfies both the quarterly earnings report and the skeptical end-user. Building trust—which often means transparently admitting current limitations—is now the most valuable asset the industry can cultivate.

Navigating the Spectrum of Employment Impact and Ethical Deployment

The evolving narrative is intrinsically linked to profound, unresolved questions about the future of work and ethical deployment boundaries. While some segments of the industry spent 2024 warning of mass displacement in white-collar roles, the current reality in February 2026 suggests that most deployed AI acts as a partial supplement, requiring human validation. The choices made by leading firms right now regarding where and how to responsibly deploy their most powerful agents—whether they are designed to truly *amplify* human workers or merely *mask* underlying operational inefficiencies—will set critical precedents for the entire technology landscape. Will AI become a partner that elevates human judgment, or simply a mechanism for corporate cost-cutting that erodes professional expertise? The executive mandate is clear: the industry must choose the former to secure its future.

Key Takeaways and Actionable Insights for Navigating the Pivot

The call from the top tier of technology leadership is loud and clear: the era of debating the quality of basic AI output is over. We are entering the phase of integration, infrastructure, and proven impact. For professionals, developers, and decision-makers alike, this moment demands specific actions.

Actionable Insights for the Road Ahead

To successfully ride this new wave of mandated evolution, focus your energy where the industry is now directing its resources:

The challenge of 2026 is not to build a better chatbot, but to build a trustworthy partner. The industry has been given its executive mandate. Now, the real work—the unglamorous, sophisticated, high-stakes engineering required to earn that **social permission**—must begin. We’ve covered the CEO’s philosophical push and the engineering demands. What do *you* see as the single biggest obstacle to achieving that promised “new equilibrium” in your day-to-day work? Let us know in the comments below. Your input helps shape the conversation that matters now.
